NVIDIA NeMo

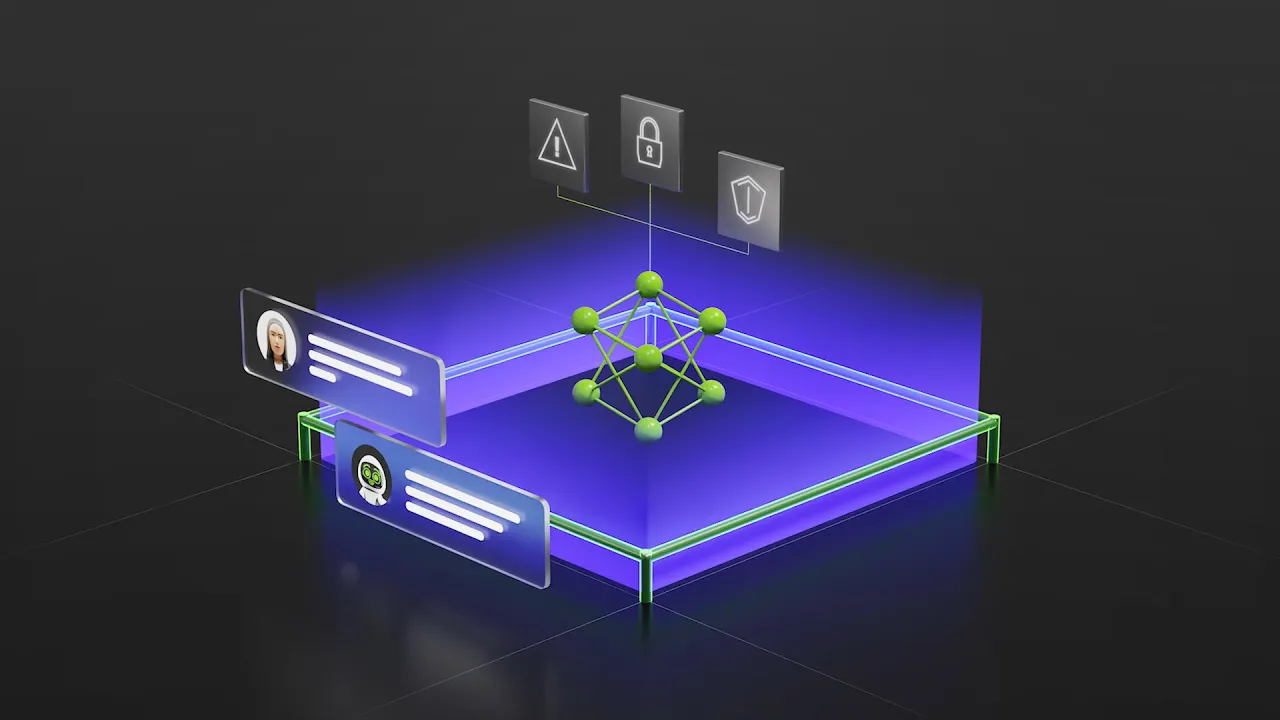

A comprehensive toolkit to build, monitor, and optimize AI agents across their lifecycle at enterprise scale.

Overview

What Is NVIDIA NeMo?

NVIDIA NeMo™ is a comprehensive toolkit for managing the AI agent lifecycle. It includes open libraries and microservices for data processing, data generation, model fine-tuning and evaluation, reinforcement learning, speech, safety, and agent observability. Use NeMo to customize NVIDIA Nemotron™ and other models to build production-grade, specialized agentic systems tailored to your domain needs and data.

It integrates with existing AI platforms and supports cloud, on-premises, and hybrid deployments.

Features

Tools for Managing the AI Agent Lifecycle

The AI agent lifecycle is an end-to-end process for developing and improving AI agents in production applications. NVIDIA NeMo provides tools for each step of this workflow, so enterprises can build specialized agents that are powerful, secure, and continuously learning.

| Stage | Step | Description |
|---|---|---|
| Build | Prepare AI-ready data | Process existing multimodal datasets into high-quality, AI-ready formats for development pipelines, and generate synthetic data to close critical data gaps. |
| Build | Select the right model | Pick or build models suited to the use case: selecting from open Nemotron models, other open or proprietary options, or training from scratch. Validate with evaluation runs, and fine-tune as needed. |
| Build | Build your AI agent | Profile and optimize agentic workflows across frameworks, with built-in performance analysis, bottleneck detection, evaluation-driven RL tuning, and interoperability with LangChain, LlamaIndex, and other agent ecosystems. |
| Deploy | Deploy your agent with maximum performance | Optimize your agent for production with high-throughput, low-latency inference, ensuring it can scale to meet enterprise demands and deliver fast, reliable responses. |
| Deploy | Stay grounded in data and apply guardrails | Use retrieval-augmented generation (RAG) to anchor agent responses in trusted knowledge while applying safety, compliance, and content moderation guardrails. |
| Optimize | Monitor and collect feedback | Track the agent's real-world interactions with users and other systems. Systematically evaluate its performance and accuracy, finding opportunities to continuously improve. |
| Optimize | Continuously improve with data flywheels | Use the feedback and data gathered from monitoring to create a data-driven flywheel, iteratively retraining the agent to continuously optimize and stay effective over time. |
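
To make the Optimize stage more concrete, the following is a minimal sketch of one turn of a data flywheel: score logged interactions, then pick the weak ones as candidates for the next retraining round. It is plain Python and does not use any NeMo API. The `Interaction` record, the `score` field, the 0.5 threshold, and both function names are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged agent exchange (hypothetical record layout)."""
    prompt: str
    response: str
    score: float  # assumed evaluation score in [0, 1], higher is better


def mean_score(log):
    """Average score across the log; 0.0 for an empty log."""
    return sum(item.score for item in log) / len(log) if log else 0.0


def select_for_retraining(log, threshold=0.5):
    """Return interactions scoring below the threshold, in log order."""
    return [item for item in log if item.score < threshold]


if __name__ == "__main__":
    log = [
        Interaction("Reset my password", "Here are the steps...", 0.9),
        Interaction("Cancel my order", "I cannot help with that.", 0.2),
    ]
    print("mean score:", mean_score(log))
    for item in select_for_retraining(log):
        print("retrain on:", item.prompt)
```

In a real deployment the scores would come from an evaluation step and the selected records would feed a fine-tuning job; both are outside the scope of this sketch.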

Use Cases

How NeMo Is Being Used

See how NVIDIA NeMo supports industry use cases and jump-starts your AI development.

AI Agents

AI agents are transforming customer service across sectors, helping companies enhance customer conversations, achieve high resolution rates, and improve human representative productivity. AI agents can handle predictive tasks, reason and problem-solve, be trained to understand industry-specific terms, and pull relevant information from an organization’s knowledge bases, wherever that data resides.

Synthetic Data Generation for Agentic AI

Specialized agentic systems need massive, high-quality datasets that are slow and expensive to collect from real-world sources. Synthetic data created through simulations or generative AI models can eliminate this bottleneck by creating unlimited training scenarios without privacy restrictions or quality issues. This enables faster development of reasoning LLMs, multi-step decision-makers, and multimodal AI assistants.

AI Assistant

Businesses are deploying AI assistants to efficiently address the queries of millions of customers and employees around the clock. Powered by customized NVIDIA NIM™ microservices for LLMs, RAG, and speech and translation AI, these AI teammates deliver immediate and accurate spoken responses, even in the presence of background noise, poor sound quality, and diverse dialects and accents.

Enterprise Search

Enterprises generate trillions of documents annually, including PDFs, reports, and presentations. Each contains text, images, charts, and tables, and they are spread across disconnected systems. AI-powered enterprise search transforms this scattered data into a unified knowledge base, enabling employees to instantly surface insights using natural language and driving faster decisions at lower cost.

Content Generation

Generative AI makes it possible to generate highly relevant, bespoke, and accurate content grounded in the domain expertise and proprietary IP of your enterprise.

Humanoid Robot

Humanoid robots are built to adapt quickly to existing human-centric urban and industrial work spaces, tackling tedious, repetitive, or physically demanding tasks. Their versatility places them in locations as varied as factory floors and healthcare facilities, where they assist humans and help alleviate labor shortages through automation.

Benefits

Explore the Benefits of NVIDIA NeMo for Agentic AI

Comprehensive, Modular AI Agent Suite

Manage the full agent lifecycle from data curation and post-training to evaluation, guardrails, observability, and continuous optimization using an interoperable, enterprise-grade software suite.

Accelerate at Scale

Deploy and scale data flywheels using enterprise data, with GPU-accelerated training, inference, multi-node scaling, and cost-efficient optimization for high-throughput agent workloads.

Increased ROI

Build, customize, and deploy specialized agentic systems faster—shortening time to production and maximizing return on AI investments.

Secure and Production-Ready

Safeguard sensitive data, enforce policy and prompt guardrails, validate models, and continuously detect vulnerabilities. Deploy securely with enterprise-grade support and stability across cloud, data center, and edge with NVIDIA AI Enterprise.

Customer Stories

How Industry Leaders Are Driving Innovation With NeMo

Adopters

Leading Adopters Across All Industries

- Customers
- Partners

Resources

The Latest in NVIDIA NeMo Resources

- Blogs
- Sessions
- Training
- Videos

Next Steps

Ready to Get Started?

Use the right tools and technologies to take your agentic AI applications from development to production.

For Developers

Explore everything you need to start developing with NVIDIA NeMo, including the latest documentation, tutorials, technical blogs, and more.

Get in Touch

Talk to an NVIDIA product specialist about moving from pilot to production with the assurance of security, API stability, and support that comes with NVIDIA AI Enterprise.