Overview

What Is Conversational AI?

Conversational AI powers AI virtual assistants, digital humans, and chatbots, which are paving the way for personalized, natural human-machine conversations. But real-time interactions demand speed and accuracy. With Nemotron Speech open models and the NVIDIA Riva library, developers can build responsive speech and translation capabilities and add natural voice interfaces to agentic AI applications.

Benefits

Explore the Benefits of Using Conversational AI

Agent Efficiency

Support contact center agents by transcribing customer conversations in real time, analyzing them, and providing recommendations to quickly resolve customer queries.

Digital and Global Accessibility

Enable people with hearing difficulties to consume audio content and individuals with speech impairments to express themselves in multiple languages.

24/7 Availability

Use chatbots and AI virtual assistants to resolve customer inquiries and provide valuable information outside of human agents' normal business hours.

Engaging Experiences

Offer engaging experiences with capabilities like live captioning, generating expressive synthetic voices, and understanding customer preferences.

Software

Explore Our Conversational AI Software

Use Cases

How Conversational AI Is Being Used

See how NVIDIA AI supports industry use cases, and jump-start your conversational AI development with curated examples.

Healthcare Agents

Healthcare is reimagining patient interactions with high-fidelity, context-aware AI. By leveraging Nemotron models, organizations can now bridge the gap between clinical efficiency and patient experience.

Ambient voice agents understand context and intent to generate structured clinical documentation autonomously. Voice agents also handle high-volume patient touchpoints, such as scheduling and intake, using dynamic reasoning to keep interactions empathetic and personalized.

AI Virtual Assistant

Businesses are deploying AI virtual assistants to efficiently address the queries of millions of customers and employees around the clock. Powered by customized NVIDIA Nemotron models for LLMs, RAG, and speech AI, these AI teammates deliver immediate and natural-sounding responses, even in the presence of background noise, poor sound quality, and diverse dialects and accents.

Agent Assist

Consumers expect contact center agents to resolve their issues quickly and efficiently. To help human agents deliver the best possible experiences, enterprises across diverse industries are deploying agent assist technology powered by NVIDIA Nemotron models for LLMs, RAG, and speech AI. This technology provides real-time facts and suggestions, helping agents respond more effectively and efficiently. The RAG Blueprint can enhance generative AI applications with quick information retrieval, infusing AI agents with instant knowledge collected from massive volumes of data.
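To make the retrieval step behind agent assist concrete, here is a minimal sketch in plain Python. It is not the RAG Blueprint or any NVIDIA API: the knowledge base, the function names (`tokenize`, `cosine`, `suggest`), and the bag-of-words cosine scoring are all assumptions chosen for illustration. A production system would more likely use learned embeddings and a vector index, with the query coming from a live speech transcript.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base. In a real deployment these passages would be
# indexed from enterprise documents rather than hard-coded.
KNOWLEDGE_BASE = [
    "To reset a password, open Settings, choose Security, and select Reset Password.",
    "Refunds are issued to the original payment method within five business days.",
    "To update a shipping address, edit the order before it leaves the warehouse.",
]


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its word tokens."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def suggest(utterance: str, passages: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k passages most similar to a transcribed utterance."""
    query = tokenize(utterance)
    ranked = sorted(passages, key=lambda p: cosine(query, tokenize(p)), reverse=True)
    return ranked[:top_k]


if __name__ == "__main__":
    # Stand-in for one line of a real-time transcript.
    transcript_line = "I forgot my password and need to reset it"
    for passage in suggest(transcript_line, KNOWLEDGE_BASE):
        print("Suggested fact:", passage)
```

In this sketch the retrieved passage would be shown to the human agent as a suggestion, or passed to an LLM as context for a drafted reply. Exact word matching is the main limitation: "refund" and "refunds" count as different tokens, which is one reason real systems rely on embeddings instead.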

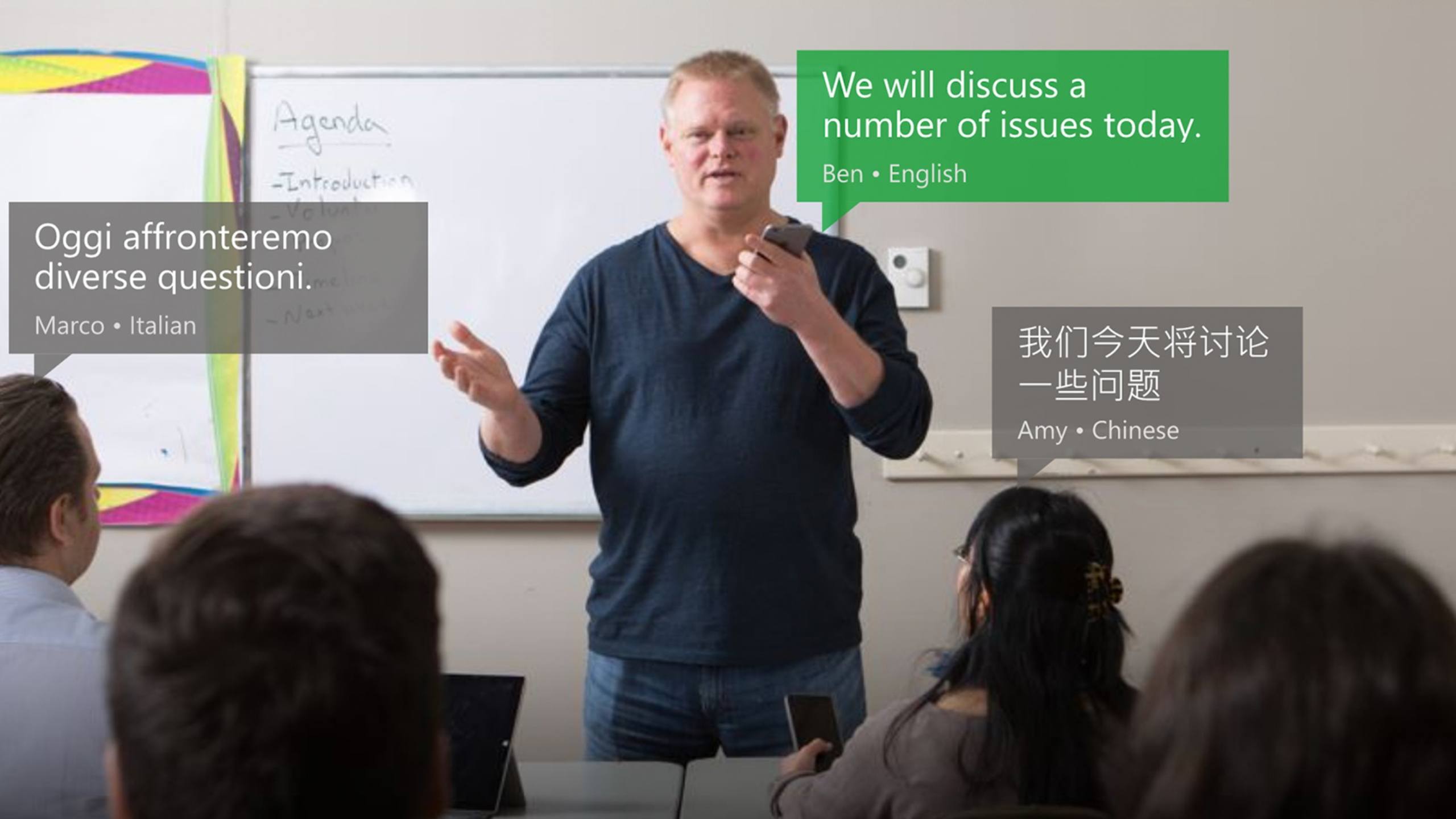

AI Translation

In the global economy, businesses hold millions of online meetings daily and serve customers with diverse linguistic backgrounds. Companies achieve accurate live captioning with real-time transcription and translation, accommodating worldwide accents and domain-specific vocabularies. They can use Nemotron models for summarization and insights, ensuring effective communication and smooth global interactions.

Physical AI

Service robots and voice-directed machinery are increasingly found in hospitals, manufacturing, airports, and retail stores worldwide. They aid frontline workers by handling daily repetitive tasks in restaurants and manufacturing facilities, assisting customers in locating store items, and supporting physicians and nurses in patient care. By deploying Nemotron Speech models directly at the edge, these robots provide near-instantaneous verbal interaction and maintain operational reliability, even in environments with limited connectivity.