Bidirectional Encoder Representations from Transformers (BERT) was developed by Google as a way to pre-train deep bidirectional representations from unlabeled text by jointly conditioning on both left and right context in all layers. It was released under an open-source license in 2018. Google has described BERT as the “first deeply bidirectional, unsupervised language representation, pre-trained using only a plain text corpus” (Devlin et al. 2018).

Bidirectional models aren’t new to natural language processing (NLP). They involve looking at text sequences both from left to right and from right to left. BERT’s innovation was to learn bidirectional representations with transformers, a deep learning architecture that attends over an entire sequence in parallel, in contrast to the sequential dependencies of RNNs. This parallelism allows models to be trained more quickly and on much larger data sets. Transformers process each word in relation to all the other words in a sentence at once rather than one at a time, using attention mechanisms to gather information about the relevant context of a word and encoding that context in a rich vector that represents it. The model learns how a given word’s meaning is derived from every other word in the segment.
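The attention step described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than BERT's actual implementation: it assumes identity projections (a real transformer layer first applies learned query, key, and value matrices), a single attention head, and made-up two-dimensional embeddings. The names `softmax`, `self_attention`, `BAT`, `sports`, and `animal` are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with identity projections.

    Assumption for brevity: queries, keys, and values are the raw inputs.
    A trained transformer would project them with learned matrices first.
    """
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        # Score this position against every position, itself included.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # New representation: attention-weighted average of all positions.
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors))
                        for i in range(dim)])
    return outputs

# Made-up embeddings: "bat" starts with the same vector in both contexts.
BAT = [1.0, 1.0]
sports = [[2.0, 0.0], BAT]  # a sports-related neighbor, then "bat"
animal = [[0.0, 2.0], BAT]  # an animal-related neighbor, then "bat"

bat_in_sports = self_attention(sports)[1]
bat_in_animal = self_attention(animal)[1]
```

Although "bat" enters both sequences with an identical vector, its output vector is pulled toward whichever neighbor it appears with, which is the sense in which the representation encodes context.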

Previous word embeddings, like those of GloVe and Word2vec, work without context to generate a single representation for each word. For example, the word “bat” would be represented the same way whether referring to a piece of sporting gear or a night-flying animal. ELMo introduced deep contextualized representations of each word based on the other words in the sentence using a bidirectional long short-term memory (LSTM) network. Unlike BERT, however, ELMo considered the left-to-right and right-to-left paths independently instead of as a single unified view of the entire context.

Because the vast majority of BERT’s parameters are dedicated to creating a high-quality contextualized word embedding, the framework is considered very suitable for transfer learning. Training BERT on self-supervised tasks (ones in which human annotations are not required) like language modeling makes it possible to use massive unlabeled datasets such as English Wikipedia and BooksCorpus, which together comprise roughly 3.3 billion words. To learn some other task, like question-answering, the final layer can be replaced with something suitable for the task and the model fine-tuned.

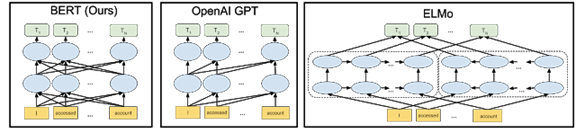

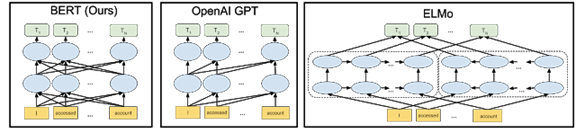

The arrows in the image above indicate the information flow from one layer to the next in three different NLP models.

Image source: Google AI Blog

BERT models are able to capture the nuances of expressions at a much finer level. For example, when processing the sequence “Bob needs some medicine from the pharmacy. His stomach is upset, so can you grab him some antacids?” BERT is better able to recognize that “Bob,” “his,” and “him” all refer to the same person. Previously, a system handling the query “how to fill bob’s prescriptions” might have failed to recognize that the person referenced in the second sentence is Bob. With BERT applied, the model can relate these references to one another.

Bidirectional training is tricky to implement because conditioning each word on both its previous and next words would, by default, let the word being predicted indirectly see itself in a multilayer model. BERT’s developers solved this problem by masking a random subset of the input words (about 15% in the original paper) and training the model to predict the masked words from the surrounding context. BERT also uses a simple second training task: given two sentences A and B, predict whether B is the sentence that actually follows A in the corpus or a random sentence.
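The two pre-training tasks can be illustrated with a short data-preparation sketch. It is a simplified approximation, not the published recipe: every selected token is replaced with `[MASK]` (the original procedure sometimes keeps the token or substitutes a random one), tokens are whitespace-separated words rather than WordPiece units, and negative sentence pairs are drawn from the same list rather than from a different document. The helper names `mask_tokens` and `make_sentence_pair` are hypothetical.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, mask_prob=0.15, rng=random):
    """Hide a random subset of tokens; return (masked_input, targets).

    targets maps each masked position to the original token that the
    model would be trained to predict from the surrounding context.
    """
    masked, targets = list(tokens), {}
    for i, token in enumerate(tokens):
        if rng.random() < mask_prob:
            masked[i] = MASK
            targets[i] = token
    return masked, targets

def make_sentence_pair(sentences, index, rng=random):
    """Build one next-sentence example: (sentence_a, sentence_b, is_next).

    Assumes at least three sentences and index < len(sentences) - 1.
    """
    sentence_a = sentences[index]
    if rng.random() < 0.5:
        # Positive example: B really does follow A.
        return sentence_a, sentences[index + 1], True
    # Negative example: any sentence other than A or its true successor.
    candidates = [s for j, s in enumerate(sentences)
                  if j not in (index, index + 1)]
    return sentence_a, rng.choice(candidates), False

# Usage with a seeded generator so runs are repeatable.
rng = random.Random(0)
tokens = "his stomach is upset so can you grab him some antacids".split()
masked, targets = mask_tokens(tokens, rng=rng)

sentences = ["Bob needs some medicine.", "His stomach is upset.",
             "The pharmacy closes at nine.", "It is raining."]
pair = make_sentence_pair(sentences, 0, rng=rng)
```

Because the model only ever sees `[MASK]` at the positions it must predict, it can condition on both left and right context without the target word leaking into its own prediction.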