Natural language processing is a technology that leverages computers and software to derive meaning from human language—written or spoken.

Natural language processing

What is natural language processing?

Natural language processing (NLP) is the application of AI to process and analyze text or voice data in order to understand, interpret, categorize, and/or derive insights from the content.

Included in NLP is natural language generation (NLG), which covers a computer’s ability to create human language text. Also included is natural language understanding (NLU), which takes text as input and interprets its context and intent so that a system can respond intelligently.

Examples of NLP include email spam filters, spell checkers, grammar checkers, autocorrect, language translation, sentiment analysis, semantic search, and more. With the advent of deep learning (DL) approaches based on the Transformer architecture, NLP techniques have undergone a revolution in performance and capabilities. Cutting-edge NLP models are becoming the core of modern search engines, voice assistants, and chatbots, and these applications are increasingly proficient at automating routine order taking, routing inquiries, and answering frequently asked questions.

Why NLP?

The applications of NLP are already substantial and are expected to grow rapidly. According to one research estimate, the global market for products and services related to natural language processing will grow from $3 billion in 2017 to $43 billion in 2025. That roughly 14X growth attests to the broad applicability of natural language processing solutions.
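As a quick arithmetic check on the quoted endpoints, the implied growth multiple and compound annual growth rate (CAGR) over the eight years from 2017 to 2025 work out as follows (figures in billions of US dollars, rounded; the CAGR is derived here from the two quoted numbers and is not a figure reported by the survey itself):

$$
\frac{43}{3} \approx 14.3,
\qquad
\text{CAGR} = \left(\frac{43}{3}\right)^{1/(2025-2017)} - 1 = \left(\frac{43}{3}\right)^{1/8} - 1 \approx 0.395
$$

In other words, the estimate implies growth of roughly 39.5% per year, sustained for eight years.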

Further driving this growth is the realization that as little as 15% of the data within an organization is stored in corporate databases. The remainder is in texts, emails, meeting notes, phone transcripts, and so on. Natural language processing promises to unlock the business value hidden in this unstructured data and to make it as useful to business decision makers as the structured data already held in databases.

How Does NLP Work?

Machine learning (ML) is the engine driving most natural language processing solutions today, and it is likely to remain so. ML-based NLP systems are trained on large amounts of text, ranging from books to everyday phrases and idioms. From this data, the models identify patterns and relationships among words and phrases and thereby ‘learn’ how human language is used.
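To give a feel for what “patterns and relationships among words” can mean at the very simplest level, here is a toy sketch in plain Python. It counts how often two words appear within a small window of each other in a made-up three-sentence corpus. This is only an illustration under stated assumptions (whitespace tokenization, a window of two words, the hypothetical helper name `cooccurrence_counts`); modern ML systems learn far richer statistics inside neural networks rather than from raw counts like these.

```python
from collections import Counter


def cooccurrence_counts(sentences, window=2):
    """Count how often two words appear within `window` positions of each other.

    Hypothetical helper for illustration: tokenizes on whitespace only and
    stores each pair in alphabetical order so ("cat", "sat") == ("sat", "cat").
    """
    counts = Counter()
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            # Look ahead at most `window` tokens from position i.
            for j in range(i + 1, min(i + 1 + window, len(tokens))):
                pair = tuple(sorted((word, tokens[j])))
                counts[pair] += 1
    return counts


# Tiny made-up corpus; a real system would ingest millions of documents.
corpus = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "a cat chased a dog",
]
counts = cooccurrence_counts(corpus)
print(counts.most_common(3))
```

Pairs such as ("sat", "the") and ("on", "sat") recur across sentences, which is the kind of regularity a learning system can exploit.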

Typically in an NLP application, the input text is converted into word vectors (mathematical representations of words) using techniques such as word embedding. With this technique, each word in a sentence is translated into a list of numbers before being fed into a deep learning model, such as a recurrent neural network (RNN), a long short-term memory network (LSTM), or a Transformer, that models the context. As the neural network trains, these numbers are adjusted so that they encode properties of each word such as its meaning and its typical context. The trained DL model then produces an output suited to a specific language task, for example predicting the next word or generating a summary of the text as an output sequence.
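A minimal sketch of the “words become numbers, and the numbers change during training” idea, in plain Python. It assumes a toy whitespace tokenizer, a 4-dimensional embedding table initialized at random, and a deliberately artificial training objective (increase the dot product between the “cat” and “dog” vectors). All helper names (`build_vocab`, `init_embeddings`, `embed`, `sgd_step`) are hypothetical; in a real model the embeddings are learned jointly with an RNN, LSTM, or Transformer under a task loss such as next-word prediction.

```python
import random


def build_vocab(sentences):
    """Map each distinct lowercase word to an integer id, in order of first appearance."""
    vocab = {}
    for sentence in sentences:
        for word in sentence.lower().split():
            vocab.setdefault(word, len(vocab))
    return vocab


def init_embeddings(vocab_size, dim, seed=0):
    """Create one randomly initialized vector (list of floats) per vocabulary entry."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in range(vocab_size)]


def embed(sentence, vocab, table):
    """Translate each word of a sentence into its vector (raises KeyError for unknown words)."""
    return [table[vocab[word]] for word in sentence.lower().split()]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def sgd_step(table, i, j, lr=0.1):
    """One gradient-descent step on the toy loss -dot(e_i, e_j).

    The gradient w.r.t. e_i is -e_j (and vice versa), so descending it
    adds lr * e_j to e_i and lr * e_i to e_j, pulling the vectors together.
    """
    ei, ej = table[i][:], table[j][:]
    table[i] = [a + lr * b for a, b in zip(ei, ej)]
    table[j] = [b + lr * a for a, b in zip(ei, ej)]


sentences = ["the cat sat on the mat", "the dog sat on the rug"]
vocab = build_vocab(sentences)
table = init_embeddings(len(vocab), dim=4)

vectors = embed("the cat sat", vocab, table)  # three words -> three 4-number vectors

before = dot(table[vocab["cat"]], table[vocab["dog"]])
sgd_step(table, vocab["cat"], vocab["dog"])
after = dot(table[vocab["cat"]], table[vocab["dog"]])
print(f"cat-dog similarity: {before:.3f} -> {after:.3f}")
```

The update rule is ordinary gradient descent; the only simplification is the hand-picked objective, which stands in for whatever loss a real model is trained on.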

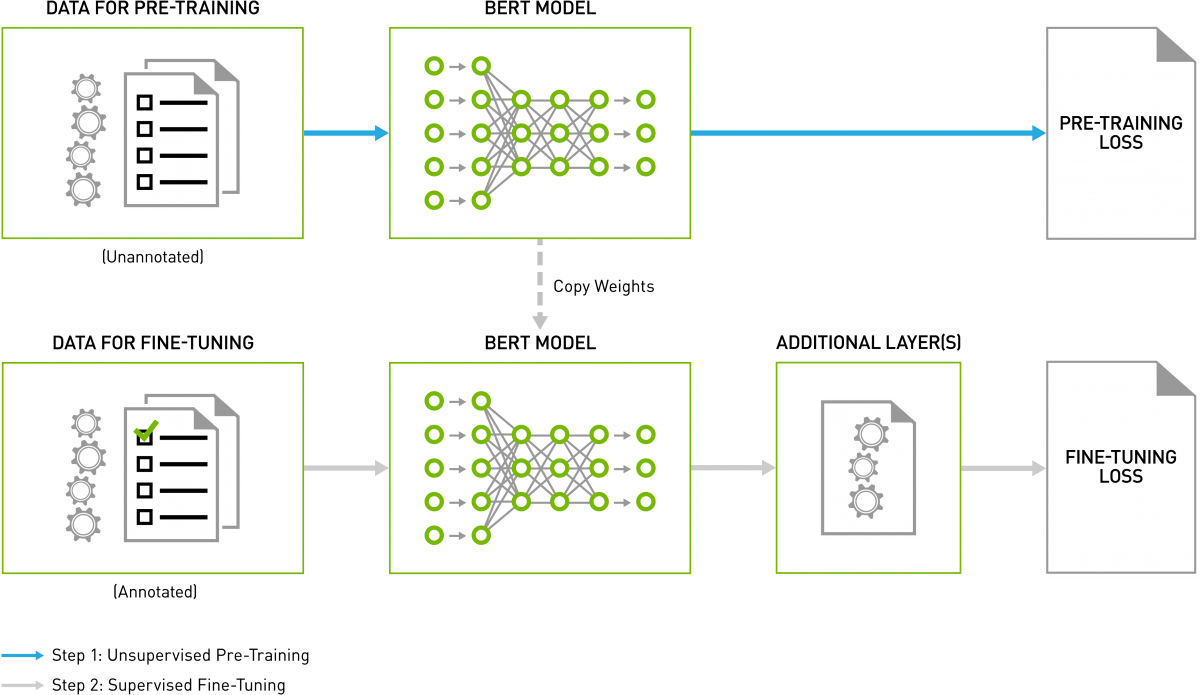

However, static text encodings such as classic word embeddings assign each word a single vector regardless of context, which makes nuances hard to capture. For instance, “bass” in “bass fish” and in “bass player” would have the same representation. And when a long passage is encoded word by word, context gained at the beginning of the passage can be lost by the end. BERT (Bidirectional Encoder Representations from Transformers) is deeply bidirectional: the representation of each word is conditioned on the words both before and after it, so BERT can capture and retain context better than earlier text encoding mechanisms. A key challenge in training language models is the lack of labeled data. BERT sidesteps this by pre-training on unsupervised tasks, such as predicting words that have been masked out of a sentence, using unlabeled text from sources such as the BooksCorpus dataset and English Wikipedia.
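The “bass fish” versus “bass player” problem can be made concrete with a toy sketch. This is not BERT: it uses random vectors and a single unparameterized scaled dot-product self-attention step (the core operation inside Transformer encoders, here without the learned query/key/value projections, multiple heads, stacked layers, or training that a real model has). A static lookup gives “bass” the same vector in both sentences, while the attention output for “bass” depends on every word to its left and right. The sentences and helper names (`make_table`, `self_attention`) are illustrative assumptions.

```python
import math
import random


def make_table(words, dim=4, seed=1):
    """Assign each word a random static vector (sorted for reproducible assignment)."""
    rng = random.Random(seed)
    return {w: [rng.uniform(-1, 1) for _ in range(dim)] for w in sorted(words)}


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Unparameterized scaled dot-product self-attention.

    Each position attends to every position, both before and after it,
    so its output is a context-weighted mix of the whole sentence.
    """
    d = len(vectors[0])
    outputs = []
    for q in vectors:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in vectors]
        weights = softmax(scores)
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)])
    return outputs


sent_a = "the bass fish swam".split()
sent_b = "the bass player sang".split()
table = make_table(set(sent_a + sent_b))

# Static embeddings: "bass" (position 1) gets the identical vector in both sentences.
static_a = [table[w] for w in sent_a][1]
static_b = [table[w] for w in sent_b][1]

# Contextual outputs: "bass" now reflects its neighbors, so the two differ.
ctx_a = self_attention([table[w] for w in sent_a])[1]
ctx_b = self_attention([table[w] for w in sent_b])[1]
print("static equal:", static_a == static_b)
print("contextual equal:", ctx_a == ctx_b)
```

Because nothing in `self_attention` restricts a position to looking only leftward, each output mixes in words from both directions, which is the property the term “bidirectional” refers to.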

GPUs: Accelerating NLP

Getting computers to understand human languages, with all their nuances, and respond appropriately has long been a “holy grail” of AI researchers. But building systems with truly capable natural language processing was out of reach until the arrival of modern AI techniques powered by accelerated computing.

A GPU is composed of hundreds of cores that can handle thousands of threads in parallel. GPUs have become the platform of choice to train deep learning models and perform inference because they can deliver 10X higher performance than CPU-only platforms.

One driver of NLP growth is the stream of recent and ongoing breakthroughs in the field, not least the use of GPUs to crunch through increasingly massive and complex language models.

Transformer-based deep learning models such as BERT don’t need to process sequential data in order, unlike RNNs, which must process a sequence one step at a time. This allows much more parallelization on GPUs and shorter training times than RNNs. The ability to use unsupervised learning methods, transfer learning with pre-trained models, and GPU acceleration has enabled widespread adoption of BERT in industry.
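The difference in data dependencies can be sketched in a few lines of plain Python, assuming scalar inputs and arbitrary, hypothetical weights (`w_h`, `w_x`) in place of learned parameters. In the RNN, each hidden state needs the previous one, so the loop cannot be reordered. In the attention computation, each position’s output reads only the inputs, never another position’s output, so positions can be computed in any order or all at once. The thread pool below only demonstrates that independence; Python threads do not deliver GPU-style speedups for CPU-bound code like this.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def rnn_states(xs, w_h=0.5, w_x=1.0):
    """Elman-style scalar RNN: step t needs h_{t-1}, so steps must run in order."""
    h = 0.0
    states = []
    for x in xs:
        h = math.tanh(w_h * h + w_x * x)
        states.append(h)
    return states


def attention_output(position, xs):
    """Scalar self-attention output for one position (no learned projections).

    Depends only on the inputs xs, not on other positions' outputs,
    so every position can be computed independently.
    """
    q = xs[position]
    scores = [q * k for k in xs]
    m = max(scores)  # for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return sum(w * v for w, v in zip(weights, xs)) / total


xs = [0.2, -0.4, 0.9, 0.1]

states = rnn_states(xs)  # inherently sequential

forward = [attention_output(i, xs) for i in range(len(xs))]
backward = [attention_output(i, xs) for i in reversed(range(len(xs)))][::-1]

# Independent positions can be dispatched concurrently; map() preserves order.
with ThreadPoolExecutor(max_workers=4) as pool:
    parallel = list(pool.map(lambda i: attention_output(i, xs), range(len(xs))))

print(forward == backward == parallel)
```

On a GPU, the same independence is what lets all positions of a sequence be processed in one batched operation instead of a step-by-step loop.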

GPU-enabled models can be rapidly trained and then optimized to cut response times in voice-assisted applications from tenths of seconds to milliseconds. This makes such computer-aided interactions feel much closer to natural conversation.

Use Cases for NLP

Startups

Applications for natural language processing have exploded in the past decade as advances in recurrent neural networks powered by GPUs have offered better-performing AI. This has enabled startups to offer the likes of voice services, language tutors, and chatbots.

Healthcare

One of the difficulties facing healthcare is making it easily accessible. Calling your doctor’s office and waiting on hold is a common occurrence, and connecting with a claims representative can be equally difficult. Using NLP to train chatbots is an emerging approach in healthcare for addressing the shortage of healthcare professionals and opening lines of communication with patients.

Another key healthcare application of NLP is biomedical text mining, often referred to as BioNLP. Given the large volume of biological literature and the increasing rate of biomedical publication, natural language processing is a critical tool for extracting information from published studies to advance knowledge in the biomedical field. This can significantly aid drug discovery and disease diagnosis.
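As a rough illustration of “extracting information from published studies”, the sketch below tags drug and disease mentions in a sentence using a small dictionary and Python’s `re` module. The lexicon, labels, example sentence, and the helper name `extract_entities` are all hypothetical; production BioNLP systems typically rely on curated biomedical vocabularies and trained models (such as the domain-specific language models mentioned in the links below) rather than exact string matching.

```python
import re

# Hypothetical mini-lexicon mapping terms to entity labels.
LEXICON = {
    "metformin": "DRUG",
    "aspirin": "DRUG",
    "type 2 diabetes": "DISEASE",
    "stroke": "DISEASE",
}


def extract_entities(text, lexicon=LEXICON):
    """Return (mention, label, start) tuples for lexicon terms, in order of appearance.

    Matching is case-insensitive and anchored on word boundaries so that,
    for example, "stroke" does not match inside "strokes".
    """
    found = []
    for term, label in lexicon.items():
        pattern = r"\b" + re.escape(term) + r"\b"
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            found.append((match.group(0), label, match.start()))
    return sorted(found, key=lambda item: item[2])


# Invented example sentence in the style of an abstract.
abstract = ("Metformin is first-line therapy for type 2 diabetes, "
            "and low-dose aspirin has been studied for stroke prevention.")
entities = extract_entities(abstract)
for mention, label, start in entities:
    print(f"{start:3d}  {label:8s} {mention}")
```

Dictionary matching like this is easy to audit but misses synonyms, abbreviations, and misspellings, which is one reason learned models dominate the field.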

Financial Services

NLP is a critically important part of building better chatbots and AI assistants for financial service firms, and it has other uses as well: by leveraging NLP, for example, banks can assess the creditworthiness of clients with little or no credit history. Among the numerous language models used in NLP-based applications, BERT has emerged as a leader. NVIDIA has recently broken records for speed in training BERT, which promises to help the billions of conversational AI services expected in the next few years operate with human-level comprehension.

Retail

In addition to healthcare, chatbot technology is commonly used in retail applications to accurately analyze customer queries and generate responses or recommendations. This streamlines the customer journey and improves the efficiency of store operations. NLP is also used for text mining customer feedback and for sentiment analysis.
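Sentiment analysis of customer feedback can be sketched, in its simplest lexicon-based form, as counting positive and negative words. The word lists, reviews, and helper names (`sentiment_score`, `label`) below are hypothetical. This approach is only a baseline: it ignores negation (“not great” would score as positive) and sarcasm, which is why retail systems usually use trained classifiers, often Transformer-based, instead.

```python
import re

# Hypothetical sentiment word lists for illustration only.
POSITIVE = {"great", "fast", "friendly", "love", "helpful"}
NEGATIVE = {"slow", "broken", "rude", "late", "damaged"}


def sentiment_score(review):
    """Return (# positive words) - (# negative words) for a review."""
    words = re.findall(r"[a-z']+", review.lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def label(review):
    """Map the score's sign to a coarse sentiment label."""
    score = sentiment_score(review)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


reviews = [
    "Love the new app, checkout was fast and staff were friendly!",
    "Delivery was late and the blender arrived broken.",
    "I picked up my order on Tuesday.",
]
labels = [label(r) for r in reviews]
print(labels)
```

Aggregating such labels across thousands of reviews is one way feedback text becomes a trackable business metric.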

NVIDIA GPUs Accelerating AI and NLP

With NVIDIA GPUs and CUDA-X AI™ libraries, massive, state-of-the-art language models can be rapidly trained and optimized to run inference in just a couple of milliseconds (thousandths of a second). This is a major stride toward ending the trade-off between an AI model that’s fast and one that’s large and complex.

NVIDIA's AI platform is the first to train BERT in less than an hour and complete AI inference in just over 2 milliseconds. The parallel processing capabilities and Tensor Core architecture of NVIDIA GPUs allow for higher throughput and scalability when working with complex language models—enabling record-setting performance for both the training and inference of BERT. This groundbreaking level of performance makes it possible for developers to use state-of-the-art language understanding for large-scale applications they can make available to hundreds of millions of consumers worldwide.

Early adopters of NVIDIA's performance advances include Microsoft and some of the world's most innovative startups. These organizations are harnessing NVIDIA's platform to develop highly intuitive, immediately responsive language-based services for their customers.

Next Steps

To learn more, refer to:

- Snark Bite: Like an AI Could Ever Spot Sarcasm

- Mixed-Precision Training for NLP and Speech Recognition with OpenSeq2Seq

- Deep Learning NLP Interprets Words with Multiple Meanings

- Word Up: AI Writes New Chapter for Language Buffs

- Building State-of-the-Art Biomedical and Clinical NLP Models with BioMegatron

- NVIDIA blogs with tag: NLP

- Real-Time Natural Language Understanding with BERT Using TensorRT

- What Is Conversational AI? (Blog)

- NVIDIA Achieves Breakthroughs in Language Understanding to Enable Real-Time Conversational AI (News)

- The NVIDIA Conversational AI and Conversational AI SDK web page

- BERT QA in TensorFlow with NVIDIA GPUs (Blog)

- BERT Does Europe: AI Language Model Learns German, Swedish (Blog)

- NLP NeMo Code Samples

- Train BERT Model with PyTorch Code Sample

- Introducing NVIDIA Riva: A Framework for GPU-Accelerated Conversational AI Applications

- NVIDIA's AI advance: Natural language processing gets faster and better all the time

- NVIDIA Clocks World's Fastest BERT Training Time and Largest Transformer-Based Model, Paving Path For Advanced Conversational AI

- State-of-the-Art Language Modeling Using Megatron on the NVIDIA A100 GPU

- NVIDIA Elevates The Conversation For Natural Language Processing

- Money Maker: How AI Can Accelerate Analytics in Financial Markets

Find out about:

- The NVIDIA NGC™ catalog provides extensive software libraries at no cost, as well as tools for building high-performance computing environments that take full advantage of GPUs.

- Programmers and data scientists can take advantage of a broad suite of ML and analytics software libraries to significantly accelerate end-to-end data science pipelines entirely on GPUs. These libraries provide highly efficient, optimized implementations of algorithms and are routinely extended. NVIDIA CUDA-X AI software acceleration libraries use GPUs to accelerate ML workflows and realize model optimizations. The CUDA parallel computing platform provides an API that developers can use to build tools that use GPUs to process large blocks of data in parallel, which is a critical ML task.

- The NVIDIA Deep Learning Institute offers instructor-led, hands-on training on the fundamental tools and techniques for building Transformer-based natural language processing models for text classification tasks, such as categorizing documents.