Robot Learning

Train robot policies in simulation for real-world adaptability.

Workloads

Simulation / Modeling / Design

Robotics

Industries

Manufacturing

Healthcare and Life Sciences

Retail/Consumer Packaged Goods

Smart Cities/Spaces

Business Goal

Innovation

Return on Investment

Products

NVIDIA Omniverse

NVIDIA Isaac

NVIDIA Jetson

Overview

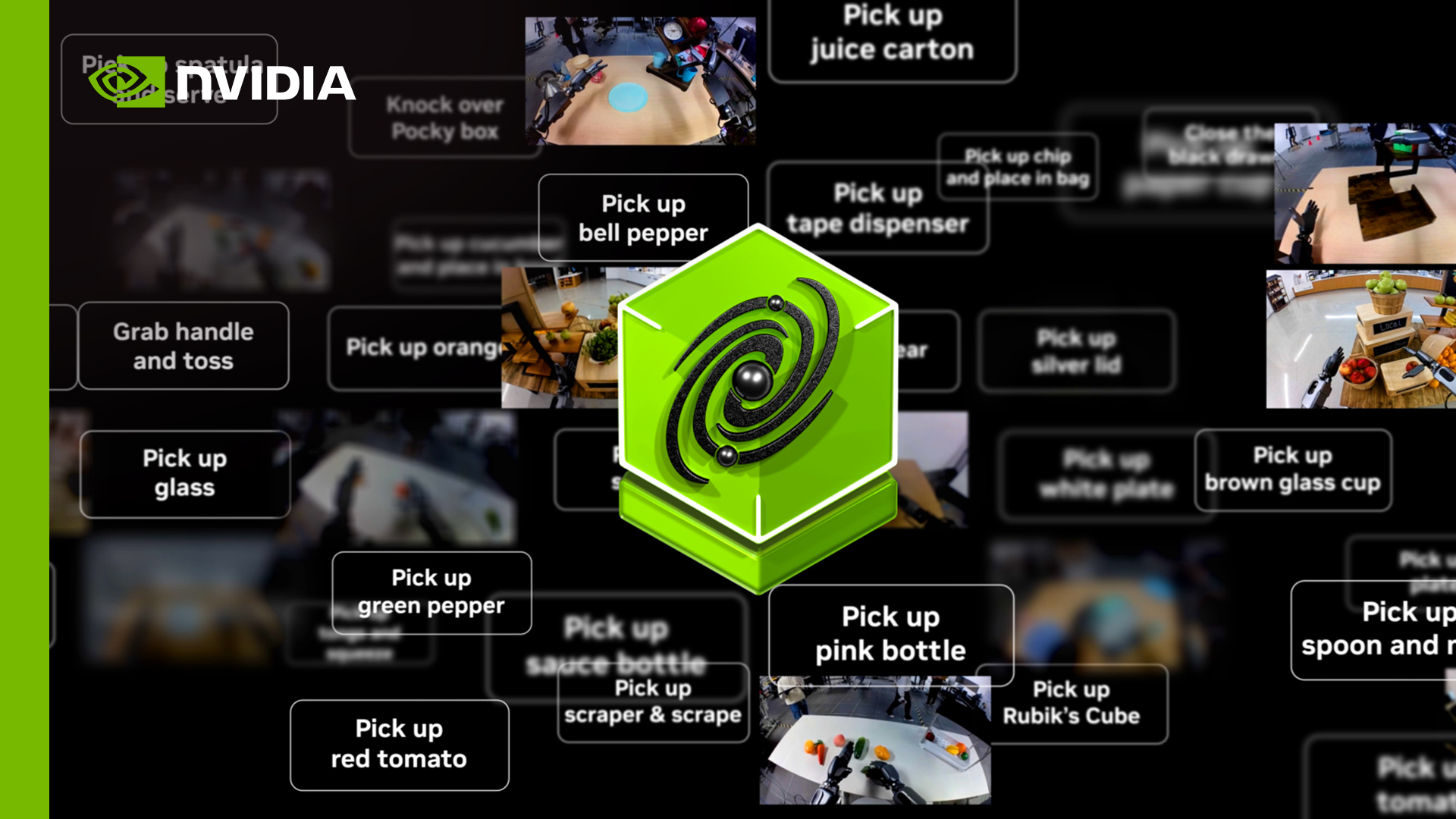

Build Generalist Robot Policies

While preprogrammed robots can be useful for specific, repetitive tasks, they have one key drawback: they follow fixed instructions within set environments, which limits their ability to adapt to unexpected changes.

AI-driven robots overcome these limitations through simulation-based learning, letting them autonomously perceive, plan, and act in dynamic conditions. They can acquire and refine new skills by using learned policies—sets of behaviors for navigation, manipulation, and more—to improve their decision-making across various situations before being deployed into the real world.

Why Simulation-Based Robot Learning?

Flexibility and Scalability

Use a sim-first approach to train hundreds or thousands of robot instances in parallel, combining real robot data and synthetic data across autonomous mobile robots (AMRs), robotic arms, and humanoid robots.

Accelerated Skill Development

Train robots in physically accurate simulation environments, helping them adapt to new task variations and reducing the sim-to-real gap.

Safe Proving Environment

Test potentially hazardous scenarios without risking human safety or damaging equipment.

Reduced Costs

Avoid the burden of real-world data collection and labeling costs by generating large amounts of synthetic data, validating trained robot policies in simulation, and deploying on robots faster.

Robot Learning Algorithms

Robot learning algorithms can help robots generalize learned skills and improve their performance in changing or novel environments. There are several learning techniques, including:

- Imitation learning: The robot can learn from demonstrations of tasks by humans or other robots, as well as from video or sensor data of agents performing the desired behavior.

- Reinforcement learning: This trial-and-error approach gives the robot a reward or a penalty based on its actions, and the robot learns a policy that maximizes its cumulative reward.

- Supervised learning: The robot can be trained using labeled data to learn specific tasks.

- Self-supervised learning: When there are limited labeled datasets, robots can generate their own training labels from unlabeled data to extract meaningful information.
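Behavior cloning, the simplest form of imitation learning, reduces to supervised learning on (state, action) pairs taken from demonstrations. The minimal sketch below assumes a toy 1D task whose hypothetical expert is a proportional controller; `fit_linear_policy` and the data are illustrative only, and real policies are neural networks trained on high-dimensional observations.

```python
from typing import Callable, List, Tuple

def fit_linear_policy(demos: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Least-squares fit of action = w * state + b to (state, action) pairs."""
    n = len(demos)
    mean_s = sum(s for s, _ in demos) / n
    mean_a = sum(a for _, a in demos) / n
    cov = sum((s - mean_s) * (a - mean_a) for s, a in demos)
    var = sum((s - mean_s) ** 2 for s, _ in demos)
    w = cov / var
    return w, mean_a - w * mean_s

def make_policy(w: float, b: float) -> Callable[[float], float]:
    return lambda state: w * state + b

# Hypothetical expert demonstrations: a proportional controller that drives the
# position toward 0. Each pair is (observed position, commanded velocity).
expert_demos = [(s, -0.5 * s) for s in [-2.0, -1.0, 0.5, 1.0, 3.0]]

w, b = fit_linear_policy(expert_demos)
cloned_policy = make_policy(w, b)
print(w, b, cloned_policy(4.0))   # state 4.0 never appears in the demonstrations
```

Because the demonstrations are noise-free, the fit recovers the expert's gain and generalizes to the unseen state; with real data, coverage of the state space limits how well a cloned policy generalizes.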

Technical Implementation

Teach Robots to Learn and Adapt

A typical end-to-end robot workflow involves data processing, model training, validation in simulation, and deployment on a real robot.

Data Processing: To bridge data gaps, use a diverse set of high-quality data that combines internet-scale data, synthetic data, and real robot data. Developers can curate, augment, and evaluate synthetic data at scale using Physical AI Blueprint; the resulting data can be used for fine-tuning and for policy evaluation in Isaac Lab-Arena.
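One common way to combine data sources is to draw each training batch from them in fixed proportions. The sketch below assumes three in-memory placeholder sources and a hypothetical mixture; it does not use Physical AI Blueprint or any NVIDIA API.

```python
import random
from typing import Dict, List

def sample_mixed_batch(sources: Dict[str, List[str]], weights: Dict[str, float],
                       batch_size: int, rng: random.Random) -> List[str]:
    """Pick each item's source by weight, then pick an item uniformly within that source."""
    names = list(sources)
    picked = rng.choices(names, weights=[weights[n] for n in names], k=batch_size)
    return [rng.choice(sources[name]) for name in picked]

# Placeholder datasets standing in for video clips, simulated rollouts, and robot logs.
sources = {
    "internet": [f"web_{i}" for i in range(100)],
    "synthetic": [f"sim_{i}" for i in range(1000)],
    "real": [f"real_{i}" for i in range(20)],
}
# Hypothetical mixture: scarce real-robot data is sampled far above its ~2% share of items.
weights = {"internet": 0.3, "synthetic": 0.5, "real": 0.2}

batch = sample_mixed_batch(sources, weights, batch_size=1000, rng=random.Random(0))
counts = {name: sum(item.startswith(prefix) for item in batch)
          for name, prefix in [("internet", "web_"), ("synthetic", "sim_"), ("real", "real_")]}
print(counts)
```

Sampling by source weight rather than by pooled size keeps a small but valuable dataset, such as real robot logs, from being drowned out by abundant synthetic data.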

Training and Validating in Simulation: Robots need to be trained for task-defined scenarios before deployment, which requires accurate virtual representations of real-world conditions. NVIDIA Isaac™ Lab, an open-source framework for robot learning, trains robot policies with reinforcement learning and imitation learning techniques using a modular approach.

Isaac Lab is natively integrated with NVIDIA Isaac Sim™ — an open reference robotic simulation application built on NVIDIA Omniverse™ libraries — using GPU-accelerated NVIDIA PhysX® physics and RTX™ rendering for high-fidelity validation. This unified framework lets you rapidly prototype policies in lightweight simulation environments before deploying to production systems.

Built on Isaac Lab, Isaac Lab-Arena is an open-source framework for scalable policy evaluation in simulation that gives you streamlined APIs to simplify task curation and diversification.
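Scalable policy evaluation typically means running a policy across many randomized variants of a task and reporting an aggregate metric such as success rate. The sketch below is a hypothetical stand-in, not the Isaac Lab-Arena API: a "task variant" is just a random goal distance and friction value on a 1D track, and both policies are toy controllers.

```python
import random
from dataclasses import dataclass
from typing import Callable, List

Policy = Callable[[float, float], float]   # (position, goal) -> commanded step

@dataclass
class TaskVariant:
    goal: float       # target position on a hypothetical 1D track
    friction: float   # fraction of each commanded step that is actually realized

def make_variants(n: int, seed: int) -> List[TaskVariant]:
    """Diversify one task template by randomizing its parameters."""
    rng = random.Random(seed)
    return [TaskVariant(rng.uniform(1.0, 10.0), rng.uniform(0.3, 1.0)) for _ in range(n)]

def rollout(policy: Policy, task: TaskVariant, max_steps: int = 50, tol: float = 0.05) -> bool:
    """Run one episode; it succeeds if the robot gets within `tol` of the goal."""
    pos = 0.0
    for _ in range(max_steps):
        pos += task.friction * policy(pos, task.goal)
        if abs(task.goal - pos) < tol:
            return True
    return False

def success_rate(policy: Policy, tasks: List[TaskVariant]) -> float:
    return sum(rollout(policy, t) for t in tasks) / len(tasks)

def proportional(pos: float, goal: float) -> float:
    return 0.5 * (goal - pos)

def slow_capped(pos: float, goal: float) -> float:
    return max(-0.1, min(0.1, goal - pos))   # never moves more than 0.1 per step

tasks = make_variants(200, seed=1)
print(success_rate(proportional, tasks), success_rate(slow_capped, tasks))
```

A policy that looks fine on a single hand-picked variant (a short goal with high friction) can fail on most of the diversified set, which is the kind of gap broad evaluation is meant to expose.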

Deploying Onto the Real Robot: The trained robot policies and AI models can be deployed on NVIDIA Jetson™, on-robot computers that deliver the necessary performance and functional safety for autonomous operation.

Imitation Learning and Reinforcement Learning for Robots

Imitation Learning

While imitation learning lets humanoid robots develop new skills by replicating expert demonstrations, collecting real-world datasets is often expensive and labor-intensive.

To overcome this challenge, developers can use the NVIDIA Isaac GR00T-Mimic and GR00T-Dreams blueprints, built on NVIDIA Cosmos™, to produce large, diverse synthetic motion datasets for training.

These datasets can then be used to train the Isaac GR00T N open foundation models within Isaac Lab, enabling generalized humanoid reasoning and robust skill acquisition.
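The general idea of multiplying a few demonstrations into many can be illustrated with a deliberately simplified geometric trick: warp one recorded reaching path so it ends at a newly placed object. This is not how GR00T-Mimic or GR00T-Dreams work; the workspace bounds, the linear warping rule, and all names below are assumptions for illustration.

```python
import random
from typing import List, Tuple

Point = Tuple[float, float]

def retarget(demo: List[Point], old_obj: Point, new_obj: Point) -> List[Point]:
    """Warp a reaching path: the start stays fixed and the end follows the moved object.

    Waypoint i is shifted by the fraction i / (len - 1) of the object's displacement.
    """
    dx, dy = new_obj[0] - old_obj[0], new_obj[1] - old_obj[1]
    last = len(demo) - 1
    return [(x + dx * i / last, y + dy * i / last) for i, (x, y) in enumerate(demo)]

def augment(demo: List[Point], obj: Point, n: int,
            seed: int = 0) -> List[Tuple[Point, List[Point]]]:
    """Create n synthetic demos for randomly placed objects in a hypothetical workspace."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n):
        new_obj = (rng.uniform(-0.5, 0.5), rng.uniform(0.2, 0.8))
        synthetic.append((new_obj, retarget(demo, obj, new_obj)))
    return synthetic

# One recorded (hypothetical) demonstration reaching an object at (0.3, 0.5).
human_demo = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.4), (0.3, 0.5)]
dataset = augment(human_demo, obj=(0.3, 0.5), n=100)
print(len(dataset), dataset[0])
```

Pure geometry like this ignores contacts, obstacles, and joint limits, which is why generated trajectories are normally checked in simulation before they are used for training.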

Reinforcement Learning

Use Isaac Lab to conduct high-fidelity physics simulations, perform reward calculations, and enable perception-driven reinforcement learning (RL) within modular, customizable environments.

Start by configuring a wide variety of robots in varying environments, defining RL tasks, and training models using GPU-optimized libraries such as RSL-RL, RL-Games, SKRL, and Stable-Baselines3, all supported natively by Isaac Lab.
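The reward-driven loop behind RL can be shown without a simulator. The sketch below uses tabular Q-learning, a classic RL algorithm, on a hypothetical six-cell track; it does not use the libraries listed above, which implement deep RL algorithms over batched environments rather than a lookup table.

```python
import random
from typing import List, Tuple

N_STATES = 6          # cells 0..5 on a hypothetical 1D track; cell 5 is the goal
ACTIONS = (-1, +1)    # move left or right

def step(state: int, action: int) -> Tuple[int, float, bool]:
    """Toy environment with its reward calculation: returns (next_state, reward, done)."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    if nxt == N_STATES - 1:
        return nxt, 1.0, True       # reward for reaching the goal
    return nxt, -0.01, False        # small per-step penalty favors short paths

def train_q(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.95,
            epsilon: float = 0.1, seed: int = 0) -> List[List[float]]:
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        s, done = 0, False
        while not done:
            # Epsilon-greedy: usually exploit the best-known action, sometimes explore.
            if rng.random() < epsilon:
                a = rng.randrange(2)
            else:
                a = max(range(2), key=lambda i: q[s][i])
            s2, r, done = step(s, ACTIONS[a])
            target = r if done else r + gamma * max(q[s2])
            q[s][a] += alpha * (target - q[s][a])   # temporal-difference update
            s = s2
    return q

q = train_q()
greedy = [ACTIONS[max(range(2), key=lambda i: q[s][i])] for s in range(N_STATES - 1)]
print(greedy)
```

The agent is never told to move right; that behavior emerges from the goal reward and the per-step penalty.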

Isaac Lab offers flexible task workflows—either direct or manager-based—so you have control over the complexity and automation of your training jobs.
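The difference in style can be sketched in plain Python. This is not Isaac Lab code: `RewardTerm`, `RewardManager`, and the term functions are hypothetical names chosen to illustrate the idea of assembling a reward from small, reusable, weighted terms, as opposed to writing one function that computes everything inline.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

State = Dict[str, float]

# Manager-style (hypothetical): the reward is assembled from configurable terms.
@dataclass
class RewardTerm:
    name: str
    func: Callable[[State], float]
    weight: float

class RewardManager:
    def __init__(self, terms: List[RewardTerm]):
        self.terms = terms

    def compute(self, state: State) -> Dict[str, float]:
        """Return each weighted term (handy for logging) plus the total."""
        parts = {t.name: t.weight * t.func(state) for t in self.terms}
        parts["total"] = sum(parts.values())
        return parts

def distance_to_goal(s: State) -> float:
    return abs(s["goal"] - s["pos"])

def action_magnitude(s: State) -> float:
    return s["action"] ** 2

manager = RewardManager([
    RewardTerm("distance", distance_to_goal, weight=-1.0),
    RewardTerm("effort", action_magnitude, weight=-0.1),
])

# Direct-style: one hand-written function with the same logic inline.
def direct_reward(s: State) -> float:
    return -abs(s["goal"] - s["pos"]) - 0.1 * s["action"] ** 2

state = {"pos": 0.2, "goal": 1.0, "action": 0.5}
print(manager.compute(state))
print(direct_reward(state))
```

Both produce the same total; the composed form makes terms easy to reuse, reweight, and log individually, while the direct form keeps all logic in one place for maximum control.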

You can also use Newton—an open-source, GPU-accelerated physics engine, built on NVIDIA Warp, for high-speed, physically accurate, differentiable simulation.
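Differentiable simulation means the simulator can report gradients of its outputs with respect to its inputs, so parameters can be tuned by gradient descent instead of trial and error. The sketch below shows the idea with hand-rolled forward-mode automatic differentiation (dual numbers) through an Euler-integrated 1D body with drag. It is not Newton or Warp code; the dynamics, step sizes, and learning rate are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Union

@dataclass
class Dual:
    """Dual number val + grad*eps with eps**2 == 0; `grad` carries d(value)/d(input)."""
    val: float
    grad: float = 0.0

    @staticmethod
    def lift(x: Union["Dual", float]) -> "Dual":
        return x if isinstance(x, Dual) else Dual(float(x))

    def __add__(self, other):
        o = Dual.lift(other)
        return Dual(self.val + o.val, self.grad + o.grad)

    def __sub__(self, other):
        o = Dual.lift(other)
        return Dual(self.val - o.val, self.grad - o.grad)

    def __mul__(self, other):
        o = Dual.lift(other)   # product rule
        return Dual(self.val * o.val, self.val * o.grad + self.grad * o.val)

    __radd__ = __add__
    __rmul__ = __mul__

def simulate(v0: Dual, steps: int = 100, dt: float = 0.01, drag: float = 0.5) -> Dual:
    """Explicit-Euler rollout of a 1D body with linear drag; returns the final position."""
    x, v = Dual(0.0), v0
    for _ in range(steps):
        x = x + v * dt
        v = v - drag * v * dt
    return x

# Tune the launch velocity so the body ends at the target after one simulated second.
target, v0, lr = 2.0, 1.0, 0.5
for _ in range(50):
    x_final = simulate(Dual(v0, 1.0))                          # seed d(v0)/d(v0) = 1
    dloss_dv0 = 2.0 * (x_final.val - target) * x_final.grad    # d/dv0 of (x - target)**2
    v0 -= lr * dloss_dv0
print(v0, simulate(Dual(v0)).val)
```

Each rollout returns both the final position and its exact derivative with respect to the launch velocity, so no finite-difference probing of the simulator is needed.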

Additionally, NVIDIA OSMO—a cloud-native orchestration platform—enables efficient scaling and management of complex, multi-stage, and multi-container robotics workloads across multi-GPU and multi-node systems. This can significantly accelerate the development and evaluation of robot learning policies.
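At its core, orchestrating a multi-stage workload means running stages in dependency order and running independent stages concurrently. The sketch below uses Python's standard `graphlib` module (Python 3.9+) to compute those execution waves for a hypothetical pipeline; it does not use OSMO or its workflow format.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical stages; each maps to the set of stages it depends on.
workflow: Dict[str, Set[str]] = {
    "generate_synthetic_data": set(),
    "curate_real_data": set(),
    "train_policy": {"generate_synthetic_data", "curate_real_data"},
    "evaluate_in_sim": {"train_policy"},
    "export_for_deployment": {"evaluate_in_sim"},
}

def execution_waves(graph: Dict[str, Set[str]]) -> List[List[str]]:
    """Group stages into waves; stages within a wave have no mutual dependencies."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())   # these could run on separate GPUs or nodes
        waves.append(ready)
        sorter.done(*ready)
    return waves

for i, wave in enumerate(execution_waves(workflow)):
    print(i, wave)
```

The two data stages form the first wave and can run in parallel; every later stage waits only on its own inputs.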

FAQs

Traditional robots are usually controlled with fixed scripts, where engineers hand-code step-by-step instructions and rules. For example, “move to this exact position, then close the gripper if this sensor value is above a threshold.” Robot learning in simulation instead trains AI policies that map sensor inputs (like camera images and joint states) to actions. This lets robots autonomously perceive, plan, and act in virtual environments before deployment and adapt to variations that weren’t explicitly programmed.
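The contrast can be sketched in Python. The scripted controller encodes the engineer's rule directly, while the policy's behavior comes entirely from its parameters. The weights below are hypothetical placeholders for values training would produce, and the linear form is a simplification of the neural networks used in practice.

```python
from typing import Dict, List, Sequence

def scripted_controller(force_sensor: float) -> Dict[str, float]:
    """Hand-coded rule: go to a fixed joint position; close the gripper above a threshold."""
    target_position = 0.75   # exact position chosen by an engineer
    threshold = 2.0          # sensor threshold chosen by an engineer
    return {"joint_cmd": target_position,
            "gripper_close": 1.0 if force_sensor > threshold else 0.0}

class LinearPolicy:
    """Learned mapping from an observation vector to an action vector.

    Practical policies are deep networks over camera images and joint states; this
    linear stand-in only shows that behavior lives in trained parameters, not rules.
    """
    def __init__(self, weights: List[List[float]], bias: List[float]):
        self.weights = weights   # [num_actions][num_obs], produced by training
        self.bias = bias         # [num_actions]

    def __call__(self, obs: Sequence[float]) -> List[float]:
        return [sum(w * o for w, o in zip(row, obs)) + b
                for row, b in zip(self.weights, self.bias)]

# Hypothetical "trained" parameters; observations are [object_x, force_sensor, joint_pos].
policy = LinearPolicy(weights=[[0.5, 0.0, 0.1], [0.0, 1.0, 0.0]], bias=[0.0, -0.5])
print(scripted_controller(force_sensor=2.5))
print(policy([1.0, 2.0, 0.5]))   # [joint command, gripper command]
```

Changing the scripted behavior means rewriting rules; changing the policy's behavior means retraining its parameters on new data.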

Simulation-based learning applies to many embodiments, including autonomous mobile robots, autonomous vehicles, robotic arms, and humanoid robots. Typical tasks include navigation, locomotion, object manipulation, and coordinated workflows in factories, warehouses, hospitals, and retail spaces.

Isaac Sim provides high-fidelity physics and sensor simulation, while open-source Isaac Lab scales it into thousands of parallel GPU-accelerated environments. Together, they generate massive amounts of synthetic data to fine-tune the Isaac GR00T N open vision-language-action models. This lets developers teach robots tasks and robot-specific skills in simulation before real-world deployment.
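Parallel training depends on stepping many environment instances as one batch. The sketch below shows the shape of such a batched interface in plain Python: one `step` call advances every environment, returns per-environment observations, rewards, and done flags, and auto-resets finished episodes. `BatchedPointEnv` is hypothetical and not the Isaac Lab API; a Python loop stands in for work a GPU simulator performs in parallel on device tensors.

```python
import random
from typing import List, Tuple

class BatchedPointEnv:
    """N independent 1D point-mass reaching tasks advanced together by one call."""

    def __init__(self, num_envs: int, max_steps: int = 20, seed: int = 0):
        self.rng = random.Random(seed)
        self.max_steps = max_steps
        self.pos = [0.0] * num_envs
        self.goal = [self.rng.uniform(-1.0, 1.0) for _ in range(num_envs)]
        self.t = [0] * num_envs

    def _reset(self, i: int) -> None:
        self.pos[i], self.t[i] = 0.0, 0
        self.goal[i] = self.rng.uniform(-1.0, 1.0)

    def observe(self) -> List[float]:
        return [g - p for p, g in zip(self.pos, self.goal)]   # per-env error to goal

    def step(self, actions: List[float]) -> Tuple[List[float], List[float], List[bool]]:
        rewards, dones = [], []
        for i, a in enumerate(actions):
            self.pos[i] += 0.1 * max(-1.0, min(1.0, a))   # clipped action
            self.t[i] += 1
            err = abs(self.goal[i] - self.pos[i])
            done = err < 0.05 or self.t[i] >= self.max_steps
            rewards.append(-err)
            dones.append(done)
            if done:
                self._reset(i)   # auto-reset so the batch never waits on one episode
        # Reset environments already report the first observation of their new episode.
        return self.observe(), rewards, dones

env = BatchedPointEnv(num_envs=1024)
obs = env.observe()
for _ in range(50):
    obs, rewards, dones = env.step([5.0 * o for o in obs])   # one P-controller for all envs
print(len(obs), sum(dones))
```

Auto-reset is what keeps thousands of environments busy: episodes finish at different times, but the batch always has the same size.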

A common workflow starts with processing diverse data from real robots, synthetic data, and internet-scale sources. Policies are then trained and validated in Isaac Lab and Isaac Sim, and finally deployed on on-robot compute such as NVIDIA Jetson for real-world operation.

Training and validating policies in physically accurate simulation environments lets teams safely test the long tail of edge cases, hazardous scenarios, and rare events. This reduces the need for extensive on-robot experimentation, lowers hardware wear and risk, and helps policies transfer more reliably to real environments.
