Get started today with this GPU Ready Apps Guide.

SPECFEM3D Cartesian simulates acoustic (fluid), elastic (solid), coupled acoustic/elastic, poroelastic or seismic wave propagation in any type of conforming mesh of hexahedra (structured or not). It can, for instance, model seismic waves propagating in sedimentary basins or any other regional geological model following earthquakes. It can also be used for non-destructive testing or for ocean acoustics.

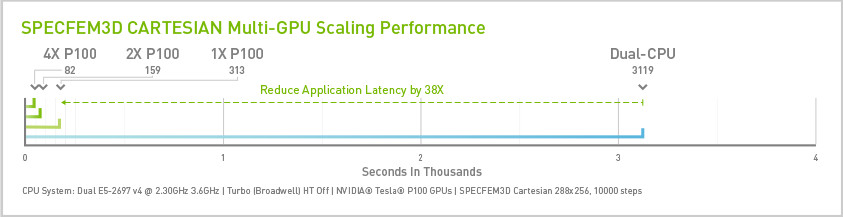

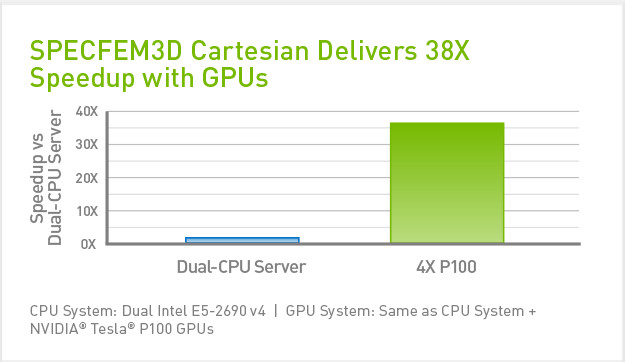

SPECFEM3D Cartesian delivers up to 38x or more speedup on an NVIDIA® Tesla® P100 node compared to a CPU-only system, enabling users to run simulations in hours instead of weeks.

Installation

System Requirements

SPECFEM3D_Cartesian should be portable to any parallel platform with modern C and Fortran compilers and a recent MPI implementation.

Optionally, git can be used to obtain the source code.

Download and Installation Instructions

1. Download the SPECFEM3D_Cartesian source code. The most recent version can be obtained using

git clone --recursive --branch devel

2. Make sure that nvcc, gcc and gfortran are available on your system and in your path. If they are not available, please contact your system administrator. In addition, to build with MPI, mpicc and mpif90 must be in your path.

Note also that the performance of CPU-only runs and of the database generation step is sensitive to the choice of compiler. For example, the PGI compiler is much faster than gfortran for the database generation step and a little faster for the CPU-only xspecfem3D simulation. The compiler has no significant effect on the performance of the xspecfem3D simulation in the GPU case.

3. Configure the package

./configure --with-cuda=cuda8

Note that this builds an executable for devices of compute capability 6.0 or higher.

4. Build the program

make
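The check described in step 2 can be scripted and run before configuring. The following is a minimal sketch, not part of the SPECFEM3D distribution; the function name `check_tools` is hypothetical, and the tool list is taken from step 2 (mpicc and mpif90 matter only for MPI builds).

```shell
# Report which of the given tools are missing from PATH.
# Prints "missing: <tool>" for each one not found and
# returns non-zero if at least one tool is missing.
check_tools() {
    missing=0
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            echo "missing: $tool"
            missing=1
        fi
    done
    return "$missing"
}

# Tools named in step 2; mpicc and mpif90 are only needed for MPI builds.
check_tools nvcc gcc gfortran mpicc mpif90 \
    || echo "Some tools are missing; contact your system administrator."
```

If nothing is printed, all five tools were found and the configure and build steps can proceed.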

Running Jobs

Make sure that

GPU_MODE = .true.

is set in the Par_file, or the program will not run on GPUs.
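When switching between CPU and GPU runs, the flag can be set from the command line instead of editing the file by hand. The sketch below assumes the setting appears on a single line starting with `GPU_MODE` followed by `=` and a value; the function name `enable_gpu_mode` and the path in the usage note are hypothetical. Any trailing comment on that line is discarded.

```shell
# Set GPU_MODE to .true. in a parameter file, in place.
# $1: path to the parameter file that holds the GPU_MODE setting.
enable_gpu_mode() {
    par_file=$1
    # Replace whatever follows "GPU_MODE =" with .true.; a backup is kept as <file>.bak.
    sed -i.bak 's/^\(GPU_MODE[[:space:]]*=[[:space:]]*\).*/\1.true./' "$par_file"
    # Return non-zero if the file still does not contain the expected setting.
    grep -q '^GPU_MODE[[:space:]]*=[[:space:]]*\.true\.' "$par_file"
}
```

For example, `enable_gpu_mode DATA/Par_file` would update a parameter file at that (assumed) location and fail if no `GPU_MODE` line was found.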

Running jobs is then a three-stage process. Here we take the example of running on 4 MPI tasks. Note that all three stages should be run with the same number of MPI tasks. For GPU runs, use 1 MPI task per GPU. For CPU-only runs, you will typically use 1 MPI task per core. The Par_file and Mesh_Par_file need to be modified for each different case.

1. Run the in-house mesher

mpirun -np 4 ./bin/xmeshfem3D

2. Generate the databases

mpirun -np 4 ./bin/xgenerate_databases

3. Run the simulation

mpirun -np 4 ./bin/xspecfem3D
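Because all three stages must use the same task count, it can help to wrap them in one function so the count is given only once. This is a sketch under stated assumptions: the function name `run_stages` and the `LAUNCHER` variable are hypothetical conveniences, and the script is expected to be run from the root of the installation so that `./bin/` resolves.

```shell
# Run the three stages in order with the same number of MPI tasks,
# stopping at the first stage that fails.
# $1: number of MPI tasks (4 in the example above).
# LAUNCHER: MPI launcher command, defaults to mpirun
#           (set LAUNCHER="echo mpirun" for a dry run that only prints commands).
run_stages() {
    np=$1
    launcher=${LAUNCHER:-mpirun}
    for exe in xmeshfem3D xgenerate_databases xspecfem3D; do
        echo "Running $exe on $np MPI tasks"
        # $launcher is intentionally unquoted so a multi-word launcher splits into words.
        $launcher -np "$np" "./bin/$exe" || { echo "$exe failed" >&2; return 1; }
    done
}
```

Calling `run_stages 4` reproduces the three commands above; a later stage is not started if an earlier one returns an error.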

Benchmarks

This section walks through the process of testing the performance on your system and shows performance on a typical configuration. The example described here is based on the “simple model” example found in “specfem3d/EXAMPLES/meshfem3D_examples/simple_model”.

tar -xzvf SPECFEM3D_Cartesian_GPU_READY_FILES_v2.tgz

3. Copy the input_cartesian_v4.tar to your root specfem3d installation folder

Expected Performance Results

This section provides expected performance benchmarks across single and multi-node systems.

Recommended System Configurations

Hardware Configuration

PC

| Parameter | Specs |
| --- | --- |
| CPU Architecture | x86 |
| System Memory | 16-32GB |
| CPUs | 2 (10+ cores, 2 GHz) |
| GPU Model | GeForce® GTX TITAN X |
| GPUs | 1-4 |

Servers

| Parameter | Specs |
| --- | --- |
| CPU Architecture | x86 |
| System Memory | 64GB |
| CPUs/Node | 2 (10+ cores, 2+ GHz) |
| Total # of Nodes | 1 |
| GPU Model | NVIDIA® Tesla® P100, Tesla® K80 |
| GPUs/Node | 1-4 |

Software Configuration

Software stack

| Parameter | Version |
| --- | --- |
| OS | CentOS 7.3 |
| GPU Driver | 375.20 or newer |
| CUDA Toolkit | 8.0 or newer |
| PGI Compilers | 16.9 |
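The "or newer" requirements above can be checked with a small version comparison. The sketch below is an illustration only; the helper name `version_ge` is hypothetical, and it relies on `sort -V` (version sort), which is available in GNU coreutils but may be missing on other systems.

```shell
# Return success if version $1 is greater than or equal to version $2.
# Both versions are sorted with version-aware ordering; if the smaller
# of the two is $2, then $1 meets the minimum.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Example usage (requires the NVIDIA driver to be installed):
#   driver=$(nvidia-smi --query-gpu=driver_version --format=csv,noheader | head -n 1)
#   version_ge "$driver" 375.20 || echo "GPU driver is older than 375.20"
```

The same helper can be applied to the CUDA Toolkit release reported by `nvcc --version`, compared against 8.0.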