AI is ushering in a new era of global innovation. From powering human ingenuity to counter the spread of infectious diseases, to building smart cities and revolutionizing analytics for all industries, AI is providing teams with the super-human power needed to do their life’s work.

Artificial Intelligence

What Is AI?

In its most fundamental form, AI is the capability of a computer program or a machine to think and learn and take actions without being explicitly encoded with commands. AI can be thought of as the development of computer systems that can perform tasks autonomously, ingesting and analyzing enormous volumes of data, then recognizing patterns in that data. The large and growing AI field of study is always oriented around developing systems that perform tasks that would otherwise require human intelligence to complete—only at speeds beyond any individual’s or group’s capabilities. For this reason, AI is broadly seen as both disruptive and highly transformational.

A key benefit of AI systems is the ability to learn from experience or learn patterns from data, adjusting on their own as new inputs and data are fed into them. This self-learning allows AI systems to accomplish a stunning variety of tasks, including image recognition; natural language speech recognition; language translation; crop yield predictions; medical diagnostics; navigation; loan risk analysis; automation of tedious, error-prone manual tasks; and hundreds of other use cases.

AI Growth Powered By GPU Advances

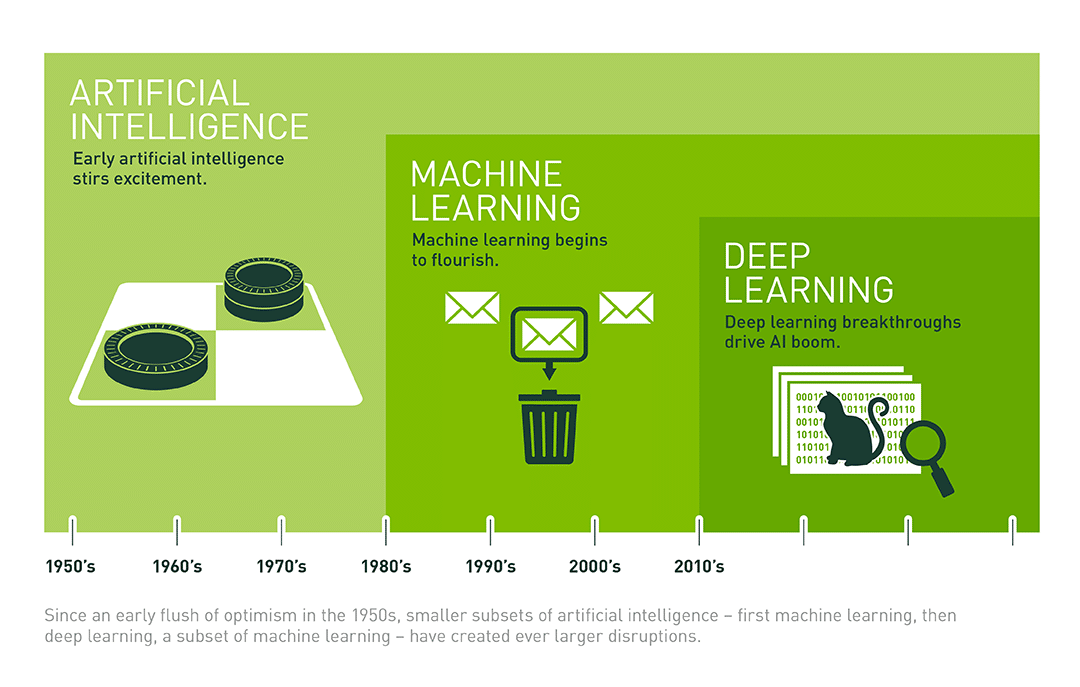

Though the theory and early practice of AI go back three-quarters of a century, it wasn’t until the 21st century that practical AI business applications blossomed. This was the result of a combination of huge advances in computing power and the enormous amounts of data available. AI systems combine vast quantities of data with ultra-fast iterative processing hardware and highly intelligent algorithms that allow the computer to ‘learn’ from data patterns or data features.

The ideal hardware for the heavy work of AI systems is the graphics processing unit, or GPU. These specialized processors are built to run many computations in parallel, which makes them fast and powerful for AI workloads. The massive amounts of data that are essentially the fuel for AI engines come from a wide variety of sources, such as the Internet of Things (IoT); social media; historical databases; operational data sources; various public and governmental sources; the global science and academic communities; even genomic sources. By combining GPUs with enormous data stores and almost infinite storage capabilities, AI is positioned to make an enormous impact on the business world.

Among the many and growing technologies propelling AI to broad usage are application programming interfaces, or APIs. These are essentially highly portable bundles of code that allow developers and data scientists to integrate AI functionality into current products and services, expanding the value of existing investments. For example, APIs can add Q&A capabilities that describe data or call out interesting insights and patterns.

AI Challenges

It isn’t an overstatement to say that AI offers the capability to transform the productivity potential of the entire global economy. A study by PwC projected that AI’s contribution to the global economy will total nearly $17 trillion within ten years. To participate in this AI-inspired economy, organizations need to overcome challenges such as the following.

Acquiring raw computing power.

The processing power needed to build AI systems and leverage techniques like machine learning, image processing, and language understanding is enormous. NVIDIA is the choice for GPUs and AI SDKs among AI development teams around the world that seek to infuse AI into existing products and services as they build out new and exciting ‘native AI’ services.

Dealing with data bias.

As with any other computer system, AI systems are only as good as the data fed into them. Bad data can come from business, government, or other sources and contain racial, gender, or other biases. Developers and data scientists must take extra precautions to prevent bias in AI data or risk undermining the trust people have in what AI systems actually learn.

AI Use Cases

Healthcare

The world’s leading organizations are equipping their doctors and scientists with AI, helping them transform lives and the future of research. With AI, they can tackle interoperable data, meet the increasing demand for personalized medicine and next-generation clinics, develop intelligent applications unique to their workflows, and accelerate areas like image analysis and life science research. Use cases include:

- Pathology. Each year, major hospitals take millions of medical scans and tissue biopsies, which are often scanned to create digital pathology datasets. Today, doctors and researchers use AI to comprehensively and efficiently analyze these datasets to classify a myriad of diseases and reduce mistakes when different pathologists disagree on a diagnosis.

- Patient care. The challenge today, as always, is for clinicians to get the right treatments to patients as quickly and efficiently as possible. This need is especially acute in intensive care units. There, doctors using AI tools can leverage hourly vital sign measurements to predict eight hours in advance whether patients will need treatments to help them breathe, blood transfusions, or interventions to boost cardiac functions.

Retail

An Accenture report estimates that AI has the potential to create $2.2 trillion worth of value for retailers by 2035 by boosting growth and profitability. As it undergoes a massive digital transformation, the industry can increase business value by using AI to improve asset protection, deliver in-store analytics, and streamline operations.

- Demand prediction. With over 100,000 different products in its 4,700 U.S. stores, the Walmart Labs data science team must predict demand for 500 million item-by-store combinations every week. By performing forecasting with the NVIDIA RAPIDS™ suite of open-source data science and machine learning libraries built on NVIDIA CUDA-X™ AI and NVIDIA GPUs, the Walmart team is able to engineer machine learning features 100X faster and train algorithms 20X faster.

- AiFi is currently pilot testing NanoStore, its 24/7, autonomous, checkout-free store, with retail giants and universities. NanoStores hold over 500 different products and use image recognition, powered by NVIDIA T4 Tensor Core GPUs, to capture merchandise choices and add them to the customer’s tab.

Telecommunications

AI is opening up new waves of communication in the telecommunications industry. By tapping into the power of GPUs and the 5G network, smart services can be brought to the edge, simplifying deployment and enabling them to reach their full potential.

- 2Hz, Inc., is bringing clarity to live calls with noise-suppression technology powered by NVIDIA T4 and V100 GPUs. 2Hz’s deep learning algorithms scale up to 20X more than CPUs, and by running NVIDIA® TensorRT™ on GPUs, 2Hz meets the 12 millisecond (ms) latency requirement for real-time communications.

- 5G will deliver multiple computing capabilities, including gigabit speeds with latencies under 20ms. This has led the Verizon Envrmnt team to deploy powerful NVIDIA GPUs to beef up Verizon’s high-performance computing operations and create a distributed data center. 5G will also enable devices to become thinner, lighter, and more battery efficient, opening the door to memory-intensive parallel processing that can power rendering, deep learning, and computer vision.

Financial Services

AI solutions have found a welcoming home in the dynamic world of financial services, with scores of established and startup vendors rushing these solutions to market. The most popular applications to date include:

- Portfolio management and optimization. Historically, calculating portfolio risk has been a largely manual and therefore extremely time-consuming process. Using AI, banks can undertake highly complex queries in seconds without having to move sensitive data.

- Risk management. Like portfolio calculations, risk management calculations are often done in overnight batches, so opportunities that arise around the clock are missed. AI tools can calculate risk using available data in virtually real time, resulting in increased portfolio performance and improved customer experience.

- Fraud detection. With the ability to ingest enormous volumes of data and search them instantly for anomalies, AI solutions can flag suspect patterns and trigger specific actions.
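The fraud-detection idea in the list above, scanning data for anomalies and flagging them, can be illustrated with a minimal sketch. This is not how any particular vendor's product works: a modified z-score (based on the median and the median absolute deviation) stands in for a trained model, and the transaction amounts, the `flag_anomalies` helper, and the `threshold` value are all assumptions made for illustration.

```python
from statistics import median


def flag_anomalies(amounts, threshold=3.5):
    """Return indices of amounts whose modified z-score exceeds threshold.

    Uses the median and the median absolute deviation (MAD), which are less
    distorted by the outliers we are looking for than the mean and stdev.
    """
    center = median(amounts)
    mad = median(abs(a - center) for a in amounts)
    if mad == 0:
        return []  # no spread to measure against, so flag nothing
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score.
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - center) / mad > threshold
    ]


# Hypothetical card transactions in dollars; one is far outside the usual range.
transactions = [42.0, 38.5, 51.2, 47.9, 40.1, 2950.0, 44.3, 39.8]
suspects = flag_anomalies(transactions)
print(suspects)  # indices of transactions to send for review
```

In a real system the flagged indices would trigger a follow-up action, such as holding the transaction or alerting an analyst, and the scoring rule would typically be learned from historical data rather than fixed by hand.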

Industrial

One of the most common AI use cases is the crunching of enormous data streams from various IoT devices for predictive maintenance. This can pertain to the monitoring of the condition of a single piece of equipment, such as an electrical generator, or of an entire manufacturing facility like a factory floor. AI systems harness data not only gathered and transmitted from the devices, but also from various external sources, such as weather logs. Major railways use AI to predict failures, applying the fixes before failure occurs—thereby keeping the trains running on time. AI predictive maintenance on factory floors has been shown to reduce production line downtimes dramatically.


AI as a tool

Data scientists think of AI as a tool and as a procedure that rests on top of other procedures or methodologies used for deep analysis of data. In addition to languages like R and Python, data scientists work with data from conventional databases, often extracting data using SQL queries. Using certain AI tools, they can quickly undertake tasks to classify and perform predictions on these more conventional data sources.
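As a rough illustration of that workflow, the sketch below pulls rows out of a database with a SQL query and then classifies new records. Everything in it is hypothetical: the `loans` table, its columns and values, and the choice of a nearest-centroid classifier, which is used only because it fits in a few lines of standard-library Python. A real project would more likely use a dedicated machine learning library.

```python
import sqlite3

# Build a small in-memory database standing in for a conventional data source.
# The table name, columns, and rows are hypothetical (amounts in thousands).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE loans (income REAL, debt REAL, defaulted INTEGER)")
conn.executemany(
    "INSERT INTO loans VALUES (?, ?, ?)",
    [
        (85.0, 10.0, 0), (92.0, 15.0, 0), (78.0, 12.0, 0),
        (30.0, 40.0, 1), (25.0, 35.0, 1), (35.0, 45.0, 1),
    ],
)

# Step 1: extract labeled rows with an ordinary SQL query.
rows = conn.execute("SELECT income, debt, defaulted FROM loans").fetchall()
conn.close()


def centroid(points):
    """Average each feature across a list of (income, debt) points."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(2))


# Step 2: "train" by computing one centroid per class label.
centroids = {
    label: centroid([(inc, debt) for inc, debt, y in rows if y == label])
    for label in {y for _, _, y in rows}
}


def predict(income, debt):
    """Classify a new applicant by the nearest class centroid."""
    def sq_dist(c):
        return (income - c[0]) ** 2 + (debt - c[1]) ** 2
    return min(centroids, key=lambda label: sq_dist(centroids[label]))


print(predict(80.0, 14.0))  # applicant close to the repaid-loan cluster
print(predict(28.0, 42.0))  # applicant close to the defaulted-loan cluster
```

The point of the sketch is the division of labor: SQL does the extraction, and the learning step runs on whatever rows the query returns.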

Why AI matters to...

Machine Learning (ML) Researchers

Many researchers work on AI because it can be applied to almost any problem. The availability of large datasets and huge computational power has helped ML researchers produce breakthrough research in various domains and revolutionize industries such as autonomous vehicles, finance, and agriculture.

Software Developers

AI hasn’t advanced to the point where it can write software on its own, though enthusiasts say that day isn’t far off. But various organizations already use AI to help develop and then test software solutions, particularly custom software. In the past two years, software vendors have brought to market an ever-growing number and variety of AI-enabled software development tools. Some of the hottest and best-funded startups are those pioneering AI development tools.

In one particularly exciting application of AI development tools, AI boosted project management by ingesting enormous quantities of data from previous development projects. Then, the tools accurately predicted the various tasks, resources, and schedules that would be needed to manage new projects. This doesn’t mean AI can write software or replace developers, but it is making the time these valuable developers spend creating custom software far more efficient.

Why AI Is Better on an Accelerated Computing Platform

AI models, especially deep neural networks (DNNs), can be very large and require massive computing power. Training them is highly parallelizable because many of the computations are independent of each other, which makes training a good use case for distributed processing on GPUs. With recent advances in GPUs, several vision and language AI models can now be trained in under a minute.
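To make the point about independent computations concrete, here is a minimal sketch of data-parallel training on a toy one-parameter model. It is an illustration under stated assumptions, not NVIDIA's implementation: the `shard_gradient` and `train` helpers, the data, and the hyperparameters are invented, and a thread pool merely stands in for GPU workers (CPython threads will not actually speed up this arithmetic).

```python
from concurrent.futures import ThreadPoolExecutor


def shard_gradient(w, shard):
    """Gradient of mean squared error for y = w * x over one data shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def train(data, workers=4, steps=200, lr=0.01):
    """Fit w by gradient descent, computing shard gradients concurrently.

    Assumes len(data) is divisible by workers so all shards are equal-size.
    """
    size = len(data) // workers
    shards = [data[i * size:(i + 1) * size] for i in range(workers)]
    w = 0.0
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for _ in range(steps):
            # Each shard's gradient depends only on w and that shard,
            # so the calls can run in any order or at the same time.
            grads = list(pool.map(lambda s: shard_gradient(w, s), shards))
            # Averaging equal-size shard gradients gives the full-batch gradient.
            w -= lr * sum(grads) / len(grads)
    return w


# Hypothetical data generated from y = 3x, so training should recover w near 3.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
w = train(data)
print(round(w, 3))
```

The only synchronization point is the averaging step at the end of each iteration. Everything before it can be spread across as many workers as there are shards, which is the property that GPU clusters exploit at much larger scale.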

NVIDIA is supercharging AI computing

A long pedigree in artificial intelligence

NVIDIA invented the GPU in 1999. Then with the creation of the NVIDIA CUDA® programming model and Tesla® GPU platform, NVIDIA brought parallel processing to general-purpose computing. With AI innovation and high-performance computing converging, NVIDIA GPUs powering AI solutions are enabling the world’s largest industries to tap into accelerated computing and bring AI to the edge.

Big breakthroughs with NVIDIA-powered neural networks

Building game-changing AI applications begins with training neural networks. NVIDIA DGX-2™ is a powerful tool for AI training, using 16 GPUs to deliver 2 petaFLOPS of training performance to data teams. DGX-2 set world records on MLPerf, a set of industry benchmarks designed to test deep learning. The newer NVIDIA DGX™ A100 is the most powerful system for all AI workloads, offering compute density, performance, and flexibility in the world’s first 5 petaFLOPS AI system. With the extreme IO performance of NVIDIA Mellanox InfiniBand networking, both DGX-2 and DGX A100 systems can quickly scale up to supercomputer-class NVIDIA SuperPODs.

Boosting AI in the Cloud

Trained AI applications are deployed in large-scale and highly complex cloud data centers serving up voice, video, image, and other services to billions of users. With the rise of conversational AI, the demand is increasing for these systems to work extremely fast to make these services truly useful. NVIDIA TensorRT software and the NVIDIA T4 GPU combine to optimize, validate, and accelerate these demanding networks.

Meanwhile, as AI spills out of the cloud and into the edge where mountains of raw data are generated by industries worldwide, the NVIDIA EGX™ platform puts AI performance closer to the data to drive real-time decisions when and where they’re needed.