NVIDIA Triton Inference Server

Deploy, run, and scale AI for any application on any platform.

Inference for Every AI Workload

Run inference on trained machine learning or deep learning models from any framework on any processor—GPU, CPU, or other—with NVIDIA Triton Inference Server™. Part of the NVIDIA AI platform and available with NVIDIA AI Enterprise, Triton Inference Server is open-source software that standardizes AI model deployment and execution across every workload.

The Benefits of Triton Inference Server

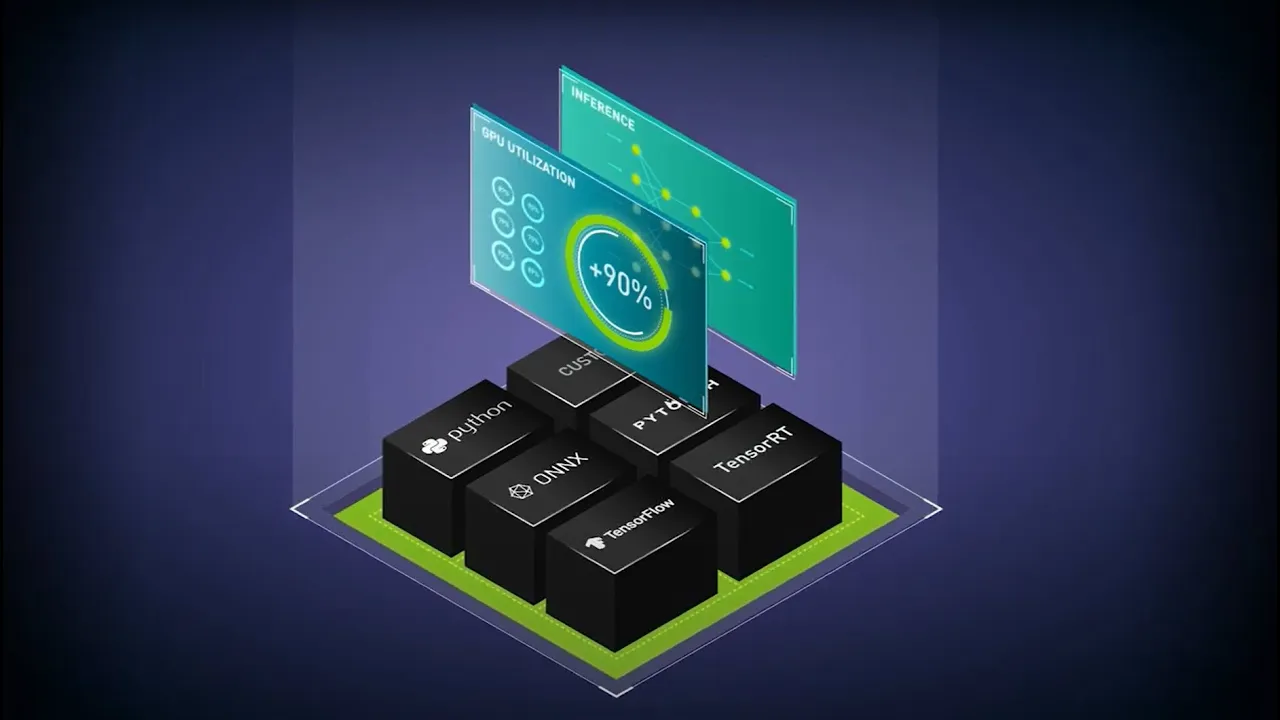

Supports All Training and Inference Frameworks

Deploy AI models from any major framework with Triton Inference Server, including TensorFlow, PyTorch, Python, ONNX, NVIDIA® TensorRT™, RAPIDS™ cuML, XGBoost, scikit-learn RandomForest, OpenVINO, custom C++, and more.

High-Performance Inference on Any Platform

Maximize throughput and utilization with dynamic batching, concurrent model execution, optimized model configuration, and streaming of audio and video. Triton Inference Server supports all NVIDIA GPUs, x86 and Arm CPUs, and AWS Inferentia.
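As a rough illustration of how dynamic batching and concurrent execution are switched on, the Python sketch below renders the text of a Triton model configuration file (config.pbtxt). The helper `render_model_config`, the model name `my_model`, and every numeric value are assumptions made for this example. Input and output tensor definitions are left out for brevity, so treat the result as a starting point rather than a complete configuration.

```python
def render_model_config(name, backend, max_batch_size,
                        preferred_batch_sizes, max_queue_delay_us,
                        instance_count, instance_kind="KIND_GPU"):
    """Return config.pbtxt text enabling dynamic batching and several instances.

    Hypothetical helper for illustration; it is not part of Triton.
    """
    preferred = ", ".join(str(size) for size in preferred_batch_sizes)
    lines = [
        f'name: "{name}"',
        f'backend: "{backend}"',
        f"max_batch_size: {max_batch_size}",
        # Dynamic batching: the server groups individual requests into a
        # batch, waiting at most the queue delay for more requests to arrive.
        "dynamic_batching {",
        f"  preferred_batch_size: [ {preferred} ]",
        f"  max_queue_delay_microseconds: {max_queue_delay_us}",
        "}",
        # Concurrent execution: run several copies of the model side by side.
        "instance_group [",
        "  {",
        f"    count: {instance_count}",
        f"    kind: {instance_kind}",
        "  }",
        "]",
    ]
    return "\n".join(lines) + "\n"


# Example values only; tune them for the actual model and hardware.
config_text = render_model_config(
    name="my_model",
    backend="onnxruntime",
    max_batch_size=16,
    preferred_batch_sizes=[4, 8],
    max_queue_delay_us=100,
    instance_count=2,
)
print(config_text)
```

A larger queue delay generally trades some latency for fuller batches, so the right value depends on the application's latency budget.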

Open Source and Designed for DevOps and MLOps

Integrate Triton Inference Server into DevOps and MLOps solutions such as Kubernetes for scaling and Prometheus for monitoring. It can also be used in all major cloud and on-premises AI and MLOps platforms.
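To give a feel for the monitoring side, here is a minimal Python sketch that parses metrics in the Prometheus text format and computes a request success rate. The sample text is illustrative, not captured from a real server, and `parse_metrics` is a hypothetical helper that ignores optional timestamps and escaped label values. A running Triton server typically serves such text on its metrics endpoint (port 8002 by default), which Prometheus scrapes directly.

```python
import re

# Illustrative sample in Prometheus text format (assumed values).
SAMPLE_METRICS = """\
# HELP nv_inference_request_success Number of successful inference requests
# TYPE nv_inference_request_success counter
nv_inference_request_success{model="my_model",version="1"} 128
nv_inference_request_failure{model="my_model",version="1"} 2
"""

# metric_name{label="value",...} number
_LINE = re.compile(
    r"^(?P<name>[A-Za-z_:][A-Za-z0-9_:]*)"
    r"(?:\{(?P<labels>[^}]*)\})?"
    r"\s+(?P<value>\S+)$"
)
_LABEL = re.compile(r'(\w+)="([^"]*)"')


def parse_metrics(text):
    """Return a list of (name, labels, value) tuples, skipping comment lines."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        match = _LINE.match(line)
        if match is None:
            continue  # simplified parser: skip anything it does not understand
        labels = dict(_LABEL.findall(match.group("labels") or ""))
        samples.append((match.group("name"), labels, float(match.group("value"))))
    return samples


samples = parse_metrics(SAMPLE_METRICS)
totals = {name: value for name, _labels, value in samples}
success = totals["nv_inference_request_success"]
failure = totals["nv_inference_request_failure"]
success_rate = success / (success + failure)
print(f"success rate: {success_rate:.3f}")
```

In production, Prometheus collects and stores these counters itself; a hand-written parser like this is mostly useful for quick checks and tests.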

Enterprise-Grade Security, Manageability, and API Stability

NVIDIA AI Enterprise, including NVIDIA Triton Inference Server, is a secure, production-ready AI software platform designed to accelerate time to value with support, security, and API stability.

Explore the Features and Tools of NVIDIA Triton Inference Server

Large Language Model Inference

Triton offers low latency and high throughput for large language model (LLM) inference. It supports TensorRT-LLM, an open-source library for defining, optimizing, and executing LLMs for inference in production.

Model Ensembles

Triton Model Ensembles lets you execute AI workloads with multiple models, pipelines, and pre- and postprocessing steps. Different parts of an ensemble can run on CPU or GPU, and a single ensemble can combine models from multiple frameworks.
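The data flow of an ensemble can be pictured with a small Python sketch. This is a conceptual model only, not Triton's implementation: each step maps ensemble-level tensor names onto its own input and output names, and the steps run in order. The function `run_ensemble`, the three toy steps, and all tensor names are assumptions made for illustration.

```python
def run_ensemble(steps, request_tensors):
    """Run steps in order, passing named tensors between them."""
    pool = dict(request_tensors)  # ensemble-level tensors, keyed by name
    for step in steps:
        # Map ensemble tensor names onto the step's own input names.
        inputs = {
            model_input: pool[ensemble_name]
            for model_input, ensemble_name in step["input_map"].items()
        }
        outputs = step["model"](**inputs)
        # Publish the step's outputs under ensemble tensor names.
        for model_output, ensemble_name in step["output_map"].items():
            pool[ensemble_name] = outputs[model_output]
    return pool


def preprocess(raw_text):
    """Toy preprocessing step: lowercase and split into tokens."""
    return {"tokens": raw_text.lower().split()}


def score_model(tokens):
    """Toy stand-in for a model: the score is the token count."""
    return {"score": len(tokens)}


def postprocess(score):
    """Toy postprocessing step: turn the score into a label."""
    return {"label": "long" if score > 3 else "short"}


steps = [
    {"model": preprocess, "input_map": {"raw_text": "TEXT"},
     "output_map": {"tokens": "TOKENS"}},
    {"model": score_model, "input_map": {"tokens": "TOKENS"},
     "output_map": {"score": "SCORE"}},
    {"model": postprocess, "input_map": {"score": "SCORE"},
     "output_map": {"label": "LABEL"}},
]

result = run_ensemble(steps, {"TEXT": "Deploy run and scale AI"})
print(result["LABEL"])
```

In a real ensemble each step would be a deployed model, possibly on a different device or framework, and the server would route tensors between them without a round trip to the client.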

NVIDIA PyTriton

PyTriton lets Python developers bring up Triton with a single line of code and use it to serve models, simple processing functions, or entire inference pipelines to accelerate prototyping and testing.
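A minimal sketch of what serving a simple processing function with PyTriton can look like is shown below. It assumes the `pytriton` and `numpy` packages are installed and follows the pattern of the PyTriton quick start as best understood here, so check class and parameter names against the installed version. The model name `Doubler`, the tensor names `data` and `result`, and the batch size are made up for the example.

```python
def double(data):
    """Inference callable: receives a batched array, returns named outputs.

    Works on any value that supports `*`; PyTriton would pass a NumPy array.
    """
    return {"result": data * 2}


def serve():
    """Start Triton and serve `double` until interrupted (this call blocks)."""
    # Imported here so `double` can be tried without PyTriton installed.
    import numpy as np
    from pytriton.decorators import batch
    from pytriton.model_config import ModelConfig, Tensor
    from pytriton.triton import Triton

    with Triton() as triton:
        triton.bind(
            model_name="Doubler",  # hypothetical model name
            infer_func=batch(double),  # same effect as decorating with @batch
            inputs=[Tensor(name="data", dtype=np.float32, shape=(-1,))],
            outputs=[Tensor(name="result", dtype=np.float32, shape=(-1,))],
            config=ModelConfig(max_batch_size=64),
        )
        triton.serve()
```

Calling `serve()` from a script would bring up the server with the function bound as a model. The callable itself stays a plain Python function, which keeps it easy to unit test during prototyping.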

NVIDIA Triton Model Analyzer

Model Analyzer reduces the time needed to find the optimal model deployment configuration, including batch size, precision, and the number of concurrent execution instances. It helps select the configuration that best meets application latency, throughput, and memory requirements.
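The selection step can be sketched in a few lines of Python: given measurements for several candidate configurations, keep those that satisfy the latency and memory limits, then take the one with the highest throughput. This only illustrates the idea of constrained selection and is not Model Analyzer's actual search algorithm. The `Measurement` type, the `pick_best` helper, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Measurement:
    """One profiled configuration (all values are made-up examples)."""
    max_batch_size: int
    instance_count: int
    p99_latency_ms: float
    throughput_infer_per_sec: float
    gpu_memory_mb: float


def pick_best(measurements, max_latency_ms, max_memory_mb):
    """Return the highest-throughput measurement within both limits, or None."""
    feasible = [
        m for m in measurements
        if m.p99_latency_ms <= max_latency_ms and m.gpu_memory_mb <= max_memory_mb
    ]
    if not feasible:
        return None
    return max(feasible, key=lambda m: m.throughput_infer_per_sec)


measurements = [
    Measurement(1, 1, 4.0, 250.0, 900.0),
    Measurement(8, 1, 12.0, 640.0, 1100.0),
    Measurement(8, 2, 14.0, 1100.0, 2000.0),
    Measurement(16, 2, 28.0, 1400.0, 2300.0),
]

best = pick_best(measurements, max_latency_ms=20.0, max_memory_mb=2048.0)
print(best)
```

Here the configuration with the highest raw throughput is rejected because it exceeds both limits, which is why the constraints have to be applied before ranking.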

Hear From Experts

Watch expert-led talks from our How to Get Started With AI Inference series. These videos let you explore, at your own pace, a full-stack approach to AI inference and show how to optimize the AI inference workflow to lower cloud expenses and boost user adoption.

Get Started With Triton

Purchase NVIDIA AI Enterprise With Triton for Production Deployment

Purchase NVIDIA AI Enterprise, which includes Triton Inference Server for production inference.

Download Containers and Code for Development

Triton Inference Server containers are available on NVIDIA NGC™ and as open-source code on GitHub.

Find More Resources

Stay up to date on the latest AI inference news from NVIDIA.