The NVIDIA Quantum InfiniBand Platform

Bring end-to-end high-performance networking to scientific computing, AI, and cloud data centers.

Introduction

NVIDIA Quantum InfiniBand Networking Solutions

Complex workloads demand ultra-fast processing of high-resolution simulations, extreme-size datasets, and highly parallelized algorithms. As these needs continue to grow, NVIDIA Quantum InfiniBand, the world’s only fully offloadable In-Network Computing platform, provides dramatic leaps in performance to achieve faster time to discovery at lower cost and with less complexity.

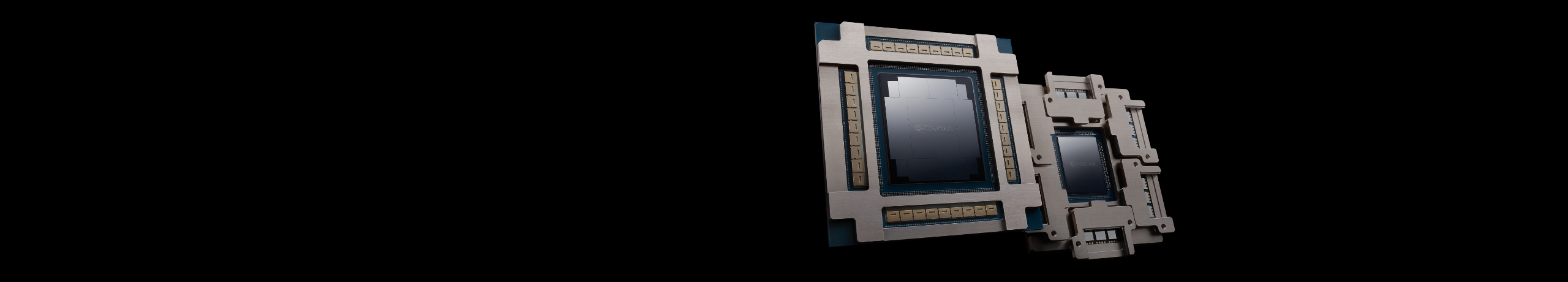

Products

The NVIDIA Quantum InfiniBand Platform

InfiniBand Adapters

As part of the NVIDIA Quantum InfiniBand Networking Platform, NVIDIA® ConnectX® InfiniBand host channel adapters (HCAs) provide ultra-low latency, extreme throughput, and innovative NVIDIA In-Network Computing engines, delivering the acceleration, scalability, and feature-rich technology that modern workloads need.

Data Processing Units (DPUs)

NVIDIA BlueField® DPUs combine powerful computing, high-speed networking, and extensive programmability to deliver software-defined, hardware-accelerated solutions for the most demanding workloads. From accelerated AI and scientific computing to cloud-native supercomputing, BlueField redefines what’s possible.

InfiniBand Switches

NVIDIA Quantum InfiniBand switch systems deliver the highest performance and port density available. They combine innovative capabilities such as NVIDIA Scalable Hierarchical Aggregation and Reduction Protocol (SHARP)™ with advanced management features, including self-healing network capabilities, quality of service, and enhanced virtual lane mapping. Together with NVIDIA In-Network Computing acceleration engines, these provide a performance boost for industrial, AI, and scientific applications.

Routers and Gateway Systems

NVIDIA Quantum InfiniBand systems provide the highest scalability and subnet isolation using InfiniBand routers and InfiniBand-to-Ethernet gateway systems. The gateway systems provide a scalable, efficient way to connect InfiniBand data centers to Ethernet infrastructures.

Long-Haul Systems

NVIDIA MetroX® long-haul systems seamlessly connect remote NVIDIA Quantum InfiniBand data centers, storage, and other InfiniBand platforms. They extend the reach of InfiniBand up to 40 kilometers, enabling native InfiniBand connectivity between remote data centers, or between a data center and remote storage infrastructure, for high availability and disaster recovery.

Cables and Transceivers

LinkX® cables and transceivers are designed to maximize the performance of HPC networks, which require high-bandwidth, low-latency, highly reliable connections between InfiniBand elements.

Capabilities

How InfiniBand Enhances the Network

Software

The InfiniBand Software Stack

Resources

The Latest in InfiniBand

Next Steps

Ready to Get Started?

Configure Your Cluster

Use this online tool to configure clusters based on fat-tree topologies with two levels of switch systems, or on Dragonfly+ topologies.
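
To give a rough sense of what a fat tree with two levels of switch systems implies for cluster size, the sketch below estimates the switch and host counts of a non-blocking design. It is an illustration only, not the configurator's method: the function name and the `radix` parameter are hypothetical, and it assumes identical switches, a single link between each leaf and each spine, and an even split of leaf ports between hosts and uplinks.

```python
def two_level_fat_tree(radix: int) -> dict:
    """Size a non-blocking two-level fat tree built from identical switches.

    Assumptions (illustrative, not taken from any product specification):
    every switch has `radix` ports, and each leaf switch uses half of them
    for hosts and half for uplinks, one uplink to each spine switch.
    """
    if radix < 2 or radix % 2:
        raise ValueError("radix must be an even number >= 2")
    hosts_per_leaf = radix // 2   # host-facing ports on each leaf
    spine_switches = radix // 2   # one uplink from every leaf to every spine
    leaf_switches = radix         # each spine port connects to a distinct leaf
    return {
        "leaf_switches": leaf_switches,
        "spine_switches": spine_switches,
        "max_hosts": leaf_switches * hosts_per_leaf,
    }


# Example with a hypothetical 64-port switch.
print(two_level_fat_tree(64))
```

Under these assumptions the host count grows with the square of the switch port count (radix² / 2), which is why larger clusters call for higher-radix switches, a third switch level, or a different topology such as Dragonfly+.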

Take Networking Courses

Explore deep technical training topics on NVIDIA Quantum InfiniBand networking through the NVIDIA Academy.

Ready to Purchase?

Visit the NVIDIA Marketplace to learn how to purchase NVIDIA networking solutions.