GeForce RTX 40 Series Community Q&A: You Asked, We Answered

Following our GeForce Beyond Announcements, we hosted a community Q&A on r/NVIDIA and invited seven NVIDIA Product Managers to answer your questions. While we could not answer all questions, we found the most common ones and our experts responded.

GeForce RTX 40 Series

Q: What makes the GeForce RTX 4080 16 GB and 12 GB graphics cards keep the same “4080” name if they have completely different amounts of CUDA cores and are different chips?

The GeForce RTX 4080 16GB and 12GB naming is similar to the naming of two versions of RTX 3080 that we had last generation, and others before that. There is an RTX 4080 configuration with a 16GB frame buffer, and a different configuration with a 12GB frame buffer. One product name, two configurations.

The 4080 12GB is an incredible GPU, with performance exceeding our previous generation flagship, the RTX 3090 Ti, and 3x the performance of RTX 3080 Ti with support for DLSS 3, so we believe it’s a great 80-class GPU. We know many gamers may want a premium option, so the RTX 4080 16GB comes with more memory and even more performance. The two versions will be clearly identified on packaging, product details, and retail so gamers and creators can easily choose the best GPU for themselves.

Q: How does the RTX 40 Series perform compared to the 30 series if DLSS frame generation isn't turned on?

You can find more performance information here.

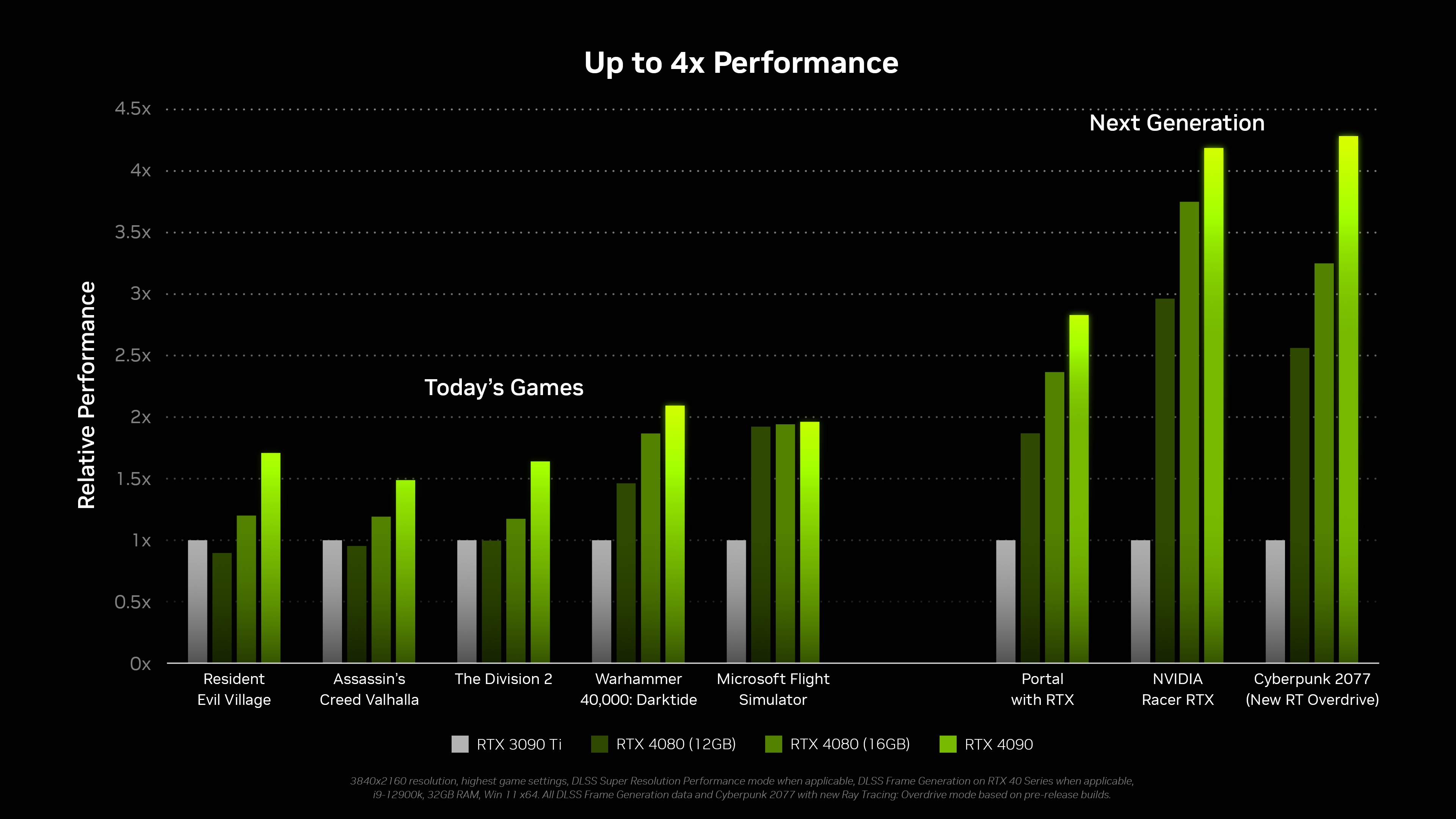

This chart has DLSS turned on when supported, but there are some games like Division 2 and Assassin's Creed Valhalla in the chart that don’t have DLSS, so you can see performance compared to our fastest RTX 30 Series GPU without DLSS.

Q: There's been a great deal of content on the visual and gaming stuff, but I'm more interested in the CUDA capabilities. The number of cores is down against the corresponding RTX 30xx models, but the supported capabilities are upgraded. How much faster/better/more efficient are the CUDA cores on the RTX 40xx in existing workflows?

CUDA application workflows can be more diverse than gaming. In general, the significant core clock increase means that shader horsepower is greatly increased generation on generation, ranging from 30% to 120%. CUDA applications that have more challenging memory access patterns can also benefit from the larger L2 cache. For creator workflows, the GeForce RTX 40 Series is up to 2x faster in offline rendering apps like Blender or V-Ray, and paired with DLSS 3 it’s up to 4x faster in real-time rendering apps like Omniverse, Unreal, or Unity. This is all while drawing equal or less power than the previous generation.

Q: What kind of power adapters are required for GeForce RTX 4090 & RTX 4080 (16GB) & RTX 4080 (12GB)?

The RTX 4090 uses the new PCIe Gen5 power connector, which allows you to power the graphics card with a single cable. We expect PSUs with this connector to be available in October. However, the RTX 4090 will come with a power adapter that allows you to use a power supply with your existing 8-pin PCIe connectors.

The RTX 4080 also uses a PCIe Gen5 power connector and also ships with a power adapter that supports PCIe 8-pin connectors.

Please note that the existing 12-pin cables and adapters from the RTX 30 Series generation are not compatible with RTX 40 Series graphics cards.

Q: Why isn’t DisplayPort 2.0 listed on the spec sheet?

The current DisplayPort 1.4 standard already supports 8K at 60Hz. Support for DisplayPort 2.0 in consumer gaming displays is still a ways away.

Q: Can someone explain these performance claims in how they relate to gaming? 2-4x faster seems unprecedented. Usually from generation to generation GPU's see a 30-50% increase in performance. Is the claim being made that these cards will at *minimum* double the performance for gaming?

The RTX 4090 achieves up to 2-4x performance through a combination of software and hardware enhancements. We’ve upgraded all three RTX processors - Shader Cores, RT Cores and Tensor Cores. Combined with our new DLSS 3 AI frame generation technology the RTX 4090 delivers up to 2x performance in the latest games and creative applications vs. RTX 3090 Ti. When looking at next generation content that puts a higher workload on the GPU we see up to 4x performance gains. These are not minimum performance gains - they are the gains you can expect to see on the more computationally intensive games and applications.

You can find more performance information here.

NVIDIA DLSS 3

Q: Will DLSS 2.X continue to be improved and supported in future titles?

DLSS 3 consists of 3 technologies – DLSS Frame Generation, DLSS Super Resolution (a.k.a. DLSS 2), and NVIDIA Reflex.

DLSS Frame Generation uses RTX 40 Series high-speed Optical Flow Accelerator to calculate the motion flow that is used for the AI network, then executes the network on 4th Generation Tensor Cores. Support for previous GPU architectures would require further innovation and optimization for the optical flow algorithm and AI model.

DLSS Super Resolution and NVIDIA Reflex will of course remain supported on prior generation hardware, so current GeForce gamers and creators will benefit from games integrating DLSS 3. We continue to research and train the AI for DLSS Super Resolution and will provide model updates for all RTX customers as we have been doing since DLSS’s initial release.

| DLSS 3 Sub-Feature | GPU Hardware Support |
| --- | --- |
| DLSS Frame Generation | GeForce RTX 40 Series GPU |
| DLSS Super Resolution (a.k.a. DLSS 2) | GeForce RTX 20/30/40 Series GPU |
| NVIDIA Reflex | GeForce 900 Series and newer GPU |

Q: DLSS 3.0 looks great and is really impressive on a technical level. Do the improvements in DLSS 3.0 vs 2.0 require engine level updates? Or can DLSS 3.0 be implemented easily in games that already support DLSS 2.0 without much development effort?

DLSS 3 is designed for fast and easy integration. It is already becoming one of our most rapidly adopted technologies with over 35 games and apps coming soon. The first games arrive in October.

DLSS 3 leverages the same integration points as DLSS 2 and NVIDIA Reflex, making upgrades from these existing SDKs easy via a DLSS 3 Streamline plugin.

DLSS 3 is also coming to the world’s most popular game engines, including Unity, Unreal Engine, and Frostbite Engine, making it simple for games based on these engines to switch DLSS 3 on.

Q: How does optical flow fit into the model? If DLSS 2 is spatial reconstruction of the next frame, does it mean we have temporal reconstruction multiple frames ahead? Also, does it enable you to get lower than 25% of the pixels rendered (DLSS performance mode)?

There are two AI models in DLSS 3: DLSS Super Resolution (aka DLSS 2) and DLSS Frame Generation. DLSS Super Resolution boosts frame rate by rendering fewer pixels and then using AI to construct a sharp, higher resolution image. DLSS Frame Generation analyzes sequential frames and motion data from the new Optical Flow Accelerator in GeForce RTX 40 Series GPUs to create additional high quality frames, boosting performance while maintaining great image quality and responsiveness. When DLSS 3 is enabled, the first frame is reconstructed by DLSS Super Resolution, and the following frame is generated by DLSS Frame Generation. In total, DLSS 3 enables you to reconstruct 7/8 of the total displayed pixels. Learn more here.
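The 7/8 figure can be checked with a little arithmetic. The sketch below assumes DLSS Performance mode renders 25% of the output pixels (the figure quoted in the question) and that one generated frame follows each rendered frame, as described above. The function name and parameters are illustrative only and are not part of any NVIDIA SDK.

```python
from fractions import Fraction


def reconstructed_fraction(rendered_share: Fraction, generated_per_rendered: int = 1) -> Fraction:
    """Share of displayed pixels produced by AI rather than rendered natively.

    rendered_share: fraction of a frame's pixels that are rendered
        (assumed 1/4 for DLSS Performance mode).
    generated_per_rendered: generated frames shown per rendered frame
        (assumed 1, per the description above).
    """
    frames_displayed = 1 + generated_per_rendered
    # Only the rendered frame contributes natively rendered pixels.
    rendered_overall = rendered_share / frames_displayed
    return 1 - rendered_overall


print(reconstructed_fraction(Fraction(1, 4)))  # 7/8
```

Under these assumptions, one quarter of every second frame is rendered, which is 1/8 of all displayed pixels, leaving 7/8 reconstructed.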

NVIDIA RTX Remix & Portal with RTX

Q: Portal with RTX was my favorite part of the event today. Huge fan of RTX implementations in older games. Can we expect other similar projects in the future?

We are glad you like Portal with RTX! NVIDIA RTX Remix is the modding platform used to develop Portal with RTX and will be available as a free toolkit for the community to remaster similar games - or to continue to build on Portal with RTX. We’re excited to see what the community will come up with! We don’t have anything further to announce on NVIDIA Lightspeed Studio projects at this time.

Q: Is RTX Remix locked to 40xx cards?

No. Performance aside, RTX Mods created with RTX Remix (including Portal with RTX) are expected to run on GPUs that support Vulkan Ray Tracing. The RTX Remix creator toolkit will be supported on 8GB+ RTX GPUs.

That said, RTX 40 Series cards with DLSS 3 will deliver the best performance for RTX Remix and RTX Mods. Minimum and recommended GPUs will be provided closer to RTX Remix beta release.

Q: In the Elder Scrolls III: Morrowind RTX Remix showcase, what work is being done by AI and what is being done by artists?

We’ve seen some confusion on this topic so let me clear it up. RTX Remix includes AI texture tools for upscaling textures by 4X and converting them to physically based materials. Whenever we show a scene simply processed by the AI texture tools, we label it as “AI Enhanced”. In our view, AI is helpful at getting a modder started on their remaster. But to achieve an RTX On quality remaster, we encourage modders to hand build the more important assets to achieve their artistic vision.

With both Portal with RTX and The Elder Scrolls III: Morrowind, NVIDIA’s team used Omniverse’s ecosystem of connected creator applications like Adobe Substance, Autodesk Maya and Blender to further enhance most of the assets. In our trailer, we use “RTX On” to signal when we are showing a remastered scene, complete with ray tracing, RT-ready custom assets, and DLSS 3. To see how we added RTX to Morrowind, check out our explainer video.

Q: NVIDIA Remix surely seems wonderful news for the modding space, how broad will be its applicability? Will every game be able to support it, or will it be limited to just some chosen games?

Initially, we plan to ship with support for DirectX 8 and 9 games that utilize a fixed function graphics pipeline. Game compatibility may vary by title – more information will be provided closer to beta release. Definitely let us know which DirectX 8 and 9 games you are excited about modding!

Q: How is it possible to inject a modded scene back into the game? All these new objects, lighting sources. They need to interact with game engine/NPCs/player characters somehow, but how?

The game engine sends commands to the DirectX runtime, and it’s these commands that instruct the GPU to render the NPCs and player characters correctly. RTX Remix intercepts those commands from the application before they reach the GPU and alters them based on the content creator’s intent, as expressed in the RTX Remix toolkit.
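As a rough illustration of the interception idea, here is a toy sketch in Python. It is not the RTX Remix API or its actual design: `DrawCommand`, `FakeGpu`, `InterceptLayer`, and the texture names are all hypothetical stand-ins, used only to show a layer sitting between the game and the GPU and rewriting commands in flight.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class DrawCommand:
    """Hypothetical stand-in for a draw call issued by the game."""
    mesh: str
    texture: str


class FakeGpu:
    """Hypothetical backend that just records what it is asked to draw."""

    def __init__(self) -> None:
        self.submitted: list[DrawCommand] = []

    def submit(self, cmd: DrawCommand) -> None:
        self.submitted.append(cmd)


class InterceptLayer:
    """Sits between the game and the backend and swaps assets in flight."""

    def __init__(self, backend: FakeGpu, texture_replacements: dict[str, str]) -> None:
        self.backend = backend
        self.texture_replacements = texture_replacements

    def submit(self, cmd: DrawCommand) -> None:
        # Replace the texture if the modder supplied a remastered one;
        # otherwise forward the command unchanged.
        new_texture = self.texture_replacements.get(cmd.texture, cmd.texture)
        self.backend.submit(replace(cmd, texture=new_texture))


gpu = FakeGpu()
layer = InterceptLayer(gpu, {"wall_lowres": "wall_remastered"})

# The game is unaware of the layer and issues its usual commands.
layer.submit(DrawCommand(mesh="wall", texture="wall_lowres"))
layer.submit(DrawCommand(mesh="npc", texture="npc_skin"))
print(gpu.submitted)
```

The game logic never changes in this sketch. Only what reaches the backend differs, which is why NPCs and player characters keep behaving as the engine expects.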

Q: Will RTX Remix allow the integration of DLSS 2 and FSR 2.1, or is the upscaling limited to DLSS 3?

Currently, RTX Remix supports DLSS 3 features, which include DLSS Frame Generation, DLSS Super Resolution (aka DLSS 2), and NVIDIA Reflex.

NVIDIA Reflex

Q: What about the raw performance? You did a good job in DLSS and ray tracing, but as a competitive FPS player, I care more about raw performance.

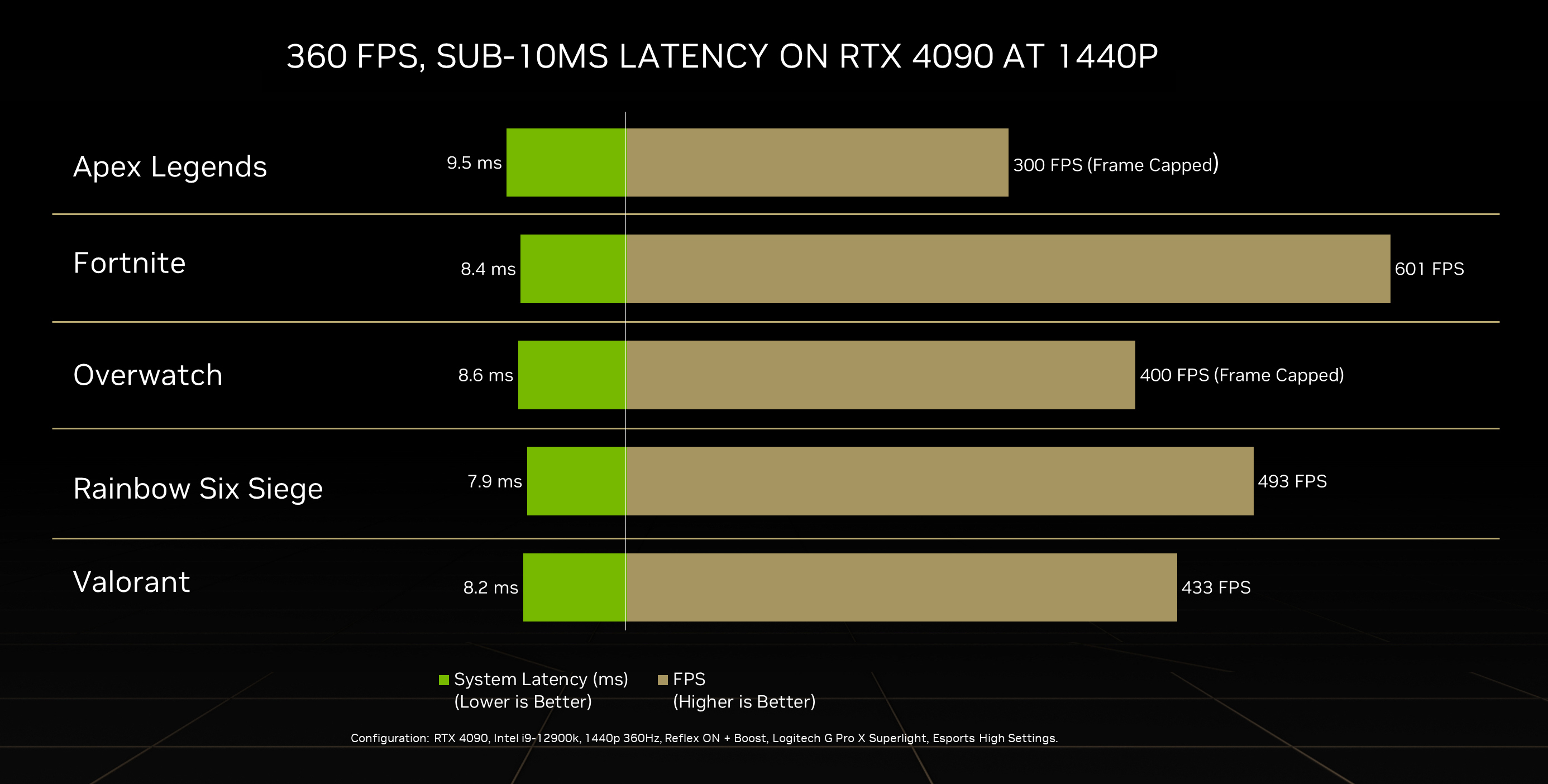

Raw performance received a major uplift this generation as well! RTX 40 Series GPUs enable 1440p competitive gaming at 360+ FPS. I’m playing Valorant consistently over 400 FPS at 1440p. Check out more in our article that was posted today.

Q: A friend of mine some weeks ago was telling me how NVIDIA Reflex is a feature thought for older gpus, is it true? Do older models have the most benefits?

Older GPUs tend to have lower FPS and higher latency, which means there is more latency for NVIDIA Reflex to mitigate.

For example (not real data):

- Old GPU - Reflex OFF 50ms -> Reflex ON 30ms

- New GPU - Reflex OFF 25ms -> Reflex ON 18ms

Both old and new GPUs benefit from NVIDIA Reflex. The percentage savings will just be higher on the older GPU because it has higher base latency.
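To make the comparison concrete, the short sketch below computes the savings for the illustrative numbers above (which, as noted, are not real data). The helper name is made up for this example.

```python
def latency_savings_percent(reflex_off_ms: float, reflex_on_ms: float) -> float:
    """Percentage of latency removed when Reflex is switched on."""
    return (reflex_off_ms - reflex_on_ms) * 100 / reflex_off_ms


# Illustrative figures from the example above, not measurements.
examples = [("Old GPU", 50, 30), ("New GPU", 25, 18)]

for label, off_ms, on_ms in examples:
    saved_ms = off_ms - on_ms
    percent = latency_savings_percent(off_ms, on_ms)
    print(f"{label}: {saved_ms} ms saved ({percent:.0f}%)")
```

With these numbers the older GPU saves 20 ms (40%) and the newer GPU saves 7 ms (28%), so both benefit but the older one gains more in relative terms.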

NVIDIA Broadcast, NVIDIA Studio & NVENC

Q: What would be the benefits of using NVIDIA NVENC over the more traditional x264?

x264 is a software encoder that runs on the CPU, whereas NVENC is a hardware encoder that uses dedicated hardware on NVIDIA GPUs. x264 will utilize part of your CPU, leaving less power to run your games or other apps. NVENC operates on an independent part of the GPU, leaving the CPU and the rest of the GPU free to render the game and apps. Hence, NVENC allows you to maximize the use of your hardware and get more FPS.

On top of that, next gen codecs like AV1 consume a lot of resources and cannot run on a typical CPU. But with NVENC in GeForce RTX 40 Series you can encode AV1 up to 8K60 seamlessly.
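For readers who encode with FFmpeg, the practical difference often comes down to which video encoder is selected. The sketch below only builds the argument list and does not run anything. It assumes an FFmpeg build that includes the `libx264`, `h264_nvenc`, and `av1_nvenc` encoders (the latter needs a recent FFmpeg and an AV1-capable GPU). The function name and file names are placeholders.

```python
def ffmpeg_encode_args(src: str, dst: str, encoder: str) -> list[str]:
    """Build an ffmpeg argument list that re-encodes video with `encoder`.

    encoder: an FFmpeg video encoder name, e.g. "libx264" (CPU, software)
        or "h264_nvenc" / "av1_nvenc" (NVENC, dedicated GPU hardware).
    """
    return ["ffmpeg", "-i", src, "-c:v", encoder, "-c:a", "copy", dst]


# Software encode on the CPU versus hardware encode on NVENC.
cpu_args = ffmpeg_encode_args("gameplay.mkv", "out_x264.mp4", "libx264")
nvenc_args = ffmpeg_encode_args("gameplay.mkv", "out_nvenc.mp4", "h264_nvenc")

print(" ".join(cpu_args))
print(" ".join(nvenc_args))
```

The resulting list could be passed to `subprocess.run` on a machine where FFmpeg and a supported GPU are available. Quality and bitrate options are left out here and would need tuning per use case.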

Q: Any updates to NVDEC? Still reliant on either M1 or Quicksync for 10 bit 422 decode.

GeForce RTX 40 Series GPUs use the same NVIDIA Decoder as the RTX 30 Series: 5th generation NVDEC. There is no support for 10-bit 4:2:2 decode.

Q: Will ShadowPlay benefit from the NVENC improvements and the addition of AV1 encoding?

ShadowPlay has been updated to make use of GeForce RTX 40 Series dual encoders, enabling ShadowPlay to record up to 8K60 HDR in HEVC. AV1 is not currently supported in ShadowPlay.

Q: What are the performance increases like for blender, and other 3D suites?

GeForce RTX 40 Series is up to 2x faster gen-to-gen in offline renderers like Chaos V-Ray or Blender, and paired with DLSS 3 it’s up to 4x faster in real-time renderers like Omniverse, Unreal Engine, or Unity.

Head to GeForce.com to learn more about GeForce RTX 40 Series graphics cards and everything else we announced at our GeForce Beyond keynote.