A recommendation system (or recommender system) is a class of machine learning that uses data to help predict, narrow down, and find what people are looking for among an exponentially growing number of options.

Recommendation System

What Is a Recommendation System?

A recommendation system is an artificial intelligence (AI) algorithm, usually associated with machine learning, that uses big data to suggest or recommend additional products to consumers. These suggestions can be based on various criteria, including past purchases, search history, demographic information, and other factors. Recommender systems are highly useful because they help users discover products and services they might not otherwise have found on their own.

Recommender systems are trained to understand the preferences, previous decisions, and characteristics of people and products using data gathered about their interactions. These include impressions, clicks, likes, and purchases. Because of their capability to predict consumer interests and desires on a highly personalized level, recommender systems are a favorite with content and product providers. They can drive consumers to just about any product or service that interests them, from books to videos to health classes to clothing.

Types of Recommendation Systems

While there are a vast number of recommender algorithms and techniques, most fall into these broad categories: collaborative filtering, content filtering and context filtering.

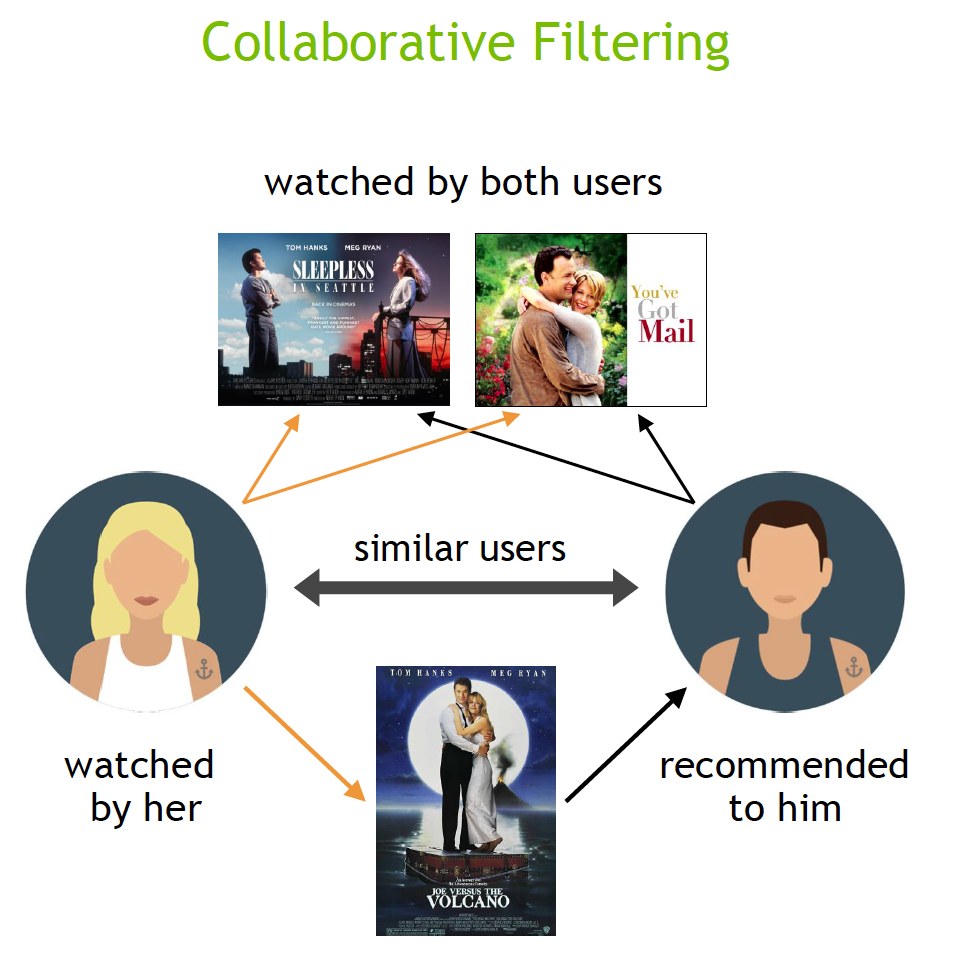

Collaborative filtering algorithms recommend items (this is the filtering part) based on preference information from many users (this is the collaborative part). This approach uses the similarity of user preference behavior: given previous interactions between users and items, recommender algorithms learn to predict future interactions. These recommender systems build a model from a user’s past behavior, such as items purchased previously or ratings given to those items, and from similar decisions by other users. The idea is that if some people have made similar decisions and purchases in the past, like a movie choice, then there is a high probability they will agree on additional future selections. For example, if a collaborative filtering recommender knows you and another user share similar tastes in movies, it might recommend a movie to you that it knows this other user already likes.
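The "similar tastes" idea can be sketched in a few lines of plain Python. This is a minimal illustration of user-based collaborative filtering, not a production algorithm: the users, movie names, and ratings are made up, and cosine similarity with a similarity-weighted score is just one common choice among many.

```python
from math import sqrt

# Hypothetical toy data: user -> {movie: rating on a 1-5 scale}.
ratings = {
    "you":   {"Movie A": 5, "Movie B": 4, "Movie C": 1},
    "alice": {"Movie A": 5, "Movie B": 5, "Movie C": 1, "Movie D": 5},
    "bob":   {"Movie A": 1, "Movie B": 2, "Movie C": 5, "Movie E": 5},
}

def cosine_similarity(a, b):
    """Cosine similarity computed over the items both users have rated."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[i] * b[i] for i in shared)
    norm_a = sqrt(sum(a[i] ** 2 for i in shared))
    norm_b = sqrt(sum(b[i] ** 2 for i in shared))
    return dot / (norm_a * norm_b)

def recommend(user, ratings, top_n=1):
    """Rank items the user has not seen by similarity-weighted ratings."""
    scores = {}
    for other, other_ratings in ratings.items():
        if other == user:
            continue
        sim = cosine_similarity(ratings[user], other_ratings)
        for item, rating in other_ratings.items():
            if item not in ratings[user]:
                scores[item] = scores.get(item, 0.0) + sim * rating
    return sorted(scores, key=scores.get, reverse=True)[:top_n]

print(recommend("you", ratings))
```

Here "alice" rates the shared movies much like "you" do, so her favorite unseen movie outranks the one liked by "bob", whose tastes differ.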

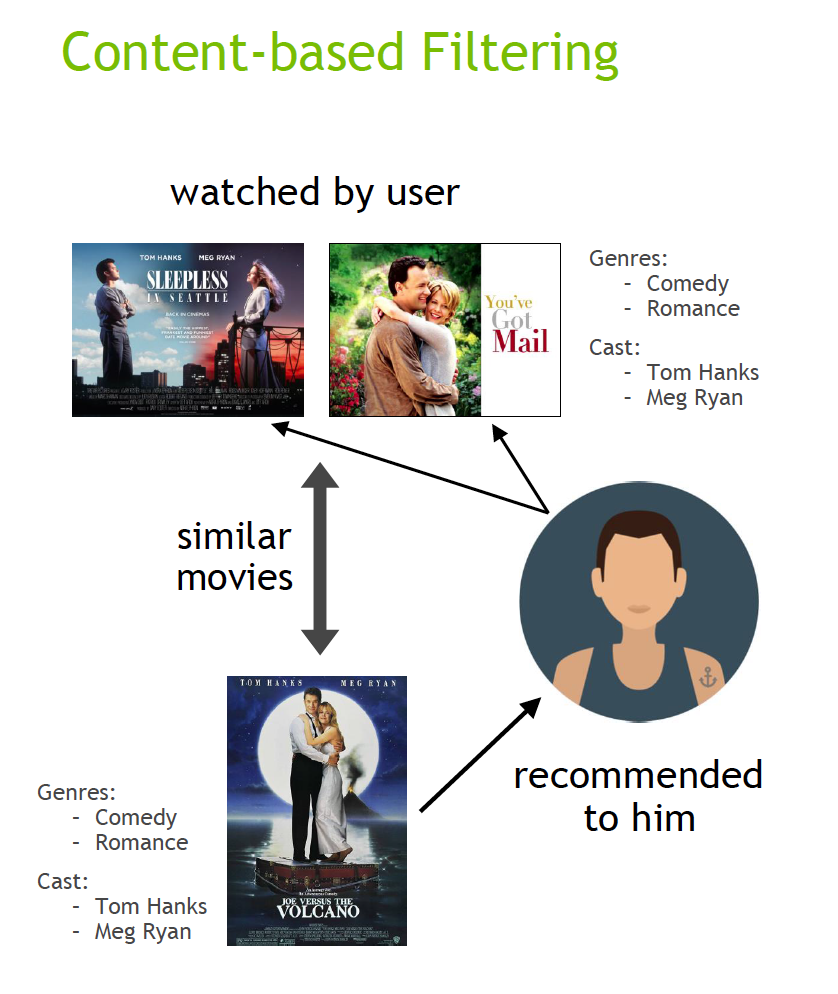

Content filtering, by contrast, uses the attributes or features of an item (this is the content part) to recommend other items similar to the user’s preferences. This approach is based on the similarity of item and user features: given information about a user and the items they have interacted with (e.g. a user’s age, the category of a restaurant’s cuisine, the average review for a movie), it models the likelihood of a new interaction. For example, if a content filtering recommender sees you liked the movies You’ve Got Mail and Sleepless in Seattle, it might recommend another movie to you with the same genres and/or cast, such as Joe Versus the Volcano.
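As a rough sketch of the movie example, content filtering can be approximated by comparing feature tags. The tags below are illustrative, "Example Action Movie" is a made-up catalog entry added for contrast, and Jaccard similarity is an assumption chosen for simplicity rather than the method any particular system uses.

```python
# Illustrative feature tags (genre and cast) for each movie.
# "Example Action Movie" is a hypothetical entry used only for contrast.
catalog = {
    "You've Got Mail":        {"romance", "comedy", "Tom Hanks", "Meg Ryan"},
    "Sleepless in Seattle":   {"romance", "comedy", "Tom Hanks", "Meg Ryan"},
    "Joe Versus the Volcano": {"romance", "comedy", "Tom Hanks", "Meg Ryan"},
    "Example Action Movie":   {"action", "thriller"},
}

def jaccard(a, b):
    """Share of features two sets have in common."""
    return len(a & b) / len(a | b)

def recommend_similar(liked, catalog, top_n=1):
    """Rank unseen movies by how closely their features match the liked ones."""
    # The user profile is the union of features of everything they liked.
    profile = set().union(*(catalog[title] for title in liked))
    candidates = [title for title in catalog if title not in liked]
    ranked = sorted(candidates,
                    key=lambda title: jaccard(profile, catalog[title]),
                    reverse=True)
    return ranked[:top_n]

print(recommend_similar(["You've Got Mail", "Sleepless in Seattle"], catalog))
```

Note that no other user's behavior is involved: the recommendation comes entirely from item attributes and one user's history.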

Hybrid recommender systems combine the advantages of the types above to create a more comprehensive recommending system.

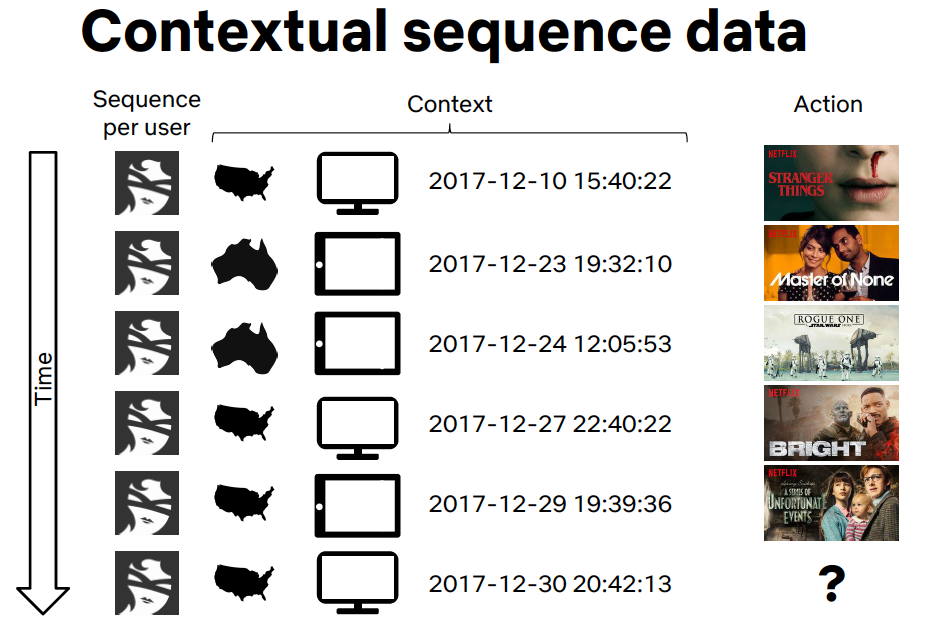

Context filtering includes users’ contextual information in the recommendation process. Netflix spoke at NVIDIA GTC about making better recommendations by framing a recommendation as a contextual sequence prediction. This approach uses a sequence of contextual user actions, plus the current context, to predict the probability of the next action. In the Netflix example, given one sequence for each user—the country, device, date, and time when they watched a movie—they trained a model to predict what to watch next.

Use Cases and Applications

E-Commerce & Retail: Personalized Merchandising

Imagine that a user has already purchased a scarf. Why not offer a matching hat so the look will be complete? This feature is often implemented by means of AI-based algorithms as “Complete the look” or “You might also like” sections in e-commerce platforms like Amazon, Walmart, Target, and many others.

On average, an intelligent recommender system delivers a 22.66% lift in conversion rates for web products.

Media & Entertainment: Personalized Content

AI-based recommender engines can analyze an individual’s purchase behavior and detect patterns that help provide them with the content suggestions most likely to match their interests. This is what Google and Facebook actively apply when recommending ads, or what Netflix does behind the scenes when recommending movies and TV shows.

Personalized Banking

A mass-market product that is consumed digitally by millions, banking is a prime candidate for recommendations. Knowing a customer’s detailed financial situation and their past preferences, coupled with data from thousands of similar users, is quite powerful.

Benefits of Recommendation Systems

Recommender systems are a critical component driving personalized user experiences, deeper engagement with customers, and powerful decision support tools in retail, entertainment, healthcare, finance, and other industries. On some of the largest commercial platforms, recommendations account for as much as 30% of the revenue. A 1% improvement in the quality of recommendations can translate into billions of dollars in revenue.

Companies implement recommender systems for a variety of reasons, including:

- Improving retention. By continuously catering to the preferences of users and customers, businesses are more likely to retain them as loyal subscribers or shoppers. When a customer senses that they’re truly understood by a brand and not just having information randomly thrown at them, they’re far more likely to remain loyal and continue shopping at your site.

- Increasing sales. Various research studies show increases in upselling revenue of 10 to 50% resulting from accurate ‘you might also like’ product recommendations. Sales can be increased with recommendation system strategies as simple as adding matching product recommendations to a purchase confirmation; collecting information from abandoned electronic shopping carts; sharing information on ‘what customers are buying now’; and sharing other buyers’ purchases and comments.

- Helping to form customer habits and trends. Consistently serving up accurate and relevant content can trigger cues that build strong habits and influence usage patterns in customers.

- Speeding up the pace of work. Analysts and researchers can save as much as 80% of their time when served tailored suggestions for resources and other materials necessary for further research.

- Boosting cart value. Companies with tens of thousands of items for sale would be challenged to hard-code product suggestions for such an inventory. By using various means of filtering, these e-commerce titans can find just the right time to suggest new products customers are likely to buy, either on their site or through email or other means.

How Recommenders Work

How a recommender model makes recommendations will depend on the type of data you have. If you only have data about which interactions have occurred in the past, you’ll probably be interested in collaborative filtering. If you have data describing the user and items they have interacted with (e.g. a user’s age, the category of a restaurant’s cuisine, the average review for a movie), you can model the likelihood of a new interaction given these properties at the current moment by adding content and context filtering.

Matrix Factorization for Recommendation

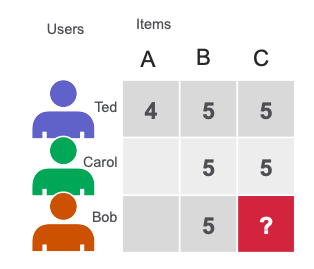

Matrix factorization (MF) techniques are the core of many popular algorithms, including word embedding and topic modeling, and have become a dominant methodology within collaborative-filtering-based recommendation. MF can be used to calculate the similarity in users’ ratings or interactions to provide recommendations. In the simple user-item matrix below, Ted and Carol like movies B and C. Bob likes movie B. To recommend a movie to Bob, matrix factorization calculates that users who liked B also liked C, so C is a possible recommendation for Bob.

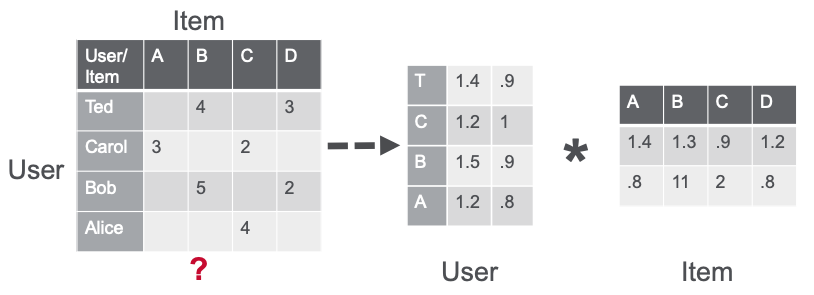
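The reasoning in the Ted, Carol, and Bob example can be traced with a simple co-occurrence count. This sketch only mirrors the intuition ("users who liked B also liked C"); it does not perform matrix factorization itself, and the function name is hypothetical.

```python
# Likes taken from the example in the text.
likes = {
    "Ted":   {"B", "C"},
    "Carol": {"B", "C"},
    "Bob":   {"B"},
}

def co_liked_candidates(user, likes):
    """Count unseen items liked by users who share at least one liked item."""
    counts = {}
    for other, items in likes.items():
        if other == user or not (items & likes[user]):
            continue  # skip the user themselves and users with no overlap
        for item in items - likes[user]:
            counts[item] = counts.get(item, 0) + 1
    return sorted(counts.items(), key=lambda pair: pair[1], reverse=True)

print(co_liked_candidates("Bob", likes))
```

Both Ted and Carol share movie B with Bob and also like C, so C is the only candidate and is supported by two users.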

Matrix factorization using the alternating least squares (ALS) algorithm approximates the sparse u × i user-item rating matrix as the product of two dense matrices: user and item factor matrices of size u × f and f × i (where u is the number of users, i the number of items, and f the number of latent features). The factor matrices represent latent or hidden features which the algorithm tries to discover. One matrix tries to describe the latent or hidden features of each user, and the other tries to describe the latent properties of each item (each movie, in the example above). For each user and for each item, the ALS algorithm iteratively learns f numeric “factors” that represent the user or item. In each iteration, the algorithm alternately fixes one factor matrix and optimizes the other, and this process continues until it converges.
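The alternating procedure can be sketched with NumPy. This is a small, unoptimized illustration under stated assumptions: zeros in the toy matrix mean "not rated", a ridge penalty `reg` is added to keep each solve well-posed, and a fixed iteration count stands in for a convergence check. The ratings, the function name `als`, and its parameters are all hypothetical.

```python
import numpy as np

def als(R, mask, f=2, reg=0.1, iters=20, seed=0):
    """Approximate R (u x i) as U (u x f) @ V (f x i) using observed entries only."""
    rng = np.random.default_rng(seed)
    u, i = R.shape
    U = rng.normal(scale=0.1, size=(u, f))
    V = rng.normal(scale=0.1, size=(f, i))
    ridge = reg * np.eye(f)
    for _ in range(iters):
        # Fix V and solve a small regularized least-squares problem per user.
        for a in range(u):
            obs = mask[a]                      # items this user has rated
            Vo = V[:, obs]                     # f x n_observed
            U[a] = np.linalg.solve(Vo @ Vo.T + ridge, Vo @ R[a, obs])
        # Fix U and solve the same kind of problem per item.
        for b in range(i):
            obs = mask[:, b]                   # users who rated this item
            Uo = U[obs]                        # n_observed x f
            V[:, b] = np.linalg.solve(Uo.T @ Uo + ridge, Uo.T @ R[obs, b])
    return U, V

# Made-up ratings; 0 marks a missing rating.
R = np.array([[5, 4, 0, 1],
              [4, 0, 5, 1],
              [1, 1, 0, 5],
              [0, 1, 2, 4]], dtype=float)
mask = R > 0
U, V = als(R, mask)
predicted = U @ V          # dense matrix, including estimates for missing entries
print(np.round(predicted, 1))
```

Each inner solve involves only an f × f system, which is why fixing one factor matrix makes the other easy to optimize.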

CuMF is an NVIDIA® CUDA®-based matrix factorization library that optimizes the alternating least squares (ALS) method to solve very large-scale MF problems. CuMF uses a set of techniques to maximize performance on single and multiple GPUs. These techniques include smart access to sparse data that leverages the GPU memory hierarchy, data parallelism used in conjunction with model parallelism to minimize the communication overhead among GPUs, and a novel topology-aware parallel reduction scheme.

Deep Neural Network Models for Recommendation

There are different variations of artificial neural networks (ANNs), such as the following:

- ANNs where information is only fed forward from one layer to the next are called feedforward neural networks. Multilayer perceptrons (MLPs) are a type of feedforward ANN consisting of at least three layers of nodes: an input layer, a hidden layer and an output layer. MLPs are flexible networks that can be applied to a variety of scenarios.

- Convolutional neural networks (CNNs) are the image crunchers used to identify objects.

- Recurrent neural networks (RNNs) are the mathematical engines used to parse language patterns and sequenced data.

Deep learning (DL) recommender models build upon existing techniques such as factorization to model the interactions between variables and embeddings to handle categorical variables. An embedding is a learned vector of numbers representing entity features so that similar entities (users or items) are close to each other in the vector space. For example, a deep learning approach to collaborative filtering learns the user and item embeddings (latent feature vectors) based on user and item interactions with a neural network.

DL techniques also tap into the vast and rapidly growing body of novel network architectures and optimization algorithms to train on large amounts of data, use the power of deep learning for feature extraction, and build more expressive models.

Current DL-based models for recommender systems include DLRM, Wide and Deep (W&D), Neural Collaborative Filtering (NCF), and Variational Autoencoder (VAE). Together with BERT (for NLP), they form part of the NVIDIA GPU-accelerated DL model portfolio, which covers a wide range of network architectures and applications in many different domains beyond recommender systems, including image, text, and speech analysis. These models are designed and optimized for training with TensorFlow and PyTorch.

Neural Collaborative Filtering

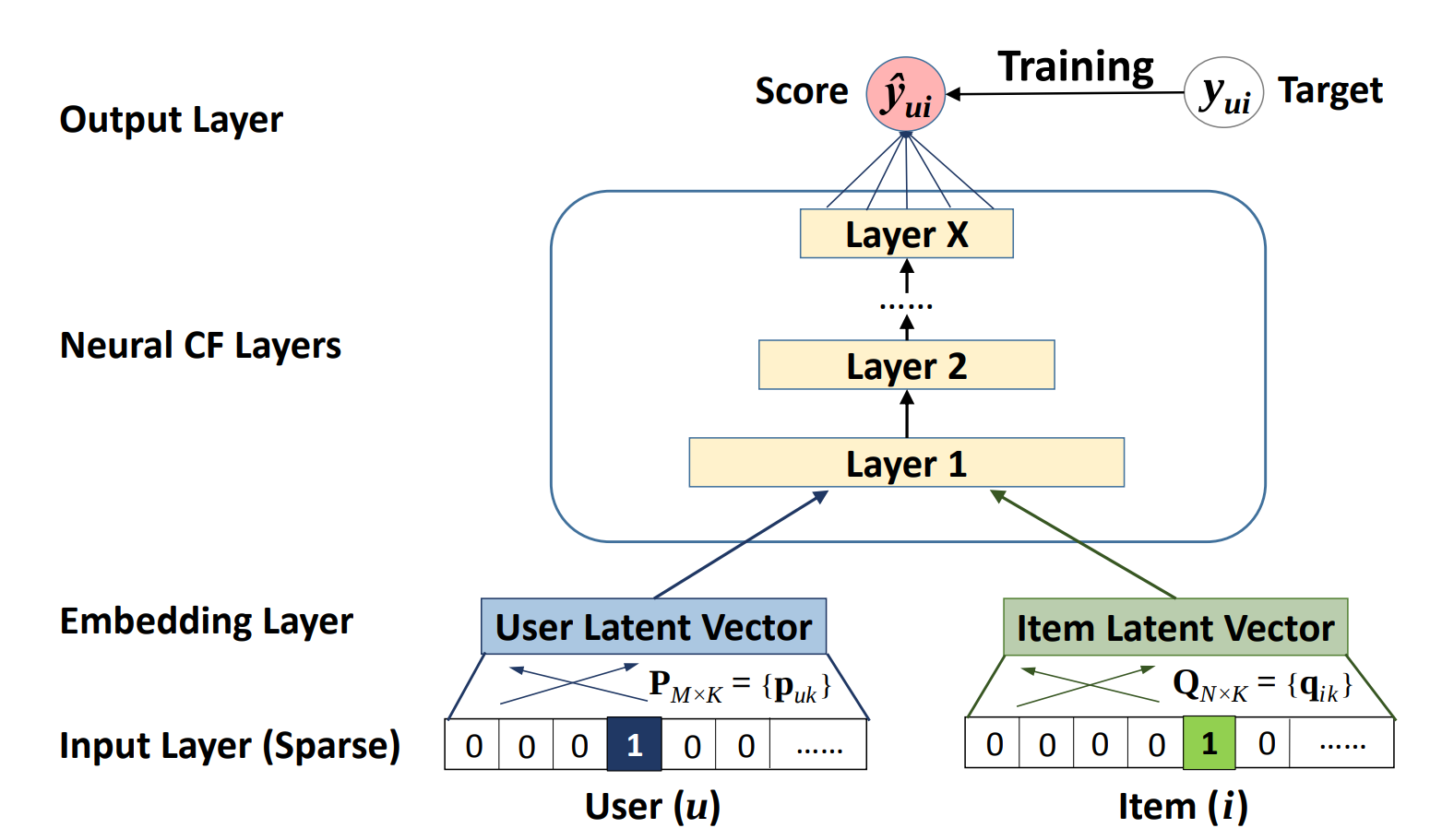

The Neural Collaborative Filtering (NCF) model is a neural network that provides collaborative filtering based on user and item interactions. The model treats matrix factorization from a non-linearity perspective. The TensorFlow implementation of NCF takes in a sequence of (user ID, item ID) pairs as inputs, then feeds them separately into a matrix factorization step (where the embeddings are multiplied) and into a multilayer perceptron (MLP) network.

The outputs of the matrix factorization and the MLP network are then combined and fed into a single dense layer that predicts whether the input user is likely to interact with the input item.
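The data flow described above can be traced with a forward pass in NumPy. This is a sketch with untrained random weights, not the NVIDIA implementation: the layer sizes, the single hidden layer, the ReLU activation, and all variable names are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_users, n_items, dim, hidden = 4, 6, 8, 16   # hypothetical sizes

def init(*shape):
    """Small random weights; a real model would learn these during training."""
    return rng.normal(scale=0.1, size=shape)

# Each branch has its own user and item embedding tables.
mf_user, mf_item = init(n_users, dim), init(n_items, dim)
mlp_user, mlp_item = init(n_users, dim), init(n_items, dim)
W_hidden = init(2 * dim, hidden)   # MLP hidden layer
w_out = init(dim + hidden)         # final dense layer

def ncf_forward(user_ids, item_ids):
    # Matrix factorization branch: embeddings are multiplied element-wise.
    mf = mf_user[user_ids] * mf_item[item_ids]                    # (batch, dim)
    # MLP branch: embeddings are concatenated and passed through a ReLU layer.
    x = np.concatenate([mlp_user[user_ids], mlp_item[item_ids]], axis=1)
    mlp = np.maximum(0.0, x @ W_hidden)                           # (batch, hidden)
    # Both outputs are combined and fed into a single dense layer.
    logit = np.concatenate([mf, mlp], axis=1) @ w_out             # (batch,)
    return 1.0 / (1.0 + np.exp(-logit))                           # interaction probability

probs = ncf_forward(np.array([0, 1, 3]), np.array([2, 2, 5]))
print(probs)
```

Because the weights are random, the printed probabilities are meaningless; the point is the shape of the computation for a batch of (user ID, item ID) pairs.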

Variational Autoencoder for Collaborative Filtering

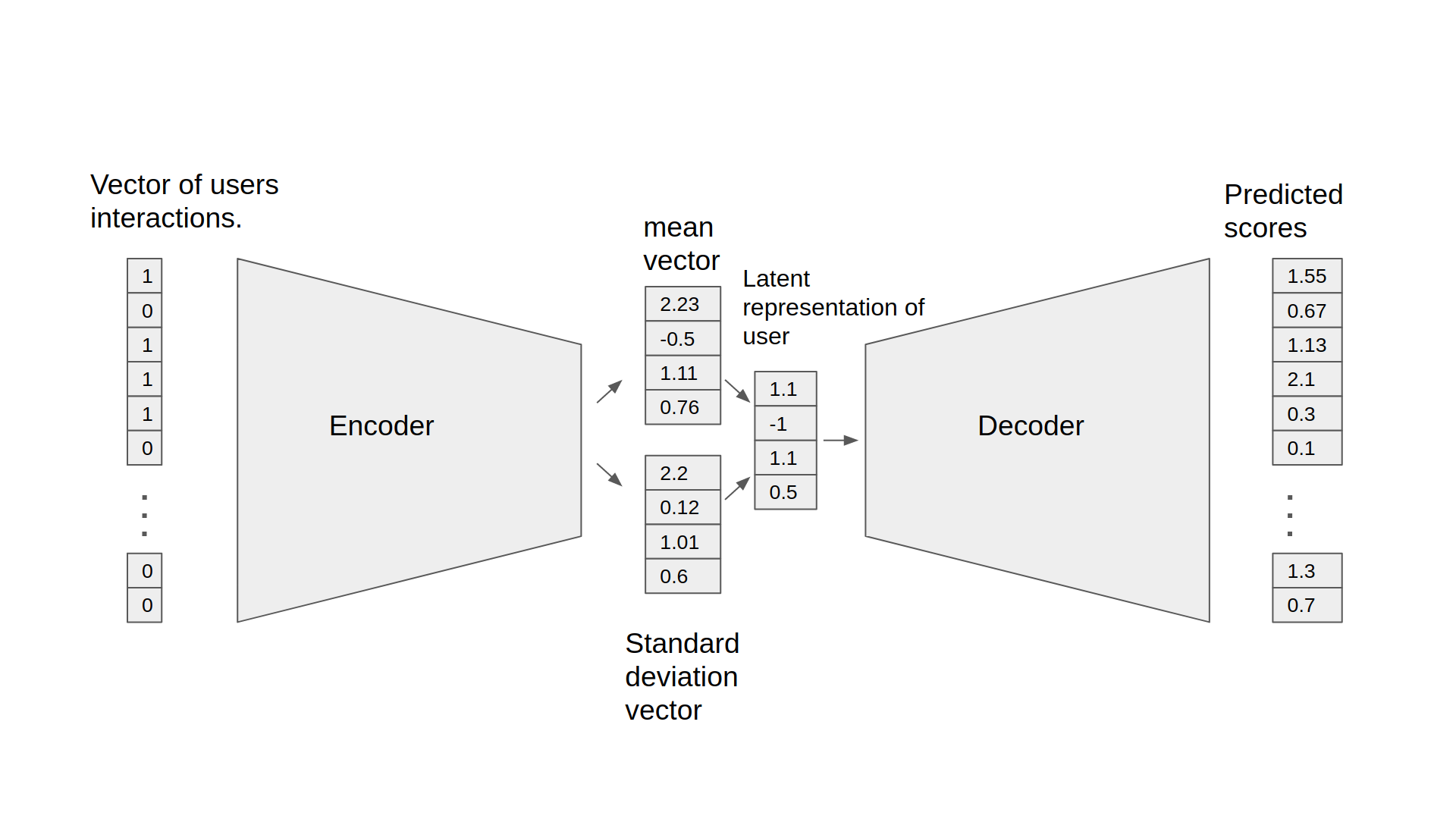

An autoencoder neural network reconstructs the input layer at the output layer by using the representation obtained in the hidden layer. An autoencoder for collaborative filtering learns a non-linear representation of a user-item matrix and reconstructs it by determining missing values.

The NVIDIA GPU-accelerated Variational Autoencoder for Collaborative Filtering (VAE-CF) is an optimized implementation of the architecture first described in Variational Autoencoders for Collaborative Filtering. VAE-CF is a neural network that provides collaborative filtering based on user and item interactions. The training data for this model consists of pairs of user-item IDs for each interaction between a user and an item.

The model consists of two parts: the encoder and the decoder. The encoder is a feedforward, fully connected neural network that transforms the input vector, containing the interactions for a specific user, into an n-dimensional variational distribution. This variational distribution is used to obtain a latent feature representation of a user (or embedding). This latent representation is then fed into the decoder, which is also a feedforward network with a similar structure to the encoder. The result is a vector of item interaction probabilities for a particular user.
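A forward pass through such an encoder and decoder might look like the following NumPy sketch. The weights are random and untrained, the layer sizes are made up, and two choices are assumptions rather than details given in the text: the latent vector is sampled with the usual reparameterization trick, and a softmax turns decoder outputs into probabilities over items.

```python
import numpy as np

rng = np.random.default_rng(0)
n_items, hidden, latent = 6, 8, 3   # hypothetical sizes

def init(*shape):
    return rng.normal(scale=0.1, size=shape)

W_enc = init(n_items, hidden)                              # encoder hidden layer
W_mu, W_logvar = init(hidden, latent), init(hidden, latent)
W_dec1, W_dec2 = init(latent, hidden), init(hidden, n_items)

def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()

def vae_forward(x, sample=True):
    """Map one user's interaction vector to probabilities over all items."""
    h = np.tanh(x @ W_enc)
    mu, logvar = h @ W_mu, h @ W_logvar                    # variational distribution
    eps = rng.standard_normal(latent) if sample else np.zeros(latent)
    z = mu + np.exp(0.5 * logvar) * eps                    # latent user representation
    logits = np.tanh(z @ W_dec1) @ W_dec2                  # decoder mirrors the encoder
    return softmax(logits)

# 1 marks an item the (made-up) user has interacted with.
x = np.array([1, 0, 1, 0, 0, 1], dtype=float)
probs = vae_forward(x)
print(np.round(probs, 3))
```

At recommendation time, items the user has not yet interacted with can be ranked by these probabilities.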

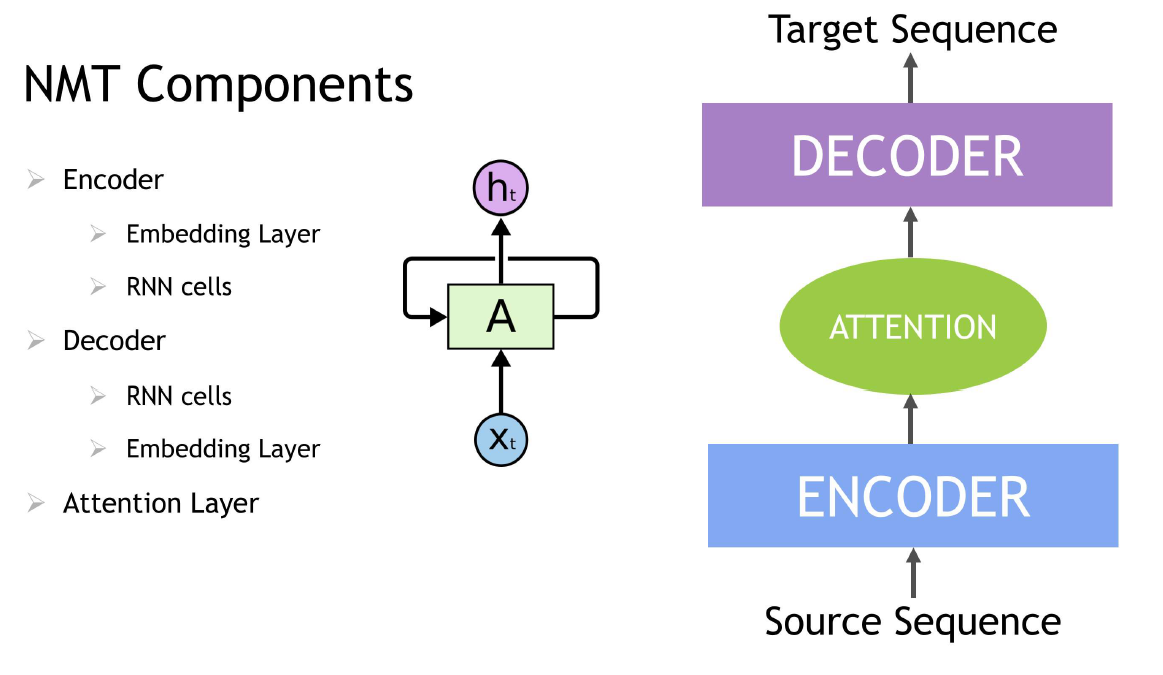

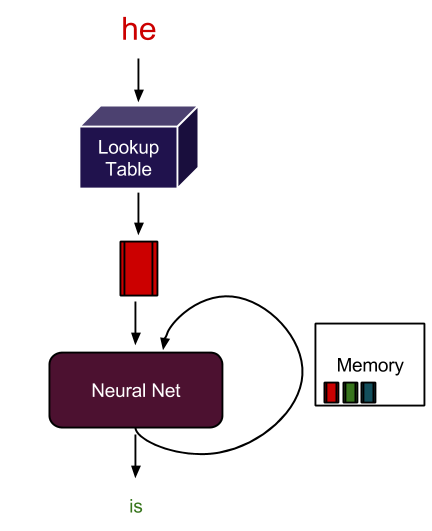

Contextual Sequence Learning

A recurrent neural network (RNN) is a class of neural network that has memory or feedback loops that allow it to better recognize patterns in data. RNNs solve difficult tasks that deal with context and sequences, such as natural language processing, and are also used for contextual sequence recommendations. What distinguishes sequence learning from other tasks is the need to use models with an active data memory, such as LSTMs (long short-term memory networks) or GRUs (gated recurrent units), to learn temporal dependence in input data. This memory of past input is crucial for successful sequence learning. Transformer deep learning models, such as BERT (Bidirectional Encoder Representations from Transformers), are an alternative to RNNs that apply an attention technique: they parse a sentence by focusing attention on the most relevant words that come before and after each word. Transformer-based deep learning models don’t require sequential data to be processed in order, allowing for much more parallelization and shorter training times on GPUs than RNNs.

In an NLP application, input text is converted into word vectors using techniques such as word embedding. With word embedding, each word in the sentence is translated into a set of numbers before being fed into RNN variants, Transformer, or BERT to understand context. These numbers change over time while the neural net trains itself, encoding unique properties such as the semantics and contextual information for each word, so that similar words are close to each other in this number space, and dissimilar words are far apart. These DL models provide an appropriate output for a specific language task, such as next-word prediction or text summarization, in which the model produces an output sequence.

Session context-based recommendations apply the advances in sequence modeling from deep learning and NLP to recommendations. RNN models trained on the sequence of user events in a session (e.g. products viewed, date and time of interactions) learn to predict the next item(s) in a session. User-item interactions in a session are embedded similarly to words in a sentence. For example, movies viewed are translated into a set of numbers before being fed into a sequence model such as an LSTM, GRU, or Transformer to understand context.
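To make the analogy with words in a sentence concrete, here is a NumPy sketch that runs a session through a single GRU cell and scores every item as the possible next one. All weights are random and untrained, the sizes are made up, and scoring by a dot product with the item embeddings followed by a softmax is an assumption chosen for brevity.

```python
import numpy as np

rng = np.random.default_rng(0)
n_items, dim = 5, 4   # hypothetical sizes

item_emb = rng.normal(scale=0.1, size=(n_items, dim))
# GRU weights: W* act on the input, U* on the previous hidden state.
Wz, Wr, Wh, Uz, Ur, Uh = (rng.normal(scale=0.1, size=(dim, dim)) for _ in range(6))

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gru_step(x, h):
    z = sigmoid(x @ Wz + h @ Uz)              # update gate
    r = sigmoid(x @ Wr + h @ Ur)              # reset gate
    h_new = np.tanh(x @ Wh + (r * h) @ Uh)    # candidate state
    return (1.0 - z) * h + z * h_new          # blend old memory with new input

def next_item_probs(session):
    """Probability of each item being next, given item IDs viewed in order."""
    h = np.zeros(dim)
    for item_id in session:
        h = gru_step(item_emb[item_id], h)    # embed the item, update the memory
    logits = item_emb @ h                     # score every item against the session state
    e = np.exp(logits - logits.max())
    return e / e.sum()

probs = next_item_probs([0, 3, 1])
print(np.round(probs, 3))
```

The hidden state `h` plays the role of the session memory: it is updated once per event, so the order of events affects the prediction.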

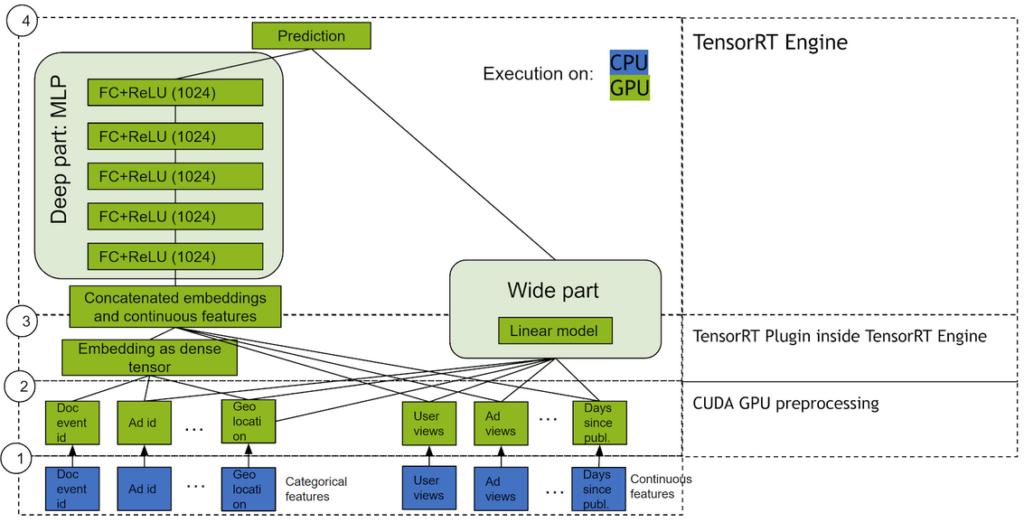

Wide & Deep

Wide & Deep refers to a class of networks that use two parts working in parallel, a wide model and a deep model, whose outputs are summed to create an interaction probability. The wide model is a generalized linear model of features together with their transforms. The deep model is a dense neural network (DNN): a series of five hidden MLP layers of 1024 neurons each, beginning with a dense embedding of features. Categorical variables are embedded into continuous vector spaces before being fed to the DNN via learned or user-determined embeddings.
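The two parallel parts can be sketched as follows in NumPy. This is a deliberately tiny, untrained illustration: it uses two small hidden layers instead of five layers of 1024 neurons, a single categorical feature, and a sigmoid over the summed outputs. The sizes and names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n_wide, n_categories, emb_dim, hidden = 5, 10, 4, 8   # hypothetical sizes

def init(*shape):
    return rng.normal(scale=0.1, size=shape)

w_wide = init(n_wide)                    # wide part: linear weights
emb = init(n_categories, emb_dim)        # deep part: embedding table
W1, W2 = init(emb_dim, hidden), init(hidden, hidden)
w_deep = init(hidden)

def wide_and_deep(wide_x, cat_ids):
    # Wide model: a linear model over raw and transformed features.
    wide_logit = wide_x @ w_wide                          # (batch,)
    # Deep model: embed the categorical feature, then apply MLP layers.
    h = np.maximum(0.0, emb[cat_ids] @ W1)
    h = np.maximum(0.0, h @ W2)
    deep_logit = h @ w_deep                               # (batch,)
    # The two outputs are summed and squashed into a probability.
    return 1.0 / (1.0 + np.exp(-(wide_logit + deep_logit)))

wide_x = rng.normal(size=(3, n_wide))    # made-up wide features for 3 examples
cat_ids = np.array([1, 4, 9])            # made-up category IDs
probs = wide_and_deep(wide_x, cat_ids)
print(probs)
```

Because both parts feed one output, a real model would train them jointly so that each can compensate for what the other misses.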

What makes this model so successful for recommendation tasks is that it provides two avenues of learning patterns in the data, “deep” and “shallow”. The complex, nonlinear DNN is capable of learning rich representations of relationships in the data and generalizing to similar items via embeddings, but needs to see many examples of these relationships in order to do so well. The linear piece, on the other hand, is capable of “memorizing” simple relationships that may only occur a handful of times in the training set.

In combination, these two representation channels often end up providing more modeling power than either on its own. NVIDIA has worked with many industry partners who reported improvements in offline and online metrics by using Wide & Deep as a replacement for more traditional machine learning models.

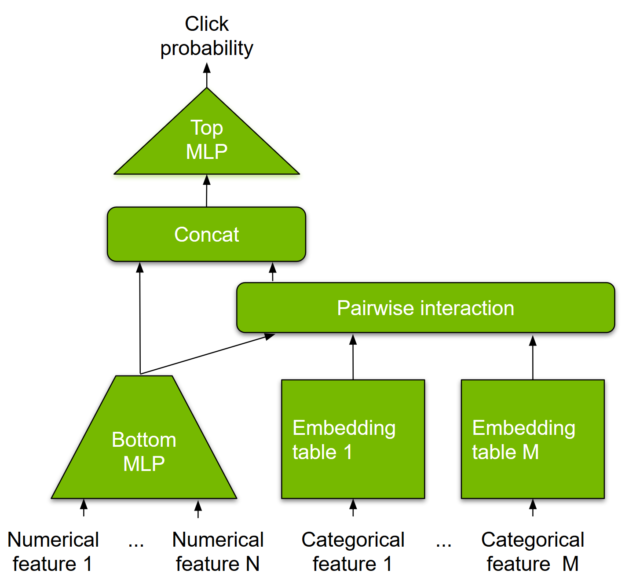

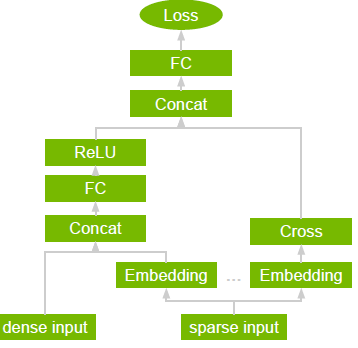

DLRM

DLRM is a DL-based model for recommendations introduced by Facebook research. It’s designed to make use of both categorical and numerical inputs that are usually present in recommender system training data. To handle categorical data, embedding layers map each category to a dense representation before it is fed into multilayer perceptrons (MLPs). Numerical features can be fed directly into an MLP.

At the next level, second-order interactions of different features are computed explicitly by taking the dot product between all pairs of embedding vectors and processed dense features. Those pairwise interactions are fed into a top-level MLP to compute the likelihood of interaction between a user and item pair.
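The explicit second-order interaction step is easy to show in isolation. The sketch below computes the dot product for every pair of feature vectors in one example; the vectors are made-up values, the function name is hypothetical, and the bottom and top MLPs are omitted.

```python
import numpy as np
from itertools import combinations

def pairwise_dot_interactions(vectors):
    """Return the dot product of every unordered pair of feature vectors."""
    return np.array([vectors[a] @ vectors[b]
                     for a, b in combinations(range(len(vectors)), 2)])

# One example with three feature vectors of equal dimension (made-up values).
features = np.array([
    [1.0, 0.0],   # processed dense (numerical) features
    [0.0, 1.0],   # embedding of categorical feature 1
    [1.0, 1.0],   # embedding of categorical feature 2
])

# Pairs are visited in the order (0, 1), (0, 2), (1, 2).
interactions = pairwise_dot_interactions(features)
print(interactions)   # [0. 1. 1.]
```

With n feature vectors there are n(n - 1)/2 such interactions, and each embedding vector takes part as a single unit rather than element by element.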

Compared to other DL-based approaches to recommendation, DLRM differs in two ways. First, it computes the feature interaction explicitly while limiting the order of interaction to pairwise interactions. Second, DLRM treats each embedded feature vector (corresponding to categorical features) as a single unit, whereas other methods (such as Deep and Cross) treat each element in the feature vector as a new unit that should yield different cross terms. These design choices help reduce computational/memory cost while maintaining competitive accuracy.

DLRM forms part of NVIDIA Merlin, a framework for building high-performance, DL-based recommender systems, which we discuss below.

Why Recommendation Systems Run Better with GPUs

Recommender systems are capable of driving engagement on the most popular consumer platforms. As the scale of data grows very large (tens of millions to billions of examples), DL techniques are showing advantages over traditional methods. Consequently, the combination of more sophisticated models and rapid data growth has raised the bar for computational resources.

The mathematical operations underlying many machine learning algorithms are often matrix multiplications. These types of operations are highly parallelizable and can be greatly accelerated using a GPU.
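As a small illustration of why this workload parallelizes, scoring every user against every item with latent factors is a single matrix multiplication in which each output cell is an independent dot product. The NumPy sketch below runs on the CPU and uses made-up sizes; it shows the structure of the computation, not GPU performance.

```python
import numpy as np

rng = np.random.default_rng(0)
user_factors = rng.normal(size=(3, 4))   # 3 users, 4 latent features (made-up sizes)
item_factors = rng.normal(size=(5, 4))   # 5 items

# Loop version: one independent dot product per (user, item) pair.
scores_loop = np.zeros((3, 5))
for u in range(3):
    for i in range(5):
        scores_loop[u, i] = user_factors[u] @ item_factors[i]

# The same computation expressed as one matrix multiplication.
scores_matmul = user_factors @ item_factors.T

print(np.allclose(scores_loop, scores_matmul))
```

Since no cell depends on any other, all of them can in principle be computed at the same time, which is the property parallel hardware exploits.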

A GPU is composed of hundreds of cores that can handle thousands of threads in parallel. Because neural nets are created from large numbers of identical neurons, they are highly parallel by nature. This parallelism maps naturally to GPUs, which can deliver 10X higher performance than CPU-only platforms. For this reason, GPUs have become the platform of choice for training large, complex neural network-based systems, and the parallel nature of inference operations also lends itself well to execution on GPUs.

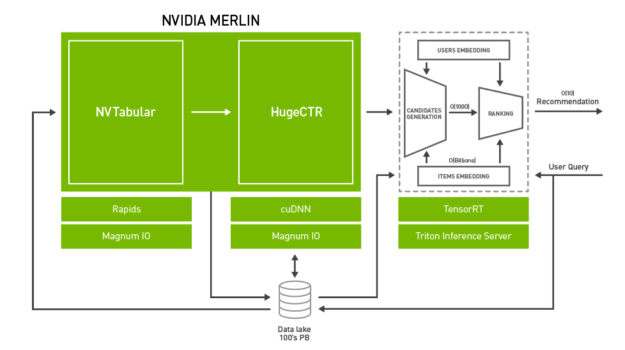

Why the NVIDIA Merlin Recommender System Application Framework?

There are multiple challenges when it comes to performance of large-scale recommender systems solutions, including huge datasets, complex data preprocessing and feature engineering pipelines, and extensive repeated experimentation. To meet the computational demands for large-scale DL recommender systems training and inference, recommender-on-GPU solutions provide fast feature engineering and high training throughput (to enable both fast experimentation and production retraining). They also deliver low latency, high-throughput inference.

NVIDIA Merlin is an open-source application framework and ecosystem created to facilitate all phases of recommender system development, from experimentation to production, accelerated on NVIDIA GPUs.

The framework provides fast feature engineering and preprocessing for operators common to recommendation datasets and high training throughput of several canonical deep learning-based recommender models. These include Wide & Deep, Deep Cross Networks, DeepFM, and DLRM, to enable fast experimentation and production retraining. For production deployment, Merlin also provides low-latency, high-throughput inference. These components combine to provide an end-to-end framework for training and deploying deep learning recommender system models on the GPU that’s both easy to use and highly performant.

Merlin also includes tools for building deep learning-based recommendation systems that provide better predictions than traditional methods. Each stage of the pipeline is optimized to support hundreds of terabytes of data, all accessible through easy-to-use APIs.

NVTabular reduces data preparation time by GPU-accelerating feature transformations and preprocessing.

HugeCTR is a GPU-accelerated deep neural network training framework designed to distribute training across multiple GPUs and nodes. It supports model-parallel embedding tables and data-parallel neural networks and their variants, such as Wide and Deep Learning (WDL), Deep Cross Network (DCN), DeepFM, and Deep Learning Recommendation Model (DLRM).

NVIDIA Triton™ Inference Server and NVIDIA® TensorRT™ accelerate production inference on GPUs for feature transforms and neural network execution.

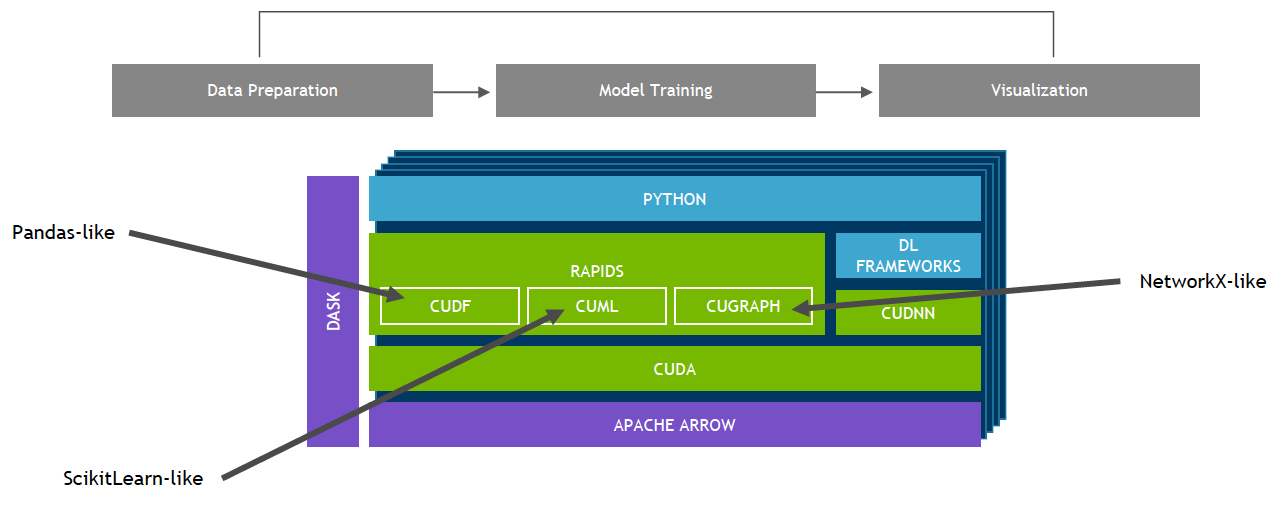

NVIDIA GPU-Accelerated End-to-End Data Science and DL

NVIDIA Merlin is built on top of NVIDIA RAPIDS™. The RAPIDS™ suite of open-source software libraries, built on CUDA, gives you the ability to execute end-to-end data science and analytics pipelines entirely on GPUs, while still using familiar interfaces like the pandas and scikit-learn APIs.

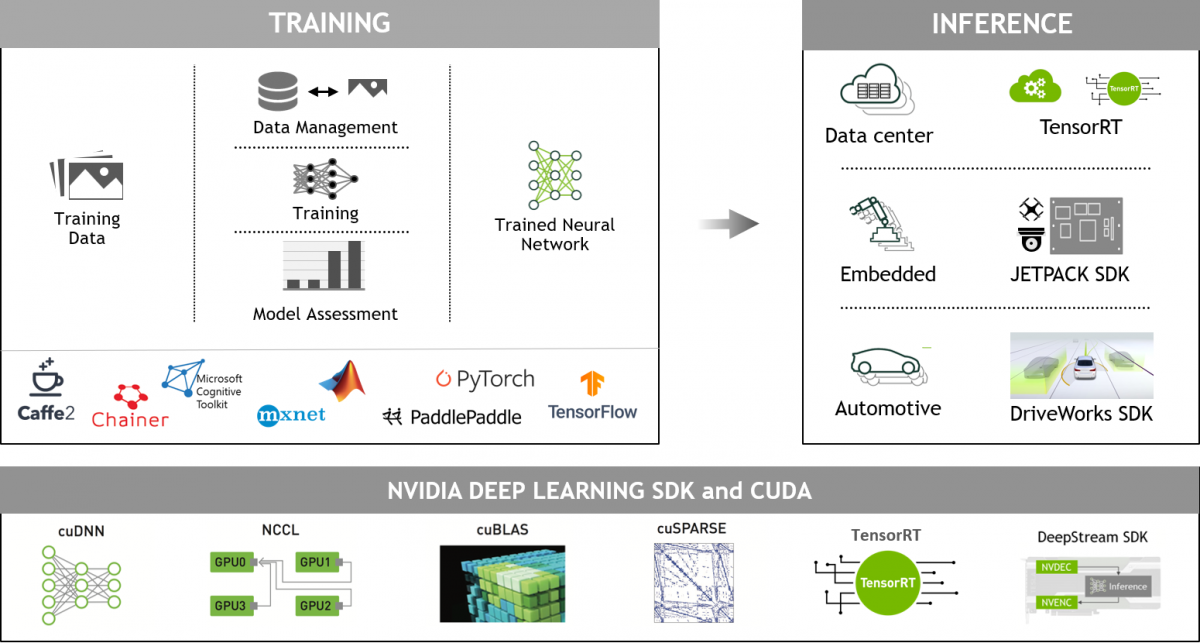

NVIDIA GPU-Accelerated Deep Learning Frameworks

GPU-accelerated deep learning frameworks offer the flexibility to design and train custom deep neural networks and provide interfaces to commonly used programming languages such as Python and C/C++. Widely used deep learning frameworks such as MXNet, PyTorch, TensorFlow and others rely on NVIDIA GPU-accelerated libraries to deliver high-performance, multi-GPU-accelerated training.

Next Steps

- For more details on the NVIDIA Deep Learning Examples model architectures, example code, and how to set up an end-to-end data processing, training, and inference pipeline on a GPU, please refer to the DLRM developer blog and the NVIDIA GPU-accelerated DL model portfolio under /PyTorch/Recommendation/DLRM. In addition, DLRM forms part of NVIDIA Merlin.

- Blog: What's a Recommender System?

- Introduction to NVIDIA Merlin (Blog)

- Introduction to HugeCTR (Recorded Talk)

- Accelerated Wide and Deep Pipeline (Blog)

- Building Recommender Systems Faster Using Jupyter Notebooks from NGC (Blog)

- Recurrent Neural Network

- Accelerating ETL for Recommender Systems on NVIDIA GPUs with NVTabular

- Optimizing the Deep Learning Recommendation Model on NVIDIA GPUs

- Building Intelligent Recommender Systems (Deep Learning Workshop)

- Accelerating Wide & Deep Recommender Inference on GPUs

- https://www.nvidia.com/en-us/on-demand/session/gtcfall20-a21350/

- Achieving High-Quality Search and Recommendation Results with DeepNLP