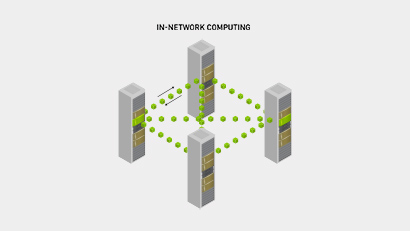

Built for today’s business continuity needs and simplified disaster recovery, MetroX-2 enables aggregated data and storage networking over a single, consolidated fabric. As a cost-effective, power-efficient, and scalable long-haul solution, NVIDIA MetroX-2 guarantees high-performance, high-volume data sharing between remote InfiniBand sites, all easily managed as a single unified network fabric.

NVIDIA Mellanox MetroX-2

Bring together remote resources with expanded InfiniBand interconnectivity