TensorFlow is a leading open-source library designed for developing and deploying state-of-the-art machine learning applications.

TensorFlow

What Is TensorFlow?

Heavily used by data scientists, software developers, and educators, TensorFlow is an open-source platform for machine learning using data flow graphs. Nodes in the graph represent mathematical operations, while the graph edges represent the multidimensional data arrays (tensors) that flow between them. This flexible architecture allows machine learning algorithms to be described as a graph of connected operations. These graphs can be trained and executed on CPUs, GPUs, and TPUs across a range of platforms, from portable devices to desktops to high-end servers, without rewriting code. Because the same tools work across these targets, programmers with different backgrounds can collaborate on a shared toolset. The Google Brain team originally developed TensorFlow for machine learning and deep neural network (DNN) research, but the system is general enough to apply to a wide variety of other domains.
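
To make the graph idea concrete, here is a minimal sketch in plain Python. It is an illustration of the concept only and not how TensorFlow is implemented: the `Node` and `Constant` classes are hypothetical names invented for this example, and scalars stand in for tensors.

```python
# A toy dataflow graph: nodes are operations, edges carry values between them.
# Conceptual illustration only; Node and Constant are hypothetical helpers.
import operator


class Node:
    """One operation in the graph, fed by the outputs of other nodes."""

    def __init__(self, op, *inputs):
        self.op = op          # callable applied to the input values
        self.inputs = inputs  # upstream nodes (the incoming edges)

    def evaluate(self):
        # Pull values along the incoming edges, then apply the operation.
        values = [node.evaluate() for node in self.inputs]
        return self.op(*values)


class Constant(Node):
    """A source node that simply emits a fixed value."""

    def __init__(self, value):
        super().__init__(lambda: value)


# Describe the computation (a + b) * c as a graph; nothing is computed yet.
a, b, c = Constant(2.0), Constant(3.0), Constant(4.0)
total = Node(operator.add, a, b)
result_node = Node(operator.mul, total, c)

# Evaluating the final node pushes data through the connected operations.
result = result_node.evaluate()
print(result)
```

Separating the description of the computation from its execution is what lets a framework decide later where and how each operation runs.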

How TensorFlow Works

The TensorFlow workflow has three distinct parts: preprocessing the data, building the model, and training the model to make predictions. The framework takes data in as multidimensional arrays called tensors and can execute in two ways. The first is to build a computational graph that defines the dataflow for training the model. The second, often more intuitive, is eager execution, which follows imperative programming principles and evaluates operations immediately. Since TensorFlow 2.0, eager execution is the default, and graphs are typically built by wrapping Python functions with tf.function.
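
The two execution styles can be compared side by side. The sketch below assumes TensorFlow 2.x is installed; the function name `matmul_graph` and the sample values are made up for illustration.

```python
import tensorflow as tf

# A small 2x2 tensor used as input for both execution styles.
x = tf.constant([[1.0, 2.0], [3.0, 4.0]])

# Eager execution: the operation runs immediately and returns a concrete value.
eager_result = tf.matmul(x, x)


# Graph execution: tf.function traces the Python function into a graph,
# which TensorFlow can then optimize and reuse on later calls.
@tf.function
def matmul_graph(t):
    return tf.matmul(t, t)


graph_result = matmul_graph(x)

print(eager_result.numpy())
print(graph_result.numpy())
```

Both calls compute the same matrix product; the difference lies in when and how the operations are scheduled.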

Training is generally done on a desktop or in a data center, and in both cases it can be sped up by placing tensors on a GPU. Trained models can then run on a range of platforms, from desktop to mobile to the cloud.
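
As a rough sketch of device placement, the snippet below assumes TensorFlow 2.x and falls back to the CPU when no GPU is visible. The tensor sizes are arbitrary and chosen only for illustration.

```python
import tensorflow as tf

# Ask TensorFlow which GPUs it can see; the list is empty on CPU-only machines.
gpus = tf.config.list_physical_devices("GPU")
device = "/GPU:0" if gpus else "/CPU:0"

# Tensors created inside this scope are placed on the chosen device,
# and the matrix multiplication runs there as well.
with tf.device(device):
    a = tf.random.uniform((256, 256))
    b = tf.random.uniform((256, 256))
    product = tf.matmul(a, b)

# Report where the result actually lives.
print(product.device)
```

TensorFlow normally picks a GPU automatically when one is available, so the explicit scope is mainly useful when you want control over placement.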

TensorFlow also includes many supporting features. One example is TensorBoard, the unified visualization framework for TensorFlow and Keras, which lets users visually monitor the training process, the underlying computational graphs, and metrics in order to debug runs and evaluate model performance.

Keras is a high-level API that runs on top of TensorFlow. It builds on TensorFlow’s abstractions by providing a simplified API for building models for common use cases. The driving idea behind the API is to get from an idea to a result in as little time as possible.
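
A minimal sketch of that idea-to-result path is shown below, assuming TensorFlow 2.x with its bundled Keras and NumPy. The synthetic dataset, layer sizes, and training settings are arbitrary assumptions made for this example.

```python
import numpy as np
import tensorflow as tf

# Synthetic data: 64 samples with 4 features each; the label is 1 when the
# feature sum is positive. This dataset is invented purely for illustration.
rng = np.random.default_rng(0)
features = rng.normal(size=(64, 4)).astype("float32")
labels = (features.sum(axis=1, keepdims=True) > 0).astype("float32")

# Define a small classifier by stacking layers.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(4,)),
    tf.keras.layers.Dense(8, activation="relu"),
    tf.keras.layers.Dense(1, activation="sigmoid"),
])

# Configure training, fit briefly, and predict on a few samples.
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])
model.fit(features, labels, epochs=2, batch_size=16, verbose=0)
predictions = model.predict(features[:5], verbose=0)

print(predictions.shape)
```

Defining, compiling, training, and predicting take only a handful of calls, which is the kind of abstraction the Keras API aims for.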

Benefits of TensorFlow

TensorFlow can be used to develop models for various tasks, including natural language processing, image recognition, handwriting recognition, and computational simulations such as solving partial differential equations.

The key benefits of TensorFlow are its ability to execute low-level operations across many acceleration platforms, automatic computation of gradients, production-level scalability, and interoperable graph export. With Keras as a high-level API and eager execution as an alternative to the dataflow paradigm, developers can choose the programming style that suits them.
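
Automatic gradient computation can be seen in a few lines. This sketch assumes TensorFlow 2.x; the function y = x² + 2x and the starting value are chosen only for illustration.

```python
import tensorflow as tf

# A trainable scalar; gradients are tracked with respect to variables.
x = tf.Variable(3.0)

# Operations executed inside the tape's context are recorded for differentiation.
with tf.GradientTape() as tape:
    y = x ** 2 + 2.0 * x

# dy/dx = 2x + 2, evaluated by TensorFlow at x = 3.
grad = tape.gradient(y, x)
print(grad.numpy())
```

The same mechanism scales from this scalar case to the gradients of a loss with respect to every weight in a model, which is what training relies on.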

As the original developer of TensorFlow, Google still strongly backs the library and has catalyzed the rapid pace of its development. For example, Google has created an online hub for sharing the many different models created by users.

TensorFlow-Specific Business Use Cases

- Image processing and video detection. Airplane manufacturing giant Airbus is using TensorFlow to extract and analyze information from satellite images to deliver valuable real-time information to clients.

- Time series algorithms. Kakao uses TensorFlow to predict the completion rate of ride-hailing requests.

- Tremendous scale capabilities. NERSC scaled a scientific deep learning application to more than 27,000 NVIDIA V100 Tensor Core GPUs using TensorFlow.

- Modeling. Using TensorFlow for deep transfer learning and generative modeling, PayPal has been able to recognize complex, temporally varying fraud patterns while improving the experience of legitimate customers through expedited customer identification.

- Text recognition. Swisscom’s custom-built TensorFlow model improved business by classifying text and determining the intent of customers upon receiving calls.

- Tweet prioritization. Twitter used TensorFlow to build its Ranked Timeline, ensuring that users don’t miss their most important tweets, even when following thousands of users.

Why TensorFlow Matters to You

Data scientists

TensorFlow offers many different routes to developing models, so a suitable tool is usually available for expressing innovative ideas and novel algorithms quickly. Because it is one of the most common libraries for developing machine learning models, it’s typically easy to find TensorFlow code from previous researchers when trying to replicate their work, which saves time otherwise lost to boilerplate and redundant code.

Software developers

TensorFlow can run on a wide variety of common hardware platforms and operating environments. With the release of TensorFlow 2.0 in late 2019, it became easier to deploy TensorFlow models on a greater variety of platforms. The interoperability of models created with TensorFlow simplifies deployment.

TensorFlow and NVIDIA

Graphics processing units, or GPUs, with their massively parallel architecture consisting of thousands of small efficient cores, can launch thousands of parallel threads simultaneously to supercharge compute-intensive tasks.

A decade ago, researchers discovered that GPUs are very adept at matrix operations, as well as algebraic calculations, and deep learning relies heavily on both of them.

TensorFlow runs up to 50% faster on NVIDIA Pascal GPUs and scales well across multiple GPUs, so models can be trained in hours instead of days.

TensorFlow is written in optimized C++ and uses the NVIDIA® CUDA® Toolkit, enabling models to run on GPUs at training and inference time for massive speedups.

TensorFlow GPU support requires several drivers and libraries. To simplify installation and avoid library conflicts, it’s recommended to use a TensorFlow Docker image with GPU support. This setup requires only the NVIDIA GPU drivers and an installation of NVIDIA-docker. Users can pull containers from NGC (NVIDIA GPU Cloud) that are preconfigured with pretrained models and TensorFlow library support.

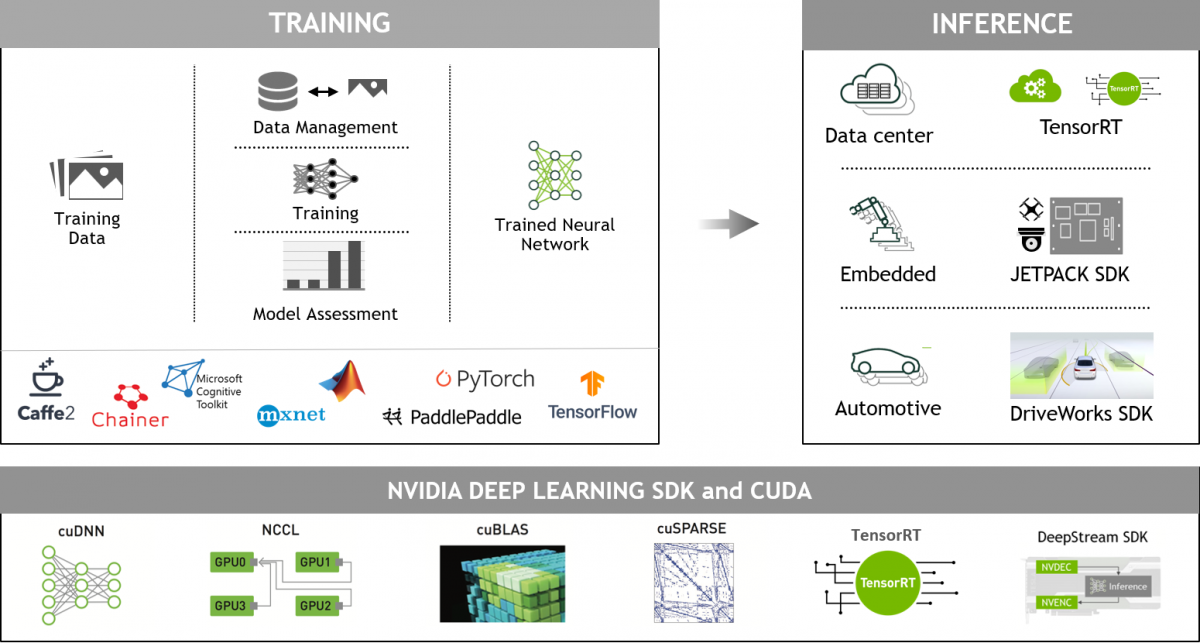

NVIDIA Deep Learning for Developers

GPU-accelerated deep learning frameworks offer flexibility to design and train custom deep neural networks and provide interfaces to commonly used programming languages such as Python and C/C++. Widely used deep learning frameworks such as MXNet, PyTorch, TensorFlow, and others rely on NVIDIA GPU-accelerated libraries to deliver high-performance, multi-GPU accelerated training.

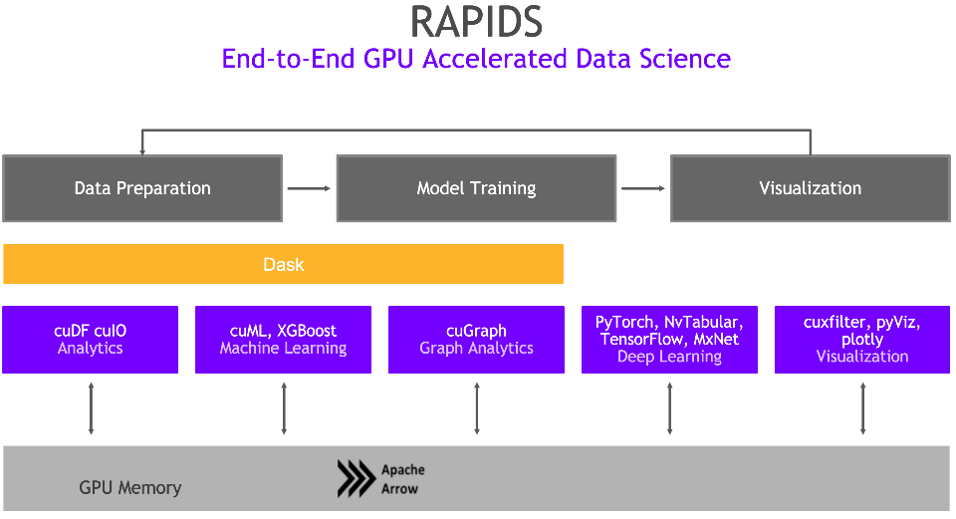

NVIDIA GPU-Accelerated, End-to-End Data Science

The NVIDIA RAPIDS™ suite of open-source software libraries, built on CUDA-X AI, gives you the ability to execute end-to-end data science and analytics pipelines entirely on GPUs. It relies on NVIDIA CUDA primitives for low-level compute optimization, but exposes that GPU parallelism and high-bandwidth memory speed through user-friendly Python interfaces.

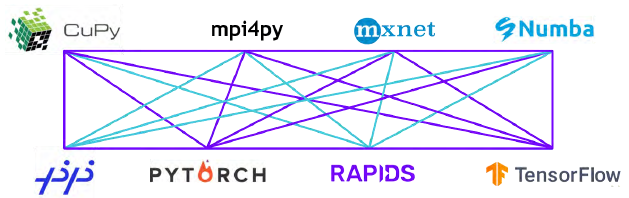

With the RAPIDS GPU DataFrame, data can be loaded onto GPUs using a Pandas-like interface, and then used for various connected machine learning and graph analytics algorithms without ever leaving the GPU. This level of interoperability is made possible through libraries like Apache Arrow and allows acceleration for end-to-end pipelines—from data prep to machine learning to deep learning.

RAPIDS supports device memory sharing between many popular data science libraries. This keeps data on the GPU and avoids costly copying back and forth to host memory.

Next Steps

- Learn how to get started with GPU-Accelerated TensorFlow

- Learn about running GPU-accelerated TensorFlow in a container

- Read NVIDIA Developer blogs about TensorFlow

- Find out about Accelerating TensorFlow on NVIDIA A100 GPUs

- Read the TensorFlow User Guide