Computer vision is the field that enables devices to acquire, process, analyze, and understand digital images and videos and to extract useful information from them.

Computer Vision

What is Computer Vision?

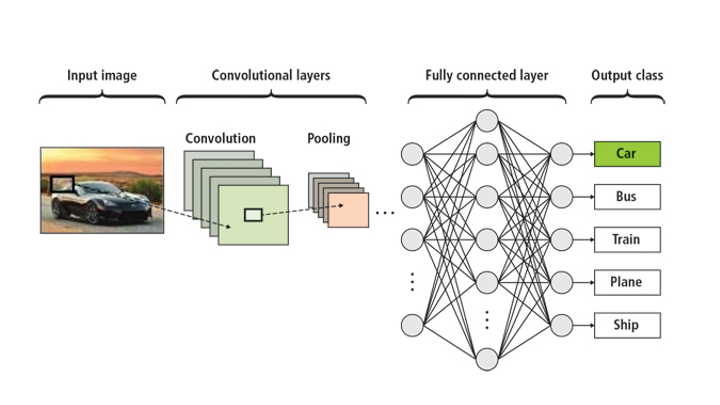

The primary goal of computer vision is to understand the content of videos and still images and to derive useful information from them to solve an ever-widening array of problems. As a subfield of artificial intelligence (AI) that relies heavily on deep learning, computer vision trains convolutional neural networks (CNNs) to approximate human vision capabilities for applications. This training can target specific tasks such as segmentation, classification, and detection, using images and videos as data.

Convolutional neural networks (CNNs) can perform segmentation, classification, and detection for a myriad of applications:

- Segmentation: Image segmentation assigns each pixel to a category, such as car, road, or pedestrian. It’s widely used in self-driving vehicle applications, including the NVIDIA DRIVE™ software stack, to show roads, cars, and people. Think of it as a visualization technique that makes what computers do easier for humans to understand.

- Classification: Image classification is used to determine what’s in an image. Neural networks can be trained to identify dogs or cats, for example, or many other things with a high degree of precision.

- Detection: Image detection allows computers to localize where objects exist. In many applications, the CNN puts a rectangular bounding box around each region of interest so that the box fully contains the object. A detector might also be trained to see where cars or people are within an image.
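
To make the link between per-pixel segmentation and bounding-box detection concrete, here is a minimal sketch in plain Python. It assumes a tiny hand-written label mask (the label values 0, 1, and 2 and the helper name `bounding_box` are hypothetical, chosen only for illustration) and derives the smallest rectangle that fully contains each labeled object. A trained detector predicts boxes directly from image data, so this is not how a CNN produces them; it only shows what the outputs of the two tasks look like.

```python
def bounding_box(mask, label):
    """Return (row_min, col_min, row_max, col_max) enclosing every pixel
    equal to `label`, or None if the label does not appear in the mask."""
    rows = [r for r, row in enumerate(mask) if label in row]
    if not rows:
        return None
    cols = [c for row in mask for c, value in enumerate(row) if value == label]
    return (min(rows), min(cols), max(rows), max(cols))


# Hypothetical 5x6 segmentation mask: 0 = road, 1 = car, 2 = pedestrian.
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 1, 0, 2],
    [0, 0, 0, 0, 0, 2],
    [0, 0, 0, 0, 0, 0],
]

# Classification-style question: which categories appear at all?
labels_present = sorted({value for row in mask for value in row})

# Detection-style question: where is each object?
car_box = bounding_box(mask, 1)
pedestrian_box = bounding_box(mask, 2)
```

Here `car_box` is `(1, 1, 2, 3)` and `pedestrian_box` is `(2, 5, 3, 5)`, both given as inclusive row and column indices.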

Segmentation, Classification, and Detection

| Segmentation | Classification | Detection |
| --- | --- | --- |
| Good at delineating objects | Is it a cat or a dog? | Where does it exist in space? |
| Used in self-driving vehicles | Classifies with precision | Recognizes things for safety |

Why Does Computer Vision Matter?

Computer vision has numerous applications in sports, automotive, agriculture, retail, banking, construction, insurance, and beyond. AI-driven machines of all types are gaining eyes like ours, thanks to convolutional neural networks (CNNs), the image crunchers that machines now use to identify objects. CNNs are today’s eyes of autonomous vehicles, oil exploration, and fusion energy research. They can also help spot diseases quickly in medical imaging and save lives.

Traditional computer vision and image processing techniques have been used for decades in numerous applications and research work. However, modern AI techniques based on artificial neural networks have raised accuracy, and advances in GPU-based high-performance computing have made superhuman accuracy achievable on some tasks. Together, these developments have led to widespread adoption across industries like transportation, retail, manufacturing, healthcare, and financial services.

Whether traditional or AI-based, computer vision systems can be better than humans at classifying images and videos into fine-grained categories and classes, such as minute changes over time in computerized axial tomography (CAT) scans. In this sense, computer vision automates tasks that humans could potentially do, but with far greater accuracy and speed.

With the wide range of current and potential applications, it isn’t surprising that growth projections for computer vision technologies and solutions are prodigious. One market research survey maintains this market will grow a stunning 47% annually through 2023, when it will reach $25 billion globally. In all of computer science, computer vision stands among the hottest and most active areas of research and development.

How Does Computer Vision Work?

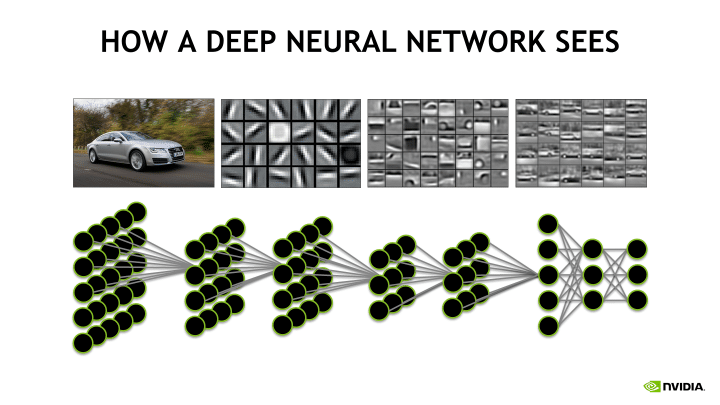

Computer vision analyzes images and then creates numerical representations of what it ‘sees’ using a convolutional neural network (CNN). A CNN is a class of artificial neural network that uses convolutional layers to filter inputs for useful information. The convolution operation combines input data (a feature map) with a convolution kernel (a filter) to form a transformed feature map. The filters in the convolutional layers (conv layers) are learned parameters, adjusted during training to extract the most useful information for a specific task, so convolutional networks adapt automatically to find the best features for that task. For example, a CNN would filter for information about the shape of an object when confronted with a general object recognition task but would extract the color of the bird when faced with a bird recognition task. This reflects what the network learns from data: different classes of objects have different shapes, but different types of birds are more likely to differ in color than in shape.
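
The convolution operation described above can be sketched in a few lines of plain Python. This is an illustrative sketch, not a framework implementation: the function name `convolve2d`, the 4x4 image, and the hand-picked edge kernel are all assumptions made for the example. In a real CNN the kernel values are learned during training rather than written by hand. As in most deep learning frameworks, the kernel is applied without flipping (strictly speaking, a cross-correlation), with no padding and a stride of 1.

```python
def convolve2d(feature_map, kernel):
    """Slide `kernel` over `feature_map` (no padding, stride 1) and return
    the transformed feature map as a list of lists."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(feature_map) - kh + 1
    out_w = len(feature_map[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Multiply the kernel with the window under it and sum the result.
            total = 0
            for a in range(kh):
                for b in range(kw):
                    total += feature_map[i + a][j + b] * kernel[a][b]
            row.append(total)
        output.append(row)
    return output


# Hypothetical 4x4 grayscale image: dark left half, bright right half.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Hand-picked kernel that responds to dark-to-bright vertical edges.
kernel = [
    [-1, 1],
    [-1, 1],
]

edges = convolve2d(image, kernel)
```

The result `edges` is `[[0, 18, 0], [0, 18, 0], [0, 18, 0]]`: the filter responds only in the column where the brightness changes, which is the kind of feature a conv layer can learn to pick out.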

Industry Use Cases for Computer Vision

Use cases of computer vision include image recognition, image classification, video labeling, and virtual assistants. Some of the more popular and prominent use cases for computer vision include:

- Medicine. Medical image processing involves the speedy extraction of vital image data to help properly diagnose a patient, including rapid detection of tumors and hardening of the arteries. While computer vision cannot by itself be trusted to provide diagnoses, it is an invaluable part of modern medical diagnostic techniques, at minimum reinforcing what physicians think and, increasingly, providing information physicians otherwise would not have seen.

- Autonomous vehicles. In this very active area of computer vision research, computer vision solutions can either take over the operation of a vehicle entirely or significantly enhance it. Common applications already at work include early warning systems in cars.

- Industrial uses. Manufacturing abounds with current and potential uses of computer vision solutions in support of manufacturing processes. Current uses include quality control, in which computer vision systems inspect parts and finished products for defects. In agriculture, computer vision systems use optical sorting to remove unwanted materials from food products.

Data scientists and computer vision

Python is the most popular programming language for machine learning (ML), and most data scientists are familiar with its ease of use and its large store of libraries, most of them free and open-source. Data scientists use Python in ML systems for data mining and data analysis, as Python provides support for a broad range of ML models and algorithms. Given the relationship between ML and computer vision, data scientists can apply the expanding universe of computer vision applications in businesses of all types to extract vital information from stores of images and videos and augment data-driven decision-making.

Accelerating Convolutional Neural Networks using GPUs

Architecturally, the CPU is composed of just a few cores with lots of cache memory that can handle a few software threads at a time. In contrast, a GPU is composed of hundreds of cores that can handle thousands of threads simultaneously.

Because neural nets are created from large numbers of identical neurons, they’re highly parallel by nature. This parallelism maps naturally to GPUs, which provide a data-parallel arithmetic architecture and a significant computation speed-up over CPU-only training. This type of architecture carries out a similar set of calculations on an array of image data. The single-instruction, multiple-data (SIMD) capability of the GPU makes it suitable for running computer vision tasks, which often involve similar calculations operating on an entire image. Specifically, NVIDIA GPUs significantly accelerate computer vision operations, freeing up CPUs for other jobs. Furthermore, multiple GPUs can be used on the same machine, creating an architecture capable of running multiple computer vision algorithms in parallel.
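
The data-parallel idea can be illustrated with a small Python sketch. It assumes a hypothetical 4x2 RGB image and a simple average-based grayscale conversion (the helper names `to_gray` and `gray_row` are made up for this example). The point is only that each output pixel depends on one input pixel, so rows can be handed to separate workers in any order and still give the same result. Python threads are used here purely to show that independence; they do not reproduce GPU hardware or its speed-up.

```python
from concurrent.futures import ThreadPoolExecutor


def to_gray(pixel):
    """Average the red, green, and blue channels of one pixel."""
    r, g, b = pixel
    return (r + g + b) // 3


def gray_row(row):
    """Apply the same calculation to every pixel in one row."""
    return [to_gray(pixel) for pixel in row]


# Hypothetical 4x2 RGB image.
image = [
    [(255, 0, 0), (0, 255, 0)],
    [(0, 0, 255), (30, 60, 90)],
    [(10, 10, 10), (255, 255, 255)],
    [(0, 0, 0), (90, 30, 0)],
]

# Sequential version: one row after another.
sequential = [gray_row(row) for row in image]

# Parallel version: rows are independent, so they can be processed
# concurrently; pool.map returns results in the original row order.
with ThreadPoolExecutor(max_workers=4) as pool:
    parallel = list(pool.map(gray_row, image))
```

Both `sequential` and `parallel` hold the same grayscale image, which is the property that lets this kind of per-pixel work be spread across many cores.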

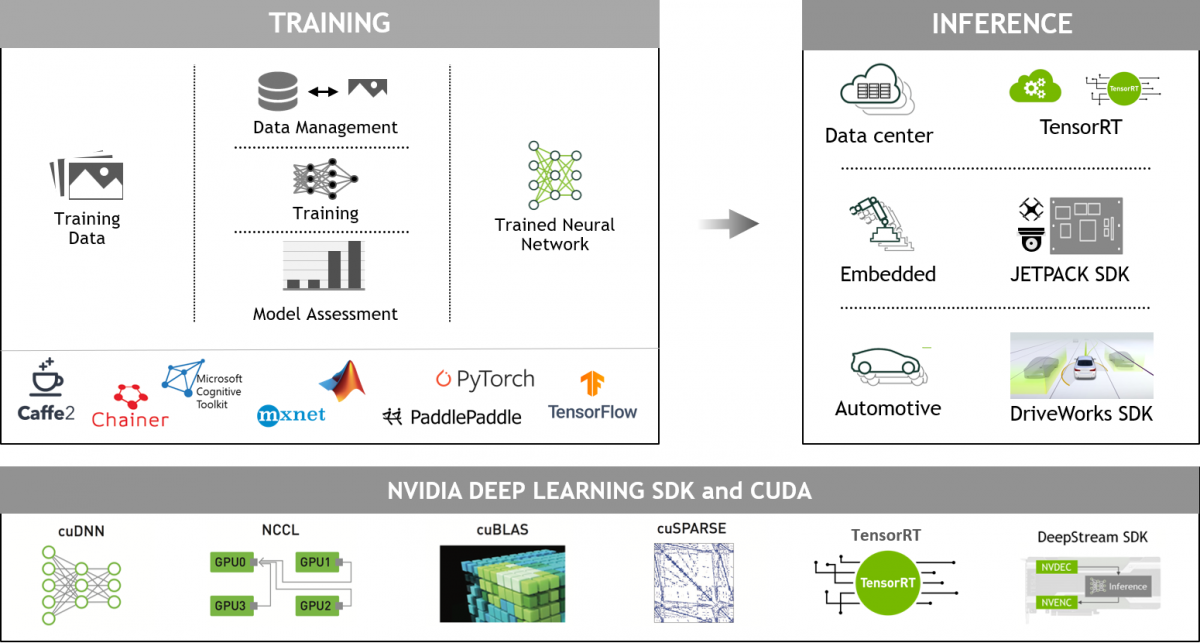

NVIDIA GPU-Accelerated Deep Learning Frameworks

GPU-accelerated deep learning frameworks provide interfaces to commonly used programming languages such as Python. They also provide flexibility to easily create and explore custom CNNs and DNNs, while delivering the high speed needed for both experiments and industrial deployment. NVIDIA CUDA-X AI accelerates widely used deep learning frameworks such as Caffe, the Microsoft Cognitive Toolkit (CNTK), TensorFlow, Theano, and Torch, as well as many other machine learning applications. The deep learning frameworks run faster on GPUs and scale across multiple GPUs within a single node. To use the frameworks with GPUs for convolutional neural network training and inference, NVIDIA provides cuDNN and TensorRT™, respectively. cuDNN and TensorRT provide highly tuned implementations of standard routines such as convolution, pooling, normalization, and activation layers.
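
As a rough illustration of what two of these standard routines compute, the sketch below implements a ReLU activation and 2x2 max pooling in plain Python. It is not the cuDNN or TensorRT API; the function names `relu` and `max_pool_2x2` and the sample feature map are assumptions made for this example, and the tuned GPU libraries perform the same kind of arithmetic far more efficiently.

```python
def relu(feature_map):
    """Activation: replace every negative value with zero."""
    return [[max(0, value) for value in row] for row in feature_map]


def max_pool_2x2(feature_map):
    """Pooling: keep the largest value in each non-overlapping 2x2 window.
    Assumes the height and width are both even."""
    pooled = []
    for i in range(0, len(feature_map), 2):
        row = []
        for j in range(0, len(feature_map[0]), 2):
            window = (
                feature_map[i][j],
                feature_map[i][j + 1],
                feature_map[i + 1][j],
                feature_map[i + 1][j + 1],
            )
            row.append(max(window))
        pooled.append(row)
    return pooled


# Hypothetical 4x4 feature map, such as the output of a conv layer.
feature_map = [
    [1, -2, 3, 0],
    [-4, 5, -6, 7],
    [8, -9, 0, -1],
    [2, 3, -4, -5],
]

activated = relu(feature_map)
pooled = max_pool_2x2(activated)
```

After these two steps, `pooled` is `[[5, 7], [8, 0]]`: the 4x4 map has been reduced to 2x2 while keeping the strongest response in each region.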

A step-by-step NVCaffe installation and usage guide is available, as is a fast C++/CUDA implementation of convolutional neural networks.

To develop and deploy a vision model quickly, NVIDIA offers the DeepStream SDK for vision AI developers. It also includes TAO Toolkit to create accurate and efficient AI models for the computer vision domain.

Next Steps

For a more technical deep dive and news on other computer vision topics, including CNNs, check out our developers site.

To learn more:

- Computer vision and image processing algorithms are computationally intensive. With CUDA acceleration, applications can achieve interactive video frame-rate performance. The Computer Vision Solutions Landing Page highlights our work in computer vision and how we can enable the entire workflow.

- The NVIDIA Jetson Nano™ Developer Kit is a small, powerful computer that lets you run multiple neural networks in parallel for applications like image classification, object detection, segmentation, and speech processing in an easy-to-use platform that runs in as little as 5 watts.

- NVIDIA provides optimized software stacks to accelerate training and inference phases of the deep learning workflow. Learn more on the NVIDIA Deep Learning Home Page.

- NVIDIA’s Deep Learning Institute (DLI) offers courses such as Getting Started with Image Segmentation and Fundamentals of Deep Learning for Computer Vision.

For more technical information, read:

- Learn how a CNN detects brain hemorrhages with accuracy rivaling experts

- Deep Learning in a Nutshell: Core Concepts

- Understanding Convolution in Deep Learning

- What's the Difference Between a CNN and an RNN?

- Convolutional Neural Network (CNN)

- Blog: What Is Computer Vision?

- Computer Vision & Machine Vision blogs

- Building Image Segmentation Faster Using Jupyter Notebooks from NGC